I have a C7000 blade enclosure with NAS4Free NFS storage.

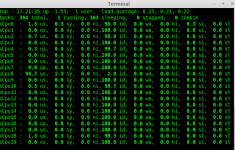

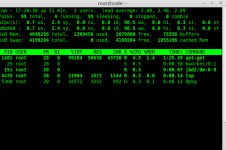

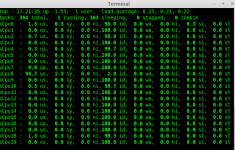

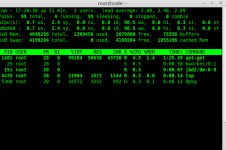

The issue is that upgrades on my VMs are taking 35 minutes, but on my crappy T400 laptop the same upgrade takes less than 3 minutes. Sigh. The one blade on the C7000 running the Proxmox host shows 0 iowait, while the VM shows 95+% iowait during the upgrade (see images at bottom of post). The host on the blade is configured with local SSD storage; the VM is on qcow2 NFS storage.
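To put a number on the iowait difference between host and VM instead of reading it off a graph, something like the following could be run on both. It is only a sketch: it assumes a Linux guest and host, reads the aggregate `cpu` line of `/proc/stat` twice, and reports the iowait share of the ticks in between. The function names are mine, not part of any Proxmox tool.

```python
import time


def parse_cpu_line(line: str) -> list:
    """Parse the aggregate 'cpu' line of /proc/stat into integer tick counters."""
    fields = line.split()
    if not fields or fields[0] != "cpu":
        raise ValueError("expected the aggregate 'cpu' line from /proc/stat")
    return [int(value) for value in fields[1:]]


def iowait_percent(before: str, after: str) -> float:
    """Share of CPU time spent in iowait between two samples, in percent."""
    first, second = parse_cpu_line(before), parse_cpu_line(after)
    deltas = [b - a for a, b in zip(first, second)]
    # Field order: user nice system idle iowait irq softirq steal guest guest_nice.
    # guest and guest_nice are already counted in user and nice, so sum only
    # the first eight fields to avoid double counting.
    total = sum(deltas[:8])
    return 100.0 * deltas[4] / total if total else 0.0


def read_cpu_line(path: str = "/proc/stat") -> str:
    """Return the first line of /proc/stat (the aggregate 'cpu' line)."""
    with open(path) as handle:
        return handle.readline()


def sample_iowait(seconds: float = 5.0) -> float:
    """Sample /proc/stat twice, 'seconds' apart, and return the iowait percentage."""
    before = read_cpu_line()
    time.sleep(seconds)
    return iowait_percent(before, read_cpu_line())
```

Calling `sample_iowait()` inside the VM during an upgrade, and on the host at the same time, should give two directly comparable figures.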

NETWORK

1 Gigabit network; I do NOT have jumbo frames enabled.
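For what it's worth, here is a rough back-of-the-envelope sketch of what jumbo frames could buy on a 1 Gbit/s link in the best case. It assumes standard Ethernet framing without VLAN tags and IPv4/TCP headers without options, and it only looks at streaming throughput, not per-request latency.

```python
LINK_BITS_PER_SECOND = 1_000_000_000  # 1 Gbit/s link, as stated in the post

# Per-frame overhead on the wire, in bytes (assumption: untagged Ethernet):
# preamble and start delimiter (8) + header (14) + FCS (4) + inter-frame gap (12)
ETHERNET_OVERHEAD = 8 + 14 + 4 + 12
# IPv4 header (20) + TCP header (20), assuming no IP or TCP options
IP_TCP_HEADERS = 20 + 20


def tcp_payload_rate_mb(mtu: int) -> float:
    """Best-case TCP payload rate in MB/s (10**6 bytes/s) for a given MTU."""
    payload = mtu - IP_TCP_HEADERS
    wire_bytes = mtu + ETHERNET_OVERHEAD
    return LINK_BITS_PER_SECOND / 8 * payload / wire_bytes / 1e6


standard = tcp_payload_rate_mb(1500)
jumbo = tcp_payload_rate_mb(9000)
print(f"MTU 1500: {standard:.1f} MB/s, MTU 9000: {jumbo:.1f} MB/s")
print(f"best-case gain from jumbo frames: {100 * (jumbo / standard - 1):.1f}%")
```

Under these assumptions the ceiling moves from roughly 119 MB/s to roughly 124 MB/s, a gain of a few percent, which is nowhere near the 35-minute versus 3-minute gap.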

PROXMOX CLUSTER INFORMATION

Cluster of 3 Proxmox hosts. The /etc/hosts file on each host has been updated to include all three.

root@prox100:~# pveversion

pve-manager/4.0-48/0d8559d0 (running kernel: 4.2.2-1-pve)

64 GB RAM

2 x Intel X5660 processors

NAS INFORMATION

Version 9.1.0.1 - Sandstorm (revision 847)

Build date Sun Aug 18 03:49:41 CEST 2013

Platform OS FreeBSD 9.1-RELEASE-p5 (kern.osreldate: 901000)

Platform x64-embedded on Intel(R) Celeron(R) CPU G1610 @ 2.60GHz

Ram 8 GB

System ASRock Z77 Pro4

System bios American Megatrends Inc. version: P1.60 12/06/2012

System time

System uptime 39 minute(s) 3 second(s)

NAS 23% of 16.2TB

Total: 16.2T | Used: 2.59T | Free: 8.06T | State: ONLINE

6 x 3TB disks in RAIDZ2 (all TOSHIBA DT01ACA300)
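In case the numbers above look inconsistent (16.2T total, but used plus free is only about 10.65T), here is a quick sanity check. It assumes the "Total" figure is the raw pool size including parity, that "Used" and "Free" are usable space, and it ignores ZFS metadata, padding and reservations.

```python
TB = 10**12   # vendor terabyte, as printed on the disk label
TIB = 2**40   # binary tebibyte, which is what ZFS tools abbreviate as "T"


def raidz_capacity_tib(disks: int, disk_tb: float, parity: int) -> tuple:
    """Return (raw, usable) capacity in TiB for a single RAIDZ vdev."""
    raw = disks * disk_tb * TB / TIB
    usable = (disks - parity) * disk_tb * TB / TIB
    return raw, usable


# 6 x 3TB disks in RAIDZ2 (two disks' worth of parity), as in the post
raw, usable = raidz_capacity_tib(disks=6, disk_tb=3, parity=2)
print(f"raw: {raw:.2f} TiB, usable before ZFS overhead: {usable:.2f} TiB")
```

That gives about 16.4 TiB raw and about 10.9 TiB usable, which is in the same ballpark as the reported 16.2T and 2.59T + 8.06T.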

ON LOCAL STORAGE

root@prox100:~# pveperf

CPU BOGOMIPS: 128002.44

REGEX/SECOND: 1206298

HD SIZE: 7.01 GB (/dev/dm-0)

BUFFERED READS: 169.73 MB/sec

AVERAGE SEEK TIME: 0.20 ms

FSYNCS/SECOND: 572.80

DNS EXT: 105.45 ms

DNS INT: 68.47 ms (home.priv)

ON NFS STORAGE

root@prox100:~# pveperf /mnt/pve/NAS-isos/

CPU BOGOMIPS: 128002.44

REGEX/SECOND: 1227257

HD SIZE: 8361.39 GB (192.168.2.253:/mnt/NAS/isos)

FSYNCS/SECOND: 118.36

DNS EXT: 83.72 ms

DNS INT: 84.45 ms (home.priv)
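To cross-check the FSYNCS/SECOND figures independently of pveperf, a small write-and-fsync loop can be pointed at both storage paths. This is a rough sketch in the same spirit, not a reimplementation of pveperf; the 4 KiB block size and 3 second duration are arbitrary choices of mine. The `latency_ms` helper just converts a rate into the average time per fsync.

```python
import os
import tempfile
import time


def measure_fsyncs(directory: str, seconds: float = 3.0,
                   block: bytes = b"x" * 4096) -> float:
    """Append a small block and fsync it repeatedly; return fsyncs per second."""
    fd, path = tempfile.mkstemp(dir=directory)
    count = 0
    start = time.monotonic()
    try:
        while time.monotonic() - start < seconds:
            os.write(fd, block)
            os.fsync(fd)  # force the data to stable storage before continuing
            count += 1
        elapsed = time.monotonic() - start
    finally:
        os.close(fd)
        os.unlink(path)  # clean up the scratch file
    return count / elapsed if elapsed > 0 else 0.0


def latency_ms(fsyncs_per_second: float) -> float:
    """Average time per fsync in milliseconds for a given rate."""
    return 1000.0 / fsyncs_per_second


# Figures taken from the two pveperf runs above
local_ms = latency_ms(572.80)
nfs_ms = latency_ms(118.36)
print(f"local: {local_ms:.2f} ms per fsync, NFS: {nfs_ms:.2f} ms per fsync")
print(f"NFS is about {nfs_ms / local_ms:.1f}x slower per fsync")
```

For example, `measure_fsyncs("/mnt/pve/NAS-isos")` would test the NFS mount from the post. From the pveperf numbers alone, each fsync costs roughly 1.7 ms locally and roughly 8.4 ms over NFS, so a workload that issues many small synchronous writes one after another (a package upgrade may well be one) would feel that difference on every write.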

NAS SETTINGS

nas4free: ~ # sysctl -a | grep nfsd

kern.features.nfsd: 1

vfs.nfsd.disable_checkutf8: 0

vfs.nfsd.server_max_nfsvers: 3

vfs.nfsd.server_min_nfsvers: 2

vfs.nfsd.nfs_privport: 0

vfs.nfsd.enable_locallocks: 0

vfs.nfsd.issue_delegations: 0

vfs.nfsd.commit_miss: 0

vfs.nfsd.commit_blks: 0

vfs.nfsd.mirrormnt: 1

vfs.nfsd.minthreads: 48

vfs.nfsd.maxthreads: 48

vfs.nfsd.threads: 48

vfs.nfsd.request_space_used: 0

vfs.nfsd.request_space_used_highest: 1291212

vfs.nfsd.request_space_high: 13107200

vfs.nfsd.request_space_low: 8738133

vfs.nfsd.request_space_throttled: 0

vfs.nfsd.request_space_throttle_count: 0

NAME STATE READ WRITE CKSUM

NAS ONLINE 0 0 0

raidz2-0 ONLINE 0 0 0

ada0.nop ONLINE 0 0 0

ada1.nop ONLINE 0 0 0

ada2.nop ONLINE 0 0 0

ada3.nop ONLINE 0 0 0

ada4.nop ONLINE 0 0 0

ada5.nop ONLINE 0 0 0

errors: No known data errors

FWIW, VMware ESXi seemed fine when I was running it... nothing this slow.

I've read documentation till my eyes are sore...

1. I want to use qcow2 because of the snapshots.

2. The VMs are set up with virtio disks.

3. The network is set as virtio as well.

4. Processors are set to "host" with NUMA enabled.

5. I need to use the NAS for several reasons, but mostly because buying disks for all the blades would be insanely expensive, and having the same data store for the whole cluster is a must.

I am open to suggestions. I REALLY want Proxmox to work... I do NOT want to go back to VMware.

Thanks in advance.

Image of high iowait on the vm

Image of no iowait on the host
