Hi,

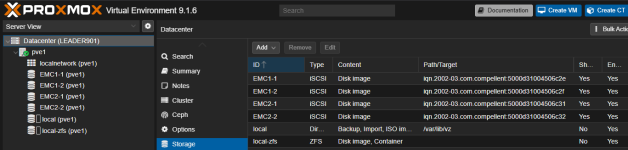

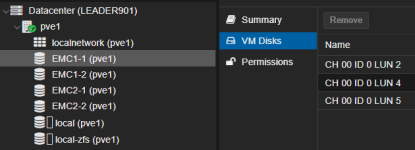

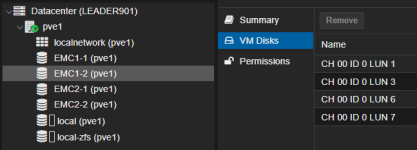

I have installed and tested the free, open-source Starwind Proxmox SAN storage plugin on a small test cluster (PVE 8) with shared block storage, and it works really well: thin provisioning, snapshots, automatic thin-disk extension, live migration, and linked clones. In the end it is a cluster-aware (shared) LVM-thin implementation that is SAN vendor neutral.

I'm just wondering why I have not found more information or feedback about it. It could eventually also be a good candidate or alternative for direct PVE integration.

Is anyone using this in a production environment? Any experience to share?

https://www.starwindsoftware.com/re...-proxmox-san-integration-configuration-guide/