Hi all,

I recently ran a series of CPU and memory benchmark comparisons in PVE and came across some unexpected results, specifically with the host CPU type performing worse than anticipated.

Environment

- AMD EPYC 9375F (32-Core)

- 384 GB RAM

- Storage:

  - Micron NVMe

  - Kioxia NVMe

- Proxmox VE 9.1 (fresh install on bare metal Supermicro server)

Setup / background

- Uninstalled VMware Tools from the Windows Server 2019 VM

- Migrated this VM from VMware to Proxmox

- Initially kept the original VM configuration and started the VM

- Installed the QEMU guest tools and VirtIO drivers, rebooted, and switched the network adapter model to VirtIO

- As a result, Windows detected a new Ethernet adapter and I lost my static network settings

- As expected, performance improved significantly due to better hardware

- Used AIDA64 for benchmarking

Tested CPU types

- x86-64-v2-AES

- x86-64-v4-AES

- host

- EPYC

- EPYC-Genoa

- EPYC-v4

Tests

I performed multiple benchmark runs per CPU type and compared:

- Memory throughput (read, write, copy)

- Cache performance (L1/L2/L3)

- Latency

- NUMA: enabled vs disabled

- C-States: enabled vs disabled
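For reference, the full test matrix implied by the list above can be enumerated with a short Python sketch. It assumes every CPU type is meant to be run under each NUMA/C-State combination (the observations only report some of these). C-States are controlled on the host (BIOS or kernel), not per VM, so in practice they would be switched between batches of runs. The function name `test_matrix` is hypothetical.

```python
from itertools import product

# CPU types exactly as listed in the post.
CPU_TYPES = ["x86-64-v2-AES", "x86-64-v4-AES", "host", "EPYC", "EPYC-Genoa", "EPYC-v4"]
# The two on/off factors from the test list. NUMA is a per-VM option;
# C-States are a host-level setting (assumption: toggled between batches).
NUMA = [True, False]
C_STATES = [True, False]


def test_matrix(cpu_types=CPU_TYPES):
    """Return every (cpu, numa, c_states) combination to benchmark (hypothetical helper)."""
    return [
        {"cpu": cpu, "numa": numa, "c_states": cs}
        for cpu, numa, cs in product(cpu_types, NUMA, C_STATES)
    ]


if __name__ == "__main__":
    matrix = test_matrix()
    print(len(matrix))  # 6 CPU types * 2 NUMA * 2 C-State settings = 24
```

With several runs per configuration, the matrix grows quickly, which is one reason to fix the C-State setting for a whole batch rather than per run.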

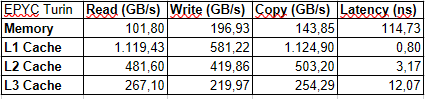

Benchmark results

Observations

- host CPU type consistently performed worse than expected, especially in:

  - Memory latency

  - Some throughput results

- x86-64-v4 and EPYC-based types often showed more stable or better results

- NUMA enabled:

  - No major impact (apart from the expected drop in throughput)

  - Slight improvement in latency

- NUMA enabled and C-States disabled:

  - Noticeable improvement in latency (up to 10%)

  - No significant change in throughput
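The "up to 10%" latency figure and the idea of "more stable" results can be made precise with two small helpers: the relative latency reduction versus a baseline, and the coefficient of variation (standard deviation divided by mean) across repeated runs. This is a minimal Python sketch, assuming the per-run AIDA64 readings are copied in by hand; the helper names are hypothetical and not part of AIDA64 or Proxmox.

```python
from statistics import mean, stdev


def summarize(runs):
    """Return (mean, coefficient of variation) for repeated benchmark runs.

    A lower coefficient of variation means more stable run-to-run results.
    With fewer than two runs there is no spread to measure, so CV is 0.0.
    """
    m = mean(runs)
    cv = stdev(runs) / m if len(runs) > 1 else 0.0
    return m, cv


def latency_improvement_pct(baseline_ns, tuned_ns):
    """Percent latency reduction of a tuned config relative to a baseline.

    Latency is lower-is-better, so a positive value is an improvement
    and a negative value is a regression.
    """
    return (baseline_ns - tuned_ns) * 100.0 / baseline_ns
```

Comparing the mean latency gap between `host` and an EPYC model against each type's coefficient of variation would show whether the difference is larger than the run-to-run noise.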

Questions

I'm aware that there are several threads about the host CPU type performing worse than expected. Still, I wonder why host behaves this way. Does anyone have deeper insight into why this happens?

What would you recommend as the preferred CPU type for EPYC-based Proxmox clusters in practice?

Thanks in advance.

McShadow