Can you post your network configuration on the affected host as well as the VM configuration of an affected VM? Preferably when the system is in the erroneous state:

Code:

cat /etc/network/interfaces

cat /etc/network/interfaces.d/sdn

ip a

qm config <VMID>

Yesterday, I changed my proxmox network configuration before I got your response.

I did this because I read online that it is not good practice to have a Proxmox PVID that differs from the native VLAN ID of the UniFi switch port.

If I understand correctly, leaving the Proxmox vmbr0 at its default PVID 1 while the UniFi switch port's native VLAN is 1610 effectively maps Proxmox's VLAN 1 onto UniFi's VLAN 1610 transparently, which could cause security issues through VLAN leakage.

Since yesterday my network configuration looks like this:

/etc/network/interfaces:

Code:

auto lo

iface lo inet loopback

iface nic0 inet manual

auto vmbr0

iface vmbr0 inet manual

bridge-ports nic0

bridge-stp off

bridge-fd 0

bridge-vlan-aware yes

bridge-vids 2-4094

bridge-pvid 1610

auto vmbr0.1610

iface vmbr0.1610 inet static

address 172.16.10.99/24

gateway 172.16.10.1

source /etc/network/interfaces.d/*

/etc/network/interfaces.d/sdn is empty.

I am unsure if this change is relevant to my issue.

Since the change, I had to edit untagged VM NICs to VLAN tag 1610 to make them get a DHCP lease on the correct VLAN (which I did not expect).

Before, my network configuration was basically default, with the single exception that `bridge-vids 2-4094` and `bridge-vlan-aware yes` were added under the vmbr0 configuration (standard VLAN-aware vmbr0 settings).

Concerning the VMs, I set up three for testing: two with two tagged VLAN NICs each and one with seven tagged VLAN NICs, all running AlmaLinux; see below for the configuration.

All can ping their respective interfaces' gateways successfully (`ping 192.168.15.1 -I<815_INTERFACE>`), when configuring my router firewall (pfSense) to allow such traffic.

What doesn't work is pinging a public IP from an arbitrary (otherwise functional) interface: `ping 8.8.8.8 -Iens1` doesn't work, even though my router firewall allows such traffic.

The two VMs with 2 NICs do succeed in sending outbound traffic (see below).

The VM with 7 NICs does not seem to allow any outgoing traffic (see below).

I read somewhere that this behavior is expected and related to the standard Linux routing logic of only having one upstream gateway.

But given how versatile Linux is, this must surely be possible, right? If so, how?

When hosting a DNS server I need outgoing forwarder / recursive DNS requests (to public servers like 8.8.8.8 or 1.1.1.1) to go out the same NIC that the DNS request came in on, which doesn't sound too hard.
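From what I have read so far, the usual way to get this behavior seems to be source-based policy routing: one extra routing table per interface, plus a rule that sends packets carrying that interface's source address to its table. Below is a minimal, untested sketch of what I think that would look like for the 7-NIC VM. The helper name `print_policy_routes` and the table numbers (starting at 100) are my own placeholders, and the script only prints the `ip` commands instead of applying them.

```shell
#!/bin/sh
# Dry run only: prints iproute2 commands for per-interface policy routing.
# Nothing is changed on the system; the output would have to be run as root.

# Hypothetical helper (name is my own). Reads "dev source-ip gateway subnet"
# lines on stdin and prints one routing table plus one rule per interface.
print_policy_routes() {
    table=100  # arbitrary starting table number (assumption)
    while read -r dev src gw net; do
        [ -n "$dev" ] || continue
        # On-link route so same-subnet traffic does not detour via the gateway
        echo "ip route replace $net dev $dev src $src table $table"
        # Default route via this interface's own gateway
        echo "ip route replace default via $gw dev $dev table $table"
        # Packets sourced from this interface's address use that table
        echo "ip rule add from $src/32 lookup $table"
        table=$((table + 1))
    done
}

# Addresses taken from the 7-NIC VM in this post
print_policy_routes <<'EOF'
ens1  10.35.10.53   10.35.10.1   10.35.10.0/24
ens18 172.16.10.53  172.16.10.1  172.16.10.0/24
ens19 192.168.16.53 192.168.16.1 192.168.16.0/24
ens20 192.168.20.53 192.168.20.1 192.168.20.0/24
ens21 192.168.10.53 192.168.10.1 192.168.10.0/24
ens22 192.168.15.53 192.168.15.1 192.168.15.0/24
ens23 172.16.20.53  172.16.20.1  172.16.20.0/24
EOF
```

Since all addresses come from DHCP, hard-coding them like this would break if a lease changes, and the rules would not survive a reboot unless they are persisted some other way. I have not verified whether this alone would fix the timeouts shown further down.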

The VM with 7 NICs has the following configuration:

`ip a`:

Code:

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens18: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:39:9a:14 brd ff:ff:ff:ff:ff:ff

altname enp6s18

altname enxbc2411399a14

inet 172.16.10.53/24 brd 172.16.10.255 scope global dynamic noprefixroute ens18

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe39:9a14/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: ens19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:e4:2d:46 brd ff:ff:ff:ff:ff:ff

altname enp6s19

altname enxbc2411e42d46

inet 192.168.16.53/24 brd 192.168.16.255 scope global dynamic noprefixroute ens19

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fee4:2d46/64 scope link noprefixroute

valid_lft forever preferred_lft forever

4: ens20: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:04:fe:1a brd ff:ff:ff:ff:ff:ff

altname enp6s20

altname enxbc241104fe1a

inet 192.168.20.53/24 brd 192.168.20.255 scope global dynamic noprefixroute ens20

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe04:fe1a/64 scope link noprefixroute

valid_lft forever preferred_lft forever

5: ens21: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:0e:73:e7 brd ff:ff:ff:ff:ff:ff

altname enp6s21

altname enxbc24110e73e7

inet 192.168.10.53/24 brd 192.168.10.255 scope global dynamic noprefixroute ens21

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe0e:73e7/64 scope link noprefixroute

valid_lft forever preferred_lft forever

6: ens22: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:2f:6b:d1 brd ff:ff:ff:ff:ff:ff

altname enp6s22

altname enxbc24112f6bd1

inet 192.168.15.53/24 brd 192.168.15.255 scope global dynamic noprefixroute ens22

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe2f:6bd1/64 scope link noprefixroute

valid_lft forever preferred_lft forever

7: ens23: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:16:a1:b9 brd ff:ff:ff:ff:ff:ff

altname enp6s23

altname enxbc241116a1b9

inet 172.16.20.53/24 brd 172.16.20.255 scope global dynamic noprefixroute ens23

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe16:a1b9/64 scope link noprefixroute

valid_lft forever preferred_lft forever

8: ens1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:6f:bb:3e brd ff:ff:ff:ff:ff:ff

altname enp7s1

altname enxbc24116fbb3e

inet 10.35.10.53/24 brd 10.35.10.255 scope global dynamic noprefixroute ens1

valid_lft 5197sec preferred_lft 5197sec

inet6 fe80::be24:11ff:fe6f:bb3e/64 scope link noprefixroute

valid_lft forever preferred_lft forever

Every NIC interface has gotten the correct DHCP lease.

My router firewall (pfSense) only shows ens1 (10.35.10.53) as up, maybe because of:

`ip route show default`:

Code:

default via 10.35.10.1 dev ens1 proto dhcp src 10.35.10.53 metric 100

default via 172.16.10.1 dev ens18 proto dhcp src 172.16.10.53 metric 101

default via 192.168.16.1 dev ens19 proto dhcp src 192.168.16.53 metric 102

default via 192.168.20.1 dev ens20 proto dhcp src 192.168.20.53 metric 103

default via 192.168.10.1 dev ens21 proto dhcp src 192.168.10.53 metric 104

default via 192.168.15.1 dev ens22 proto dhcp src 192.168.15.53 metric 105

default via 172.16.20.1 dev ens23 proto dhcp src 172.16.20.53 metric 106
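As far as I understand, when several default routes exist the kernel prefers the one with the lowest metric, so traffic that is not bound to an interface should leave via ens1 here. A small sketch to double-check that from the text output above; the function name `lowest_metric_default` is my own, and it only parses text, it does not query the kernel's actual decision.

```shell
#!/bin/sh
# Reads `ip route show default`-style lines on stdin and prints
# "<dev> <gateway> <metric>" for the entry with the lowest metric.
lowest_metric_default() {
    awk '
    {
        dev = ""; gw = ""; metric = 0   # a route without "metric" counts as 0
        for (i = 1; i < NF; i++) {
            if ($i == "via")    gw = $(i + 1)
            if ($i == "dev")    dev = $(i + 1)
            if ($i == "metric") metric = $(i + 1) + 0
        }
        if (!seen || metric < best) { seen = 1; best = metric; bdev = dev; bgw = gw }
    }
    END { if (seen) print bdev, bgw, best }'
}

# Three of the routes from the output above, deliberately out of order
lowest_metric_default <<'EOF'
default via 172.16.10.1 dev ens18 proto dhcp src 172.16.10.53 metric 101
default via 10.35.10.1 dev ens1 proto dhcp src 10.35.10.53 metric 100
default via 172.16.20.1 dev ens23 proto dhcp src 172.16.20.53 metric 106
EOF
```

On the VM itself, the same check would be `ip -4 route show default | lowest_metric_default`.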

Trying to access the internet through any interface:

Code:

for i in 1 {18..23}; do curl --connect-timeout 3 --verbose --interface ens$i 1.1.1.1; done

Code:

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens1'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens18'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens19'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens20'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens21'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens22'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens23'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

My router firewall does allow outgoing traffic from e.g. 10.35.10.53.

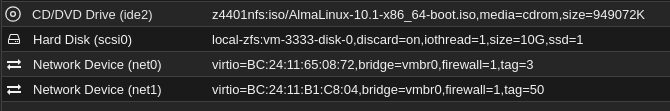

VM config (`qm config 106` on proxmox host):

Code:

bios: ovmf

boot: order=scsi0;net0

cores: 1

cpu: host

efidisk0: local-zfs:vm-106-disk-0,efitype=4m,size=1M

machine: q35

memory: 2048

meta: creation-qemu=10.1.2,ctime=1772731561

name: DeezNatS

net0: virtio=bc:24:11:39:9a:14,bridge=vmbr0,firewall=1,tag=1610

net1: virtio=bc:24:11:e4:2d:46,bridge=vmbr0,firewall=1,tag=816

net2: virtio=bc:24:11:04:fe:1a,bridge=vmbr0,firewall=1,tag=820

net3: virtio=bc:24:11:0e:73:e7,bridge=vmbr0,firewall=1,tag=810

net4: virtio=bc:24:11:2f:6b:d1,bridge=vmbr0,firewall=1,tag=815

net5: virtio=bc:24:11:16:a1:b9,bridge=vmbr0,firewall=1,tag=1620

net6: virtio=bc:24:11:6f:bb:3e,bridge=vmbr0,firewall=1,tag=3510

numa: 1

ostype: l26

scsi0: local-zfs:vm-106-disk-1,iothread=1,size=10G

scsihw: virtio-scsi-single

smbios1: uuid=9ed50fd1-8f33-45fd-a196-9a84d2c56ef6

sockets: 1

vmgenid: 69e95e9b-81d7-41eb-bc30-0527edbf277c

One of the VMs with 2 NICs has the following configuration:

`ip a`:

Code:

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host noprefixroute

valid_lft forever preferred_lft forever

2: ens18: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:cb:50:00 brd ff:ff:ff:ff:ff:ff

altname enp6s18

altname enxbc2411cb5000

inet 192.168.15.107/24 brd 192.168.15.255 scope global dynamic noprefixroute ens18

valid_lft 6079sec preferred_lft 6079sec

inet6 fe80::be24:11ff:fecb:5000/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: ens19: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether bc:24:11:0e:73:32 brd ff:ff:ff:ff:ff:ff

altname enp6s19

altname enxbc24110e7332

inet 192.168.16.101/24 brd 192.168.16.255 scope global dynamic noprefixroute ens19

valid_lft 6079sec preferred_lft 6079sec

inet6 fe80::be24:11ff:fe0e:7332/64 scope link noprefixroute

valid_lft forever preferred_lft forever

On this VM, my pfSense also only shows ens18 (192.168.15.107) as up.

Note that the 7-NIC and the 2-NIC VMs both have an interface on 192.168.15.0/24, which is allowed all outbound traffic on my pfSense.

So in theory, if one can communicate, the other should be able to as well, right?

`ip route show default`:

Code:

default via 192.168.15.1 dev ens18 proto dhcp src 192.168.15.107 metric 100

default via 192.168.16.1 dev ens19 proto dhcp src 192.168.16.101 metric 101

Trying to access the internet through any interface:

Code:

for i in ens18 ens19; do curl --connect-timeout 3 --verbose --interface $i 1.1.1.1; done

Code:

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens18'

* Connected to 1.1.1.1 (1.1.1.1) port 80

* using HTTP/1.x

> GET / HTTP/1.1

> Host: 1.1.1.1

> User-Agent: curl/8.12.1

> Accept: */*

>

* Request completely sent off

< HTTP/1.1 301 Moved Permanently

< Server: cloudflare

< Date: Thu, 05 Mar 2026 18:38:28 GMT

< Content-Type: text/html

< Content-Length: 167

< Connection: keep-alive

< Location: https://1.1.1.1/

< CF-RAY: 9d7b3ce22a2e2657-FRA

<

<html>

<head><title>301 Moved Permanently</title></head>

<body>

<center><h1>301 Moved Permanently</h1></center>

<hr><center>cloudflare</center>

</body>

</html>

* Connection #0 to host 1.1.1.1 left intact

* Trying 1.1.1.1:80...

* socket successfully bound to interface 'ens19'

* Connection timed out after 3002 milliseconds

* closing connection #0

curl: (28) Connection timed out after 3002 milliseconds

VM config (`qm config 105` on proxmox host):

Code:

bios: ovmf

boot: order=scsi0;net0

cores: 1

cpu: host

efidisk0: local-zfs:vm-105-disk-0,efitype=4m,size=1M

machine: q35

memory: 2048

meta: creation-qemu=10.1.2,ctime=1772730688

name: Test2

net0: virtio=BC:24:11:CB:50:00,bridge=vmbr0,firewall=1,tag=815

net1: virtio=BC:24:11:0E:73:32,bridge=vmbr0,firewall=1,tag=816

numa: 1

ostype: l26

scsi0: local-zfs:vm-105-disk-1,iothread=1,size=10G

scsihw: virtio-scsi-single

smbios1: uuid=3a3817fa-2bca-4a52-a17e-e3ecce219b84

sockets: 1

vmgenid: d435e9cb-1321-4cd7-8377-9670b64d52d2

Explanation of subnets and their VLAN IDs:

3510: 10.35.10.0/24

1610: 172.16.10.0/24

1620: 172.16.20.0/24

810: 192.168.10.0/24

815: 192.168.15.0/24

816: 192.168.16.0/24

820: 192.168.20.0/24

Sorry for the lengthy response; I wanted to make sure I included all relevant information.

After gathering all the data above, the next best option for me would be to set up one DNS server VM for every subnet I want to serve, but that would take considerable effort which, I assume, shouldn't be necessary.

It would also be messy to maintain: every server would need a similar configuration, so any DNS change would have to be applied across multiple (at least seven, maybe eleven) VMs.