acpi: 1

agent: 1

autostart: 0

balloon: 0

bios: seabios

boot: c

bootdisk: virtio0

cicustom:

ciuser: ubuntu

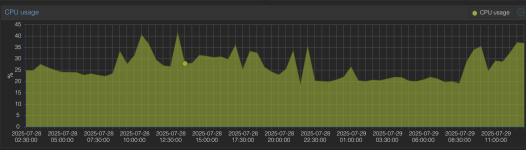

cores: 4

cpu: host

cpuunits: 1000

description: db

hotplug: network,disk,usb

ide2: common:vm-100-cloudinit,media=cdrom

ipconfig0: ip=10.10.10.10/24,gw=10.10.10.1

kvm: 1

memory: 8192

meta: creation-qemu=7.2.10,ctime=1728285531

name: db

net0: virtio=FE:A5:30:7B:B9:3B,bridge=vmbr100

numa: 1

onboot: 1

ostype: l26

protection: 0

scsihw: virtio-scsi-pci

smbios1: uuid=cf9faa60-fd2a-4f13-93e1-d8c58303e731

sockets: 1

sshkeys: ...

tablet: 1

vga: std

virtio0: common:vm-100-disk-0,format=raw,mbps_rd=75,mbps_rd_max=100,mbps_wr=75,mbps_wr_max=100,size=50G

virtio1: common:vm-100-disk-1,format=raw,mbps_rd=75,mbps_rd_max=100,mbps_wr=75,mbps_wr_max=100,size=50G

vmgenid: 34944264-6568-4f10-9d33-27cbed32b0c2