Sometime last year I found that snapshotting a running container with MariaDB would leave the database unable to complete any queries: the process was running but queries just stacked up. It was as if the fs went missing briefly and the database could not recover. It was assumed to be a MariaDB issue. I was told it was to do with the workload or the speed of the underlying disk. None of that was true: it was the snapshot on ZFS that was the issue.

Then later we had an issue with another one. This time I was told it's something to do with a bug where the guest services running inside the VM issue an fs-freeze and then can't fs-thaw. In the end it wasn't too much of an issue for me to just change the snapshot to shutdown mode instead, resulting in a better backup anyway.

Now I have a complex WMS running on an antiquated OS as a VM. It's replicating every 15 mins to 2 other nodes, and at 22.30 and 3.30 it runs a snapshot. It doesn't support guest services.

I noticed that the backup had not been running because the backup fs was not actually online. Having resolved that, I went home, and late at night it started the backup and duly broke the VM.

What it looked like was that the VM and its various processes were still running but it was unable to flush to disk. Eventually, when we were made aware, we could log in but it couldn't see any disks.

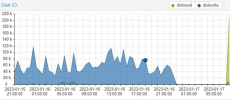

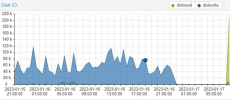

Here you can see the disk access dies on snapshot.

So far the only log I have recovered is the part of the backup log that relates to the VM (below), and obviously nothing from the VM itself, because its log abruptly stops at 22.31.

The guest VM is Ubuntu 12.04, 32-bit PAE, but I don't think any of that is relevant. We were able to log into it but could not run any commands because it couldn't read any binaries. For example, running uptime resulted in ssh freezing.

For now I have taken 101 out of the backup list. What other logs (and where are they) should I look in for clues?

Virtual Environment 7.2-7

Code:

INFO: starting new backup job: vzdump 103 109 110 904 101 102 100 --storage pittolm --compress zstd --notes-template '{{guestname}}' --mode snapshot --prune-backups 'keep-daily=7,keep-monthly=1,keep-weekly=1' --mailto infrastructure@farmfoods.co.uk --quiet 1 --mailnotification failure

INFO: skip external VMs: 100, 102, 109, 110, 904

INFO: Starting Backup of VM 101 (qemu)

INFO: Backup started at 2023-01-16 22:30:05

INFO: status = running

INFO: VM Name: wmsvtol3

INFO: include disk 'scsi0' 'local-zfs:vm-101-disk-2' 25G

INFO: include disk 'scsi1' 'local-zfs:vm-101-disk-0' 4G

INFO: include disk 'scsi2' 'local-zfs:vm-101-disk-1' 25G

INFO: backup mode: snapshot

INFO: ionice priority: 7

INFO: snapshots found (not included into backup)

INFO: creating vzdump archive '/mnt/pve/pittolm/dump/vzdump-qemu-101-2023_01_16-22_30_05.vma.zst'

INFO: started backup task '9cf5ffe1-5f29-4327-b0ae-c50ed7f8f4de'

INFO: resuming VM again

INFO: 1% (989.6 MiB of 54.0 GiB) in 3s, read: 329.9 MiB/s, write: 261.7 MiB/s

INFO: 3% (1.8 GiB of 54.0 GiB) in 6s, read: 269.5 MiB/s, write: 252.9 MiB/s

INFO: 4% (2.5 GiB of 54.0 GiB) in 9s, read: 269.5 MiB/s, write: 254.9 MiB/s

INFO: 6% (3.3 GiB of 54.0 GiB) in 12s, read: 270.7 MiB/s, write: 256.4 MiB/s

INFO: 9% (5.3 GiB of 54.0 GiB) in 15s, read: 681.5 MiB/s, write: 232.4 MiB/s

INFO: 11% (6.2 GiB of 54.0 GiB) in 18s, read: 299.7 MiB/s, write: 151.8 MiB/s

INFO: 14% (7.8 GiB of 54.0 GiB) in 21s, read: 546.2 MiB/s, write: 200.7 MiB/s

INFO: 15% (8.1 GiB of 54.0 GiB) in 2m 41s, read: 2.2 MiB/s, write: 2.1 MiB/s

INFO: 16% (8.7 GiB of 54.0 GiB) in 3m 21s, read: 13.9 MiB/s, write: 12.4 MiB/s

INFO: 17% (9.2 GiB of 54.0 GiB) in 3m 46s, read: 22.3 MiB/s, write: 18.3 MiB/s

INFO: 18% (9.8 GiB of 54.0 GiB) in 3m 52s, read: 97.9 MiB/s, write: 79.9 MiB/s

INFO: 19% (10.3 GiB of 54.0 GiB) in 3m 56s, read: 135.0 MiB/s, write: 82.7 MiB/s

INFO: 20% (10.8 GiB of 54.0 GiB) in 4m 1s, read: 110.9 MiB/s, write: 89.3 MiB/s

INFO: 21% (11.5 GiB of 54.0 GiB) in 4m 5s, read: 161.3 MiB/s, write: 143.8 MiB/s

INFO: 22% (11.9 GiB of 54.0 GiB) in 4m 10s, read: 85.5 MiB/s, write: 82.3 MiB/s

INFO: 23% (12.6 GiB of 54.0 GiB) in 4m 13s, read: 228.4 MiB/s, write: 149.3 MiB/s

INFO: 24% (13.0 GiB of 54.0 GiB) in 4m 21s, read: 53.9 MiB/s, write: 52.4 MiB/s

INFO: 25% (13.5 GiB of 54.0 GiB) in 4m 25s, read: 136.8 MiB/s, write: 127.6 MiB/s

INFO: 26% (14.1 GiB of 54.0 GiB) in 4m 29s, read: 158.6 MiB/s, write: 148.5 MiB/s

INFO: 27% (14.6 GiB of 54.0 GiB) in 4m 32s, read: 160.7 MiB/s, write: 154.9 MiB/s

...

INFO: 99% (53.9 GiB of 54.0 GiB) in 16m 6s, read: 171.6 MiB/s, write: 75.8 MiB/s

INFO: 100% (54.0 GiB of 54.0 GiB) in 16m 7s, read: 87.2 MiB/s, write: 55.1 MiB/s

INFO: backup is sparse: 19.71 GiB (36%) total zero data

INFO: transferred 54.00 GiB in 967 seconds (57.2 MiB/s)

INFO: archive file size: 9.24GB

INFO: adding notes to backup

INFO: prune older backups with retention: keep-daily=7, keep-monthly=1, keep-weekly=1

INFO: removing backup 'pittolm:backup/vzdump-qemu-101-2022_12_14-22_30_01.vma.zst'

INFO: pruned 1 backup(s) not covered by keep-retention policy

INFO: Finished Backup of VM 101 (00:17:36)

INFO: Backup finished at 2023-01-16 22:47:41

Inside the VM:

Code:

Jan 16 22:28:01 wmsvtol3 sshd[31817]: Did not receive identification string from ::ffff:192.168.6.98

Jan 16 22:29:01 wmsvtol3 sshd[31817]: Did not receive identification string from ::ffff:192.168.6.98 <-- last entry is just before snapshot, i.e. not later on in that process.

..

Jan 17 07:28:08 wmsvtol3 syslogd 1.4.1: restar
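To make the stall in the backup log above easier to spot, here is a rough Python sketch that parses the vzdump progress lines and reports the longest pause between two consecutive ones. The function names (`elapsed_seconds`, `progress_points`, `longest_gap`) are made up for illustration, and the sample is only the four lines around the slowdown. Feeding it the full excerpt would mis-report the elided `...` section as the longest gap.

```python
import re

# Matches lines such as:
# INFO: 15% (8.1 GiB of 54.0 GiB) in 2m 41s, read: 2.2 MiB/s, write: 2.1 MiB/s
PROGRESS = re.compile(r"INFO:\s+(\d+)% \(.*?\) in ((?:\d+[hms]\s*)+),")
UNIT_SECONDS = {"h": 3600, "m": 60, "s": 1}


def elapsed_seconds(text):
    """Convert an elapsed string like '2m 41s' into seconds."""
    return sum(int(n) * UNIT_SECONDS[u] for n, u in re.findall(r"(\d+)([hms])", text))


def progress_points(log_text):
    """Return (percent, elapsed_seconds) for every progress line found."""
    points = []
    for line in log_text.splitlines():
        m = PROGRESS.search(line)
        if m:
            points.append((int(m.group(1)), elapsed_seconds(m.group(2))))
    return points


def longest_gap(points):
    """Return (gap_seconds, point_before, point_after) for the largest pause."""
    gaps = [(b[1] - a[1], a, b) for a, b in zip(points, points[1:])]
    return max(gaps, key=lambda g: g[0])


# Lines copied from the backup log above, around the slowdown only.
sample = """\
INFO: 11% (6.2 GiB of 54.0 GiB) in 18s, read: 299.7 MiB/s, write: 151.8 MiB/s
INFO: 14% (7.8 GiB of 54.0 GiB) in 21s, read: 546.2 MiB/s, write: 200.7 MiB/s
INFO: 15% (8.1 GiB of 54.0 GiB) in 2m 41s, read: 2.2 MiB/s, write: 2.1 MiB/s
INFO: 16% (8.7 GiB of 54.0 GiB) in 3m 21s, read: 13.9 MiB/s, write: 12.4 MiB/s
"""

gap, before, after = longest_gap(progress_points(sample))
print(f"longest gap: {gap}s between {before[0]}% and {after[0]}%")
```

On these lines it reports a 140 second pause between 14% and 15%, i.e. roughly 22:30:26 to 22:32:46 given the 22:30:05 start. That window may line up with the point where the guest lost disk access, but that correlation is only my guess.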
