Hey folks,

I've recently updated my cluster to Proxmox 9. Most things went without issues, but I'm stuck on one problem.

In one machine I have an LSI 9207-8i that I pass through to a VM. On Proxmox 8 with a 6.8 kernel this worked fine.

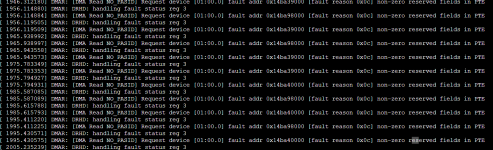

After upgrading to Proxmox 9 with kernel 6.14.8-2-pve, the passthrough eventually fails. The issue seems to be somewhere in the handoff of the controller to the VM (see the attached screenshot for the errors).

I've done a bit of digging, and there have been recent changes to the mpt3sas driver in the kernel (https://github.com/torvalds/linux/commits/master/drivers/scsi/mpt3sas), including two commits that could potentially be related:

- https://github.com/torvalds/linux/commit/3f5eb062e8aa335643181c480e6c590c6cedfd22

- https://github.com/torvalds/linux/commit/5612d6d51ed2634a033c95de2edec7449409cbb9

Long story short, I'm on Proxmox 9 but have pinned the kernel to 6.8.12-13-pve, and things are working fine. That means running everything in an unsupported configuration, though, which I don't like.

Has anybody else run into this issue, or does anyone have pointers on where I should dig further?
