Hello everyone,

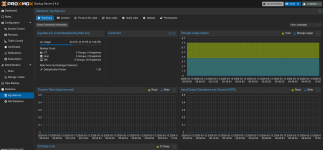

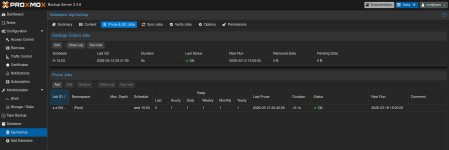

I use Proxmox and Proxmox Backup Server (PBS). I have three ZFS storage targets: a single ZFS-formatted 8TB archive HDD that I use with Nextcloud, a RAID1 ZFS 8TB HDD pool that I use with PBS for backups, and a RAID6 ZFS 8TB HDD pool used as a secondary PBS backup target. All three show as 97% full. Why is this? When I log in to either PBS instance, each one reports only around 56% usage.