Hi,

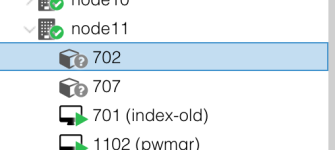

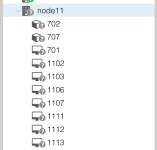

On my Proxmox host I can no longer run pct list: the command hangs indefinitely and produces no output:

Code:

root@node11:~# pct list

(no return to console...)

If I run it under strace, I see an endless loop of select() timeouts, but I cannot work out which program is causing it:

Code:

root@node11:~# strace pct list

execve("/usr/sbin/pct", ["pct", "list"], [/* 19 vars */]) = 0

brk(NULL) = 0x562d5ca9d000

access("/etc/ld.so.nohwcap", F_OK) = -1 ENOENT (No such file or directory)

mmap(NULL, 12288, PROT_READ|PROT_WRITE, MAP_PRIVATE|MAP_ANONYMOUS, -1, 0) = 0x7ff2ffe02000

access("/etc/ld.so.preload", R_OK) = -1 ENOENT (No such file or directory)

open("/etc/ld.so.cache", O_RDONLY|O_CLOEXEC) = 3

fstat(3, {st_mode=S_IFREG|0644, st_size=39906, ...}) = 0

mmap(NULL, 39906, PROT_READ, MAP_PRIVATE, 3, 0) = 0x7ff2ffdf8000

close(3) = 0

[...]

close(5) = 0

close(8) = 0

close(11) = 0

getpid() = 4241

close(6) = 0

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

select(16, [7 9], NULL, NULL, {tv_sec=1, tv_usec=0}) = 0 (Timeout)

[...]

There are no errors in the syslog, but this node is shown as "unknown" in the Proxmox GUI.

This is my pveversion output:

Code:

root@node11:/# pveversion -v

proxmox-ve: 5.2-2 (running kernel: 4.13.4-1-pve)

pve-manager: 5.2-9 (running version: 5.2-9/4b30e8f9)

pve-kernel-4.15: 5.2-10

pve-kernel-4.15.18-7-pve: 4.15.18-27

pve-kernel-4.15.18-1-pve: 4.15.18-19

pve-kernel-4.13.13-5-pve: 4.13.13-38

pve-kernel-4.13.13-3-pve: 4.13.13-34

pve-kernel-4.13.13-2-pve: 4.13.13-33

pve-kernel-4.13.4-1-pve: 4.13.4-26

corosync: 2.4.2-pve5

criu: 2.11.1-1~bpo90

glusterfs-client: 3.8.8-1

ksm-control-daemon: not correctly installed

libjs-extjs: 6.0.1-2

libpve-access-control: 5.0-8

libpve-apiclient-perl: 2.0-5

libpve-common-perl: 5.0-40

libpve-guest-common-perl: 2.0-18

libpve-http-server-perl: 2.0-11

libpve-storage-perl: 5.0-30

libqb0: 1.0.1-1

lvm2: 2.02.168-pve6

lxc-pve: 3.0.2+pve1-2

lxcfs: 3.0.2-2

novnc-pve: 1.0.0-2

proxmox-widget-toolkit: 1.0-20

pve-cluster: 5.0-30

pve-container: 2.0-28

pve-docs: 5.2-8

pve-firewall: 3.0-14

pve-firmware: 2.0-5

pve-ha-manager: 2.0-5

pve-i18n: 1.0-6

pve-libspice-server1: 0.12.8-3

pve-qemu-kvm: 2.11.2-1

pve-xtermjs: 1.0-5

qemu-server: 5.0-36

smartmontools: 6.5+svn4324-1

spiceterm: 3.0-5

vncterm: 1.5-3

Could you help me, please?
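
For reference, one way to narrow down the select() loop in the strace output above would be to check what file descriptors 7 and 9 actually point to while pct list is hanging. The following is only a rough sketch: `fd_targets` is a hypothetical helper name, it assumes a Linux /proc filesystem, and the `pgrep` pattern in the usage comment is a guess at how the hung process shows up in the process list.

```shell
# Hypothetical helper: print where the given file descriptors of a process point.
# Usage: fd_targets PID FD [FD...]
fd_targets() {
    pid="$1"
    shift
    for fd in "$@"; do
        # /proc/PID/fd/N is a symlink to the pipe, socket, or file behind the descriptor;
        # print '?' if it cannot be read (process gone, fd closed, no permission).
        printf '%s -> %s\n' "$fd" "$(readlink "/proc/$pid/fd/$fd" 2>/dev/null || echo '?')"
    done
}

# Possible usage while the command hangs in another terminal (pattern is an assumption):
#   fd_targets "$(pgrep -o -f 'pct list')" 7 9
```

If both descriptors turn out to be pipes, running `strace -f pct list` (which also follows forked child processes) might show which child command never returns.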