Hi everyone,

I have a question regarding the specific behavior of Proxmox VE's High Availability (HA) and Watchdog mechanism.

Our production environment consists of a PVE cluster with several nodes distributed across different server rooms in the office building. Recently, due to major internal network maintenance, we knew that the backbone connection between these rooms would be disconnected for about 30 minutes.

To prepare for this, my primary goal was to ensure that even if the network is cut and nodes lose their cluster quorum, the currently running VMs would stay powered on (even if they are temporarily unreachable from the outside). Most importantly, I wanted to prevent the PVE nodes from being force-rebooted by the fencing mechanism, as a hard reboot is quite stressful for the hardware and the critical services running on it.

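My strategy was as follows: before the scheduled maintenance, I manually changed the HA state of every managed VM from started to ignored. My reasoning was that once a resource is set to ignored, the HA Manager should stop supervising these VMs, and therefore there should be no reason for the watchdog to trigger a fencing operation.

However, once the network actually went down, the isolated node still performed a hard reboot after about a minute. The system log revealed the culprit: "kernel: watchdog: watchdog0: watchdog did not stop!". It is clear that the watchdog triggered because the node lost quorum, but I am confused as to why it remained active even though I had set all VM HA states to "ignored".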
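For reference, here is a minimal sketch of how such a state change could be scripted. It assumes the `ha-manager set <sid> --state <state>` CLI form. The helper names and the service ID list are placeholders for illustration, not the actual production setup, and the same change can also be made from the GUI.

```python
import shlex
import subprocess


def build_ignore_commands(service_ids, state="ignored"):
    """Return one `ha-manager set` argv list per HA service ID."""
    return [["ha-manager", "set", sid, "--state", state] for sid in service_ids]


def apply(commands, dry_run=True):
    """Print each command; only execute it when dry_run is False."""
    for argv in commands:
        print(shlex.join(argv))
        if not dry_run:
            subprocess.run(argv, check=True)


# Placeholder service ID (hypothetical list, adjust to the real HA resources).
commands = build_ignore_commands(["vm:103"])
apply(commands)  # dry run: prints the command without executing anything
```

The dry-run default is deliberate: it lets the command list be reviewed before anything is changed on a production cluster.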

---

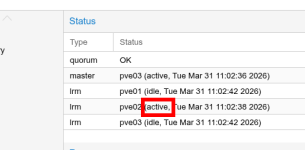

To investigate the root cause, I set up a simulation in my lab with three nodes (pve01, pve02, pve03) and reproduced the issue using an HA-managed VM (ID: 103) running on pve02:

1. Changing the HA State: I changed the state for VM 103 from started to ignored. The VM continued to run fine on pve02. In my understanding, since VM 103 was no longer under HA management, pve02 should not have been subject to the HA watchdog's fencing tests.

2. Simulating Network Failure: I disabled the network interface on pve02 to simulate a loss of cluster quorum and isolate the node.

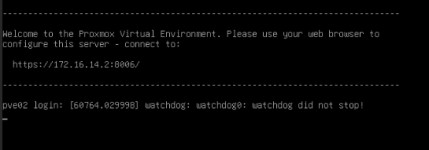

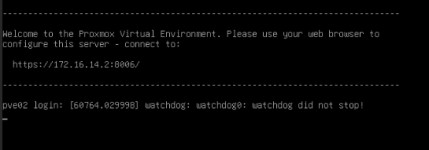

3. Watchdog Triggered Reboot: Within a minute of the disconnection, the pve02 console started flashing: "watchdog: watchdog0: watchdog did not stop!". Almost immediately after, the entire physical host rebooted. There was no graceful shutdown for the VM; it was a hard power-off.
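To confirm after the reboot that the watchdog message was actually logged, something like the following sketch could be used. It assumes the journal is persistent, so that `journalctl -k -b -1` can read kernel messages from the previous boot. The function names are made up for this example, and the first sample line is invented; only the watchdog message itself comes from the log above.

```python
import subprocess

WATCHDOG_MARKER = "watchdog did not stop!"


def find_watchdog_lines(log_lines):
    """Return the log lines that contain the watchdog message."""
    return [line for line in log_lines if WATCHDOG_MARKER in line]


def previous_boot_kernel_log():
    """Read kernel messages from the previous boot (needs a persistent journal)."""
    result = subprocess.run(
        ["journalctl", "-k", "-b", "-1", "--no-pager"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.splitlines()


# Sample input for illustration; on a real node, pass previous_boot_kernel_log().
sample = [
    "kernel: some unrelated message",
    "kernel: watchdog: watchdog0: watchdog did not stop!",
]
hits = find_watchdog_lines(sample)
print(hits)
```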

My questions are:

- Why does the watchdog still trigger fencing even if all VMs are set to ignored?

- Is there any way to prevent a node from rebooting during a temporary network outage without completely deleting the HA configuration?