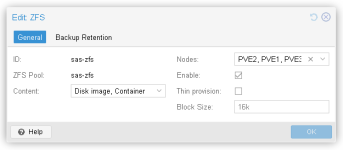

I have a 3-node cluster with an SSD ZFS pool whose used space keeps increasing.

Each server has 24 SSD drives in a ZFS raid.

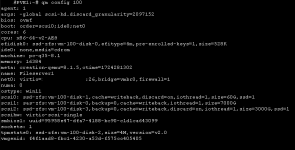

The 'autotrim' setting is set to on for each server node.

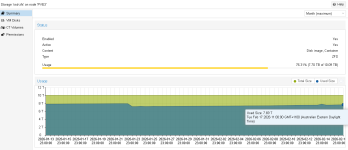

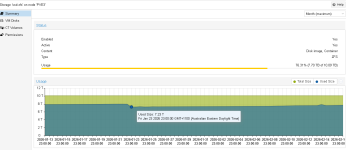

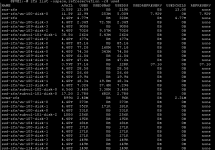

The two attachments show the space being slowly used up on the server node.

All disks on VMs on all server nodes have 'discard' set to 'on'.

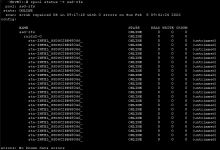

I also ran the command 'zpool status -t ssd-zfs' and got the result shown in the 'PVE3 SSD-ZFS Status' image. It reports 'untrimmed'.

It is the same on PVE2, but PVE1 reports 'trim unsupported' instead.

Can anyone help me understand why this is happening?
