Hello,

I have a three-node cluster with two rings.

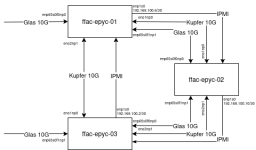

1. One full-mesh ring between the three nodes, similar to the configuration shown here:

https://pve.proxmox.com/wiki/Full_Mesh_Network_for_Ceph_Server#Example

2. One "uplink" ring of the "e0np0" interfaces, as shown here (diagram derived from the wiki topology, with the additional ring):

```
     (WAN) │        ┌───────────┐        │ (WAN)
           │        │   Node2   │        │
           │        ├─────┬─────┤        │
           │      ┌─┤e0np0│e1np1├─┐      │
           │      │ ├─────┼─────┤ │      │
           │      │ │eno21│eno10│ │      │
           │      │ └──┬──┴──┬──┘ │      │
           │      │    │     │    │      │
┌───────┬─────┐   │    │     │    │   ┌─────┬───────┐
│       │e0np0│   │    │     │    │   │e0np0│       │
│       ├─────┤   │    │     │    │   ├─────┤       │
│       │e1np1├───┘    │     │    └───┤e1np1│       │
│       ├─────┤        │     │        ├─────┤       │
│       │eno10├────────┘     └────────┤eno21│       │
│ Node1 ├─────┤                       ├─────┤ Node3 │
│       │eno21├───────────────────────┤eno10│       │
└───────┴─────┘                       └─────┴───────┘
```

Full topology (ignore the IPMI network here).

Ceph works well with this setup, just as documented here:

https://pve.proxmox.com/wiki/Full_Mesh_Network_for_Ceph_Server#Example

The open question is how to manage the IP addresses of the VMs running on these hosts:

1. node1 and node3 have direct access to the uplink, so their VMs can simply be attached to the local vmbr0.

2. node2 does not have direct access to the uplink, so its traffic must go via node3 (epyc03) and/or node1 (epyc01). The VMs on this host should still be able to use public IPs from the uplink broadcast domain.

How we currently solve this:

1. node3 puts e0np0 and e1np1 into vmbr0

2. node2 puts e1np1 into vmbr0

3. all VMs are on the respective vmbr0
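
For illustration, the bridge setup described above would look roughly like this in `/etc/network/interfaces`. This is only a sketch, not copied from the actual hosts: addresses and gateways are left out, the `bridge-stp`/`bridge-fd` values are the usual Proxmox defaults and are assumed here, and node1 is omitted because it is not part of the steps listed above.

```
# node3 (sketch): bridge the uplink port and the link towards node2
auto vmbr0
iface vmbr0 inet manual
    bridge-ports e0np0 e1np1
    bridge-stp off
    bridge-fd 0

# node2 (sketch): only the link towards node3 is in the bridge
auto vmbr0
iface vmbr0 inet manual
    bridge-ports e1np1
    bridge-stp off
    bridge-fd 0
```

With this layout, node3 acts as a plain layer-2 bridge between node2 and the uplink, so VMs on node2 sit in the same broadcast domain as the uplink.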

Alternative option:

- Just buy a switch and configure a proper uplink for node2 as well.

What options are possible for such a setup? I would prefer to use the built-in SDN EVPN functionality, but I am also interested in other options and ideas for this problem.