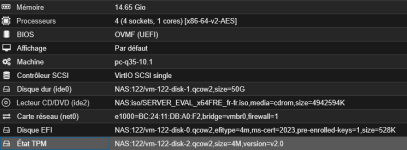

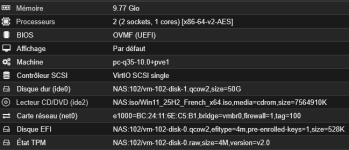

Hello, I have several Windows Server VMs. The older ones, with the TPM in .raw format, have no problems. Since the update, I wanted to try VMs with the TPM in .qcow2 format so that I can take snapshots.

Unfortunately, after a while, these VMs fail when restarting and can no longer boot.

When I turn on the VM, the boot screen with the Proxmox logo appears, followed by ‘Preparing for automatic recovery,’ and then the VM shuts down. It will not start at all. I suspect .qcow2 is the cause, since the .raw VMs have no problems and the two VMs using .qcow2 both failed this way.

Any ideas? Thank you.
