Thanks for the reply. The ESXi host is still there, but the VM is no longer in the list of VMs I can import. Not sure why?

It does not remove the ESXi source, so just run the copy again. You should also be able to change the NIC to VirtIO. I also had to edit the network config within the VM and change the network device from ens160 to ens18.
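For the interface rename mentioned above (ens160 to ens18), a minimal sketch could look like the following. It assumes a Linux guest whose network config is a plain text file such as /etc/network/interfaces; other distributions keep this elsewhere (netplan, NetworkManager), so the file path is an assumption, and `rename_iface` is just an illustrative helper name.

```shell
#!/usr/bin/env bash
# Sketch: replace an interface name in a text-based network config file.
# Keeps a backup copy next to the original before editing in place.
rename_iface() {
  local old="$1" new="$2" file="$3"
  cp -p "$file" "${file}.bak"
  # \b (GNU sed) keeps the match to whole words, so "ens1600" is left alone.
  sed -i "s/\b${old}\b/${new}/g" "$file"
}

# Example (inside the migrated VM, as root):
#   rename_iface ens160 ens18 /etc/network/interfaces
```

After the change, the network service needs a restart (or the VM a reboot) before the new name is picked up.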

New Import Wizard Available for Migrating VMware ESXi Based Virtual Machines

- Thread starter t.lamprecht

I'm doing some testing, migrating VMs from ESXi 8.0 Update 3 to PVE 8.3.3. It failed on my first test VM (migration worked, but no boot device), but worked on my second because I'd only installed VirtIO on the second VM. I deleted the migrated copy of my first VM from PVE, but now I can't find it listed as a migration source. The VM still exists on the VMware environment. Is a VM removed from the list once it's migrated? Or have I done something wrong? How can I migrate the VM again now that I've installed VirtIO? Thanks!

Hi, if the VM is no longer displayed, you need to rescan the datastore or reconnect to the ESXi.

The boot device is normal Windows behavior.

It is best to start the Windows VMs the first time with an IDE/SATA connected OS disk. Connect further disks via VirtIO SCSI or, if it is a single disk VM, a small dummy disk as SCSI. Then install the Virtio drivers and then shut down the VM and connect the OS disk as SCSI. (Do not forget to select the boot device)
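The disk juggling described above could be scripted roughly like this. It is only a sketch: the helper prints the `qm set` commands instead of running them, so they can be reviewed first. The VMID, volume ID and storage name in the example are placeholders, and it assumes the importer attached the OS disk as scsi0.

```shell
#!/usr/bin/env bash
# Sketch: print the qm commands for the two-phase Windows disk switch.
# Arguments: VMID, volume ID of the OS disk, storage for the dummy disk.
windows_disk_plan() {
  local vmid="$1" volid="$2" storage="$3"
  cat <<EOF
# Phase 1: boot from SATA and add a 1 GiB dummy disk on VirtIO SCSI
qm set ${vmid} --delete scsi0
qm set ${vmid} --sata0 ${volid}
qm set ${vmid} --scsihw virtio-scsi-pci --scsi1 ${storage}:1
qm set ${vmid} --boot order=sata0
# ... start the VM, install the VirtIO drivers, shut it down ...
# Phase 2: reattach the OS disk as SCSI and select it as boot device
qm set ${vmid} --delete sata0
qm set ${vmid} --scsi0 ${volid}
qm set ${vmid} --boot order=scsi0
EOF
}

# Example:
#   windows_disk_plan 171 local:171/vm-171-disk-0.qcow2 local
```

Deleting a disk entry this way should only detach the volume (it shows up as an unused disk), but check the VM's hardware tab after each phase before going on.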

A disable/reenable of the ESXi host as a source fixed it.

I can't explain that. Mine are still there and I can import multiple times.

Disabling/enabling the source worked, thanks! Thanks for the migration tips too.

Sounds interesting. What I have been using for manual imports is sshfs to mount the filesystem over ssh from proxmox to a vmware server and works surprisingly well. You might want to give that a try as it avoids the step of having to move to nfs and does give proxmox rw access to the vmfs filesystem (local or san) on vmware.

Right, I haven't yet considered sshfs although I heard of it before. I tend to overcomplicate things in the hope of achieving better efficiency of transfer or something.
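For anyone wanting to try the sshfs route, a rough sketch follows. It assumes SSH is enabled on the ESXi host, sshfs is installed on the PVE node, and the datastore sits under /vmfs/volumes/ on the ESXi side. Host name, datastore name, mount point and VMID are placeholders. By default the helpers only print what they would run; set DRY_RUN=0 to execute.

```shell
#!/usr/bin/env bash
# Sketch: mount an ESXi datastore over sshfs and import a disk manually.
# DRY_RUN=1 (the default) only prints the commands.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Mount /vmfs/volumes/<datastore> from the ESXi host on a local directory.
mount_esxi_datastore() {
  local esxi_host="$1" datastore="$2" mountpoint="$3"
  run mkdir -p "$mountpoint"
  run sshfs "root@${esxi_host}:/vmfs/volumes/${datastore}" "$mountpoint"
}

# Import a VMDK descriptor from the mounted datastore into an existing VM.
import_vmdk() {
  local vmid="$1" vmdk="$2" target_storage="$3"
  run qm disk import "$vmid" "$vmdk" "$target_storage"
}

# Example:
#   mount_esxi_datastore esxi.example.com datastore1 /mnt/esxi
#   import_vmdk 171 /mnt/esxi/deb12/deb12.vmdk local
```

The imported disk ends up as an unused disk on the VM and still has to be attached. Unmount with `fusermount -u` on the mount point when done.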

Hi,

I’m new to Proxmox, but have worked with vSphere for years. I’m experiencing the following issue, was told this has been resolved, but still see problems.

I’m using the ESXi storage type and attempting to import VMware VMs into Proxmox. When importing from an ESXi 7 (7.0.3, 24411414) host there are no issues. However, when trying to import from an ESXi 8 (8.0.3, 24414501) host I get the following error message when selecting a VM and clicking Import.

Storage ‘donovan’ is not activated (500)

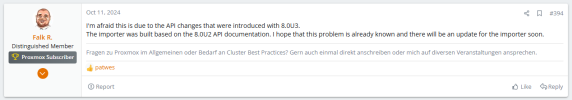

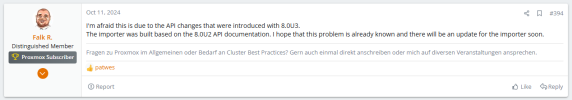

I suspect this is due to a change in the ESXi 8 API, which seems to be confirmed by Falk R. in October 2023 (https://forum.proxmox.com/threads/n...sxi-based-virtual-machines.144023/post-710869). Based on the most recent post in this thread it does not seem this issue has been resolved.

I’ve attached screenshots which may be helpful.

My ESXi host version is: 8.0.3, 24414501

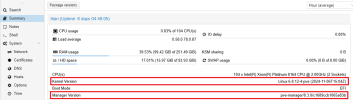

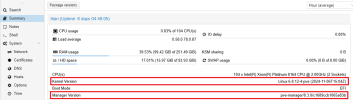

My Proxmox host version is: 8.3.0 (attached summary screenshot)

Can anyone confirm if this issue has been resolved by Proxmox?

Or offer any assistance or guidance?

I appreciate your help, as we begin our Proxmox journey.

Hello everyone,

I'm currently facing the same problem!

ESXi is on the latest version (VMware ESXi, 8.0.3, 24414501) and I also get the error 500 "Storage is not activated".

Can we already predict when there will be a solution to this?

Thanks!

Hi Jad238, BlackBird669!

I'm just testing importing from the latest ESXI (8.0.3, 24414501) and things seem to work for me. Is it possible that something has changed on the ESXI-side? I'm mainly thinking about credentials and possibly certificates?

Have you already tried taking the storage out of Datacenter->Storage and readding it (just to rule out the above)? Would you mind sharing the esxi-section of your PVE host's /etc/storage.cfg?

It might be helpful to monitor the logs on the ESXI-side (eg /var/log/hostd.log) during attachment of the ESXI-storage from PVE to get additional information about what might go wrong!

Best regards,

Daniel

Hi Daniel,

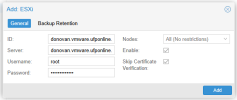

In my circumstance all the ESXi 7.x and 8.x hosts are part of the same vCenter, and are using the default CA associated with vSphere. There are no custom CAs or certificates on the hosts, or vCenter. We closely monitor and track vSphere changes, and there has not been a configuration change in months.

We have tried to remove and re-add the ESXi storage on Proxmox to the vSphere 8.x hosts multiple times with the same result as mentioned in the original post.

I’ve attached a screenshot of the current ESXi storage settings. I attempted to get the contents of the Proxmox /etc/storage.cfg file, but the file doesn’t seem to exist. I attached a screenshot of the console output when trying to cat /etc/storage.cfg.

It’s probably worth noting that I can easily import guests from ESXi 7.x hosts into the same Proxmox host as shown in the screenshots (TITAN).

In the meantime I will get ESXi log details and post here. I should have that tomorrow.

Hi Daniel,

I’ve attached an excerpt from the ESXi 8.x host /var/log/hostd.log file as it relates to accessing the ESXi storage from the Proxmox GUI. This log captures activity on the ESXi 8.x host while taking the following actions through the Proxmox GUI.

- Clicking on the ESXi storage.

- Clicking a powered off VM and clicking Import

- Visually seeing the 'storage 'donovan.vmware.ufponline.net' is not activated (500)' error.

- Clicking OK to close the error

The only error I see in the ESXi 8.x /var/log/hostd.log file is at exactly the moment I click Import in the Proxmox GUI (around line 739).

2025-03-18T01:43:22.317Z In(166) Hostd[10946903]: [Originator@6876 sub=AdapterServer opID=req=000000fa39776250-0e5b sid=520fdb7f user=root] AdapterServer caught exception; <<520fdb7f-6b87-1646-b1af-d38ad4987a93, <TCP '127.0.0.1 : 8309'>, <TCP '127.0.0.1 : 31029'>>, 675c3afe-258c04e6-9820-506b4b09346c-datastorebrowser, vim.host.DatastoreBrowser.search, (null), (null)>, N3Vim5Fault20InvalidDatastorePath9ExceptionE(Fault cause: vim.fault.InvalidDatastorePath

2025-03-18T01:43:22.320Z In(166) Hostd[10946852]: --> )

2025-03-18T01:43:22.320Z In(166) Hostd[10946852]: --> [context]zKq7AVICAgAAACWJdAEMaG9zdGQAAOPJR2xpYnZtYWNvcmUuc28AASR5XWhvc3RkAAHVZWQB1bhkATTDZIKzUlkBbGlidmltLXR5cGVzLnNvAAG1lWIAHtssAOD/LAA7UFIDUngAbGlicHRocmVhZC5zby4wAAQ/Ug9saWJjLnNvLjYA[/context]

2025-03-18T01:43:22.321Z Er(163) Hostd[10946902]: [Originator@6876 sub=HTTP server /folder req=000000fa39776250] DatastoreBrowser.search threw a InvalidDatastorePath fault
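To narrow a long hostd.log down to one failing request like the one above, something along these lines may help. It is a sketch meant to be run against a copy of the log (for example on the PVE node). The function names are made up for illustration, and the request ID is the value after `req=` in the log lines.

```shell
#!/usr/bin/env bash
# Sketch: filter a hostd.log copy by request ID and by error markers.

# Print every line that mentions the given request ID.
# Lines with "opID=req=<id>-xxxx" match as well.
hostd_request_trace() {
  local logfile="$1" req_id="$2"
  grep -F "req=${req_id}" "$logfile"
}

# Print lines logged at error level ("Er(...)") or mentioning a fault
# or an exception.
hostd_faults() {
  local logfile="$1"
  grep -E 'Er\([0-9]+\)|[Ff]ault|[Ee]xception' "$logfile"
}

# Example:
#   hostd_request_trace ./hostd.log 000000fa39776250
#   hostd_faults ./hostd.log
```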

Hi everyone,

Sorry for the late reply. I got rid of the problem by rebooting the ESXi host and running the importer immediately after it came back up, which worked without any problems. But after ESXi had been running for a day or two, Proxmox couldn't connect again, so I had to restart the server again.

Why it all worked like that -> no idea.

Unfortunately, I also have to admit that we didn't switch to Proxmox, but to HyperV. We only had to put an older Linux VM on a separate Proxmox server.

I also had problems with the iSCSI multipath and the migration of Windows VMs in general with Proxmox.

But since we use Windows 85% of the time, that was the route we took.

But that's probably more my fault... iSCSI could be a problem with the older server hardware we tested on so as not to disrupt production...

I'll be setting up an additional server in the next 1-2 years, which I'll use to evaluate Proxmox again.

HyperV is relatively straightforward, but I'm not entirely happy with the failover yet...

So far, I've only been working with ESXi and was unfortunately forced to switch quickly due to an incorrectly documented end-of-support date from VMware... HyperV simply caused the least problems.

Still have a lot to learn here...

Thanks

Best Regards

Hi Jad238,

sorry for the late reply and thanks a lot for the logs.

It looks as if the import was already running for your machine, my local esxi logs look quite similar -- this here is the final part before my vmdk test machine is created successfully on the PVE-side:

Code:

2025-03-21T13:38:44.852Z In(166) Hostd[132080]: [Originator@6876 sub=HTTP server /folder req=0000001d1473fe40 user=root] Got HTTP GET request for /folder//deb12/deb12-flat.vmdk?dcPath=ha-datacenter&dsName=local_disk_01

2025-03-21T13:38:44.853Z In(166) Hostd[132087]: [Originator@6876 sub=Vimsvc.TaskManager opID=req=0000001d1473fe40-05a7 sid=52d53462 user=root] Task Created : haTask--vim.SearchIndex.findByInventoryPath-1612

2025-03-21T13:38:44.853Z In(166) Hostd[132069]: [Originator@6876 sub=Vimsvc.TaskManager opID=req=0000001d1473fe40-05a7 sid=52d53462 user=root] Task Completed : haTask--vim.SearchIndex.findByInventoryPath-1612 Status success

2025-03-21T13:38:44.853Z In(166) Hostd[132075]: [Originator@6876 sub=DatastoreBrowser opID=req=0000001d1473fe40-05a9 sid=52d53462 user=root] DatastoreBrowserImpl::SearchInt MoId:67d7e787-a0153c7b-7edb-bc2411f1220a-datastorebrowser dsPath:[local_disk_01]/deb12 recursive:false

2025-03-21T13:38:44.902Z In(166) Hostd[132066]: [Originator@6876 sub=HTTP server /folder req=0000001d1473fe40] Sent OK response for GET /folder//deb12/deb12-flat.vmdk?dcPath=ha-datacenter&dsName=local_disk_01

2025-03-21T13:38:44.902Z In(166) Hostd[132066]: [Originator@6876 sub=Vimsvc.ha-eventmgr] Event 639 : File download from path '[local_disk_01]/deb12/deb12-flat.vmdk' was initiated from 'proxmox-simple-http-client/0.1@10.10.10.1' and completed with status ''

Before the `AdapterServer caught exception;` of your import things look very similar:

Code:

2025-03-18T01:43:22.316Z In(166) Hostd[10946888]: [Originator@6876 sub=HTTP server /folder req=000000fa39776250 user=root] Got HTTP HEAD request for /folder//var/run/crx/infra/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233.vmx?dcPath=ha-datacenter&dsName=

2025-03-18T01:43:22.316Z In(166) Hostd[10946893]: [Originator@6876 sub=Vimsvc.TaskManager opID=req=000000fa39776250-0e58 sid=520fdb7f user=root] Task Created : haTask--vim.SearchIndex.findByInventoryPath-12422411

2025-03-18T01:43:22.317Z In(166) Hostd[10946899]: [Originator@6876 sub=Vimsvc.TaskManager opID=req=000000fa39776250-0e58 sid=520fdb7f user=root] Task Completed : haTask--vim.SearchIndex.findByInventoryPath-12422411 Status success

2025-03-18T01:43:22.317Z In(166) Hostd[10946903]: [Originator@6876 sub=DatastoreBrowser opID=req=000000fa39776250-0e5b sid=520fdb7f user=root] DatastoreBrowserImpl::SearchInt MoId:675c3afe-258c04e6-9820-506b4b09346c-datastorebrowser dsPath:[]/var/run/crx/infra/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233 recursive:false

2025-03-18T01:43:22.317Z In(166) Hostd[10946903]: [Originator@6876 sub=AdapterServer opID=req=000000fa39776250-0e5b sid=520fdb7f user=root] AdapterServer caught exception; <<520fdb7f-6b87-1646-b1af-d38ad4987a93, <TCP '127.0.0.1 : 8309'>, <TCP '127.0.0.1 : 31029'>>, 675c3afe-258c04e6-9820-506b4b09346c-datastorebrowser, vim.host.DatastoreBrowser.search, (null), (null)>, N3Vim5Fault20InvalidDatastorePath9ExceptionE(Fault cause: vim.fault.InvalidDatastorePath

Can you check if you find a failed create job on the PVE-side? Here the last line of my tasklog (I imported the vmdk to VMID 171) looks like:

Code:

transferred 16.0 GiB of 16.0 GiB (100.00%)

transferred 16.0 GiB of 16.0 GiB (100.00%)

cleaned up extracted image esxi8:ha-datacenter/local_disk_01/deb12/deb12.vmdk

scsi0: successfully created disk 'local:171/vm-171-disk-0.qcow2,size=16G'

TASK OK

Best regards,

Daniel

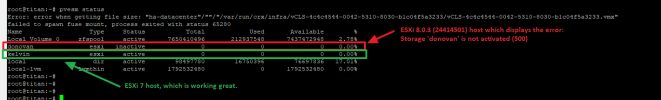

Hi Daniel,

I don’t see anything in the task history. The error occurs immediately upon clicking the Import button. There is never an opportunity to begin the import, because the "storage is not activated (500)" error prevents any further action.

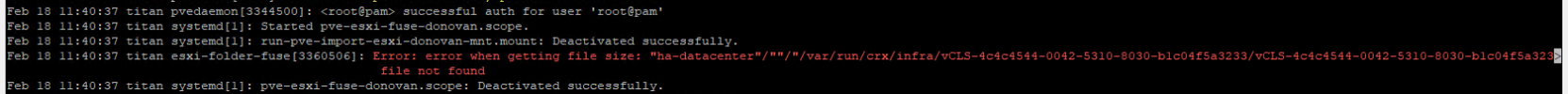

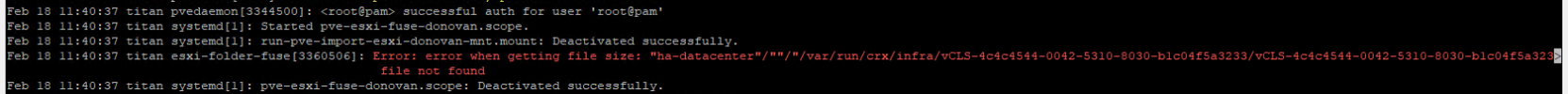

I do see this in the Proxmox system log at the exact time I select Import in the top of the window (see screenshot for reference).

Mar 21 17:22:37 titan systemd[1]: Started pve-esxi-fuse-donovan.vmware.ufponline.net.scope.

Mar 21 17:22:37 titan systemd[1]: run-pve-import-esxi-donovan.vmware.ufponline.net-mnt.mount: Deactivated successfully.

Mar 21 17:22:37 titan esxi-folder-fuse[2996240]: Error: error when getting file size: "ha-datacenter"/""/"/var/run/crx/infra/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233.vmx" file not found

Mar 21 17:22:37 titan systemd[1]: pve-esxi-fuse-donovan.vmware.ufponline.net.scope: Deactivated successfully.

Hi jad238,

I'm a little confused by the path that's being tried to be queried -- I do not yet understand how a call to:

"ha-datacenter/DONOVAN_Local_Volume_02/EAGLE/EAGLE.vmx"

would translate to:

"ha-datacenter"/""/"/var/run/crx/infra/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233/vCLS-4c4c4544-0042-5310-8030-b1c04f5a3233.vmx"

Could you please share the storage configuration of your PVE-host -- it's at '/etc/pve/storage.cfg' (sorry, I mistyped the path earlier)?
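If sharing the whole file is not an option, the ESXi entries alone can be pulled out with a small filter like the sketch below. It assumes the usual storage.cfg layout (a `<type>: <id>` header in the first column, indented properties underneath). `esxi_sections` is an illustrative name, not a PVE tool.

```shell
#!/usr/bin/env bash
# Sketch: print only the "esxi:" sections of a PVE storage configuration.
esxi_sections() {
  local cfg="${1:-/etc/pve/storage.cfg}"
  awk '
    # A section header starts in column 0 and looks like "<type>: <id>".
    /^[^[:space:]#]+:/ { in_esxi = ($1 == "esxi:") }
    # Print non-empty lines while inside an esxi section.
    in_esxi && NF      { print }
  ' "$cfg"
}

# Example:
#   esxi_sections /etc/pve/storage.cfg
```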

Please also check, if you have multiple mounts hanging that might cause conflicts:

Code:

findmnt | grep "/run/pve/import/esxi"

Best,

Daniel
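One way to read "multiple mounts hanging" is the same import path being mounted more than once. Under that assumption, a quick check could look like this sketch. It reads one mount target per line on stdin, and the helper name is made up.

```shell
#!/usr/bin/env bash
# Sketch: list ESXi import mount points that appear more than once.
# Reads one mount target per line on stdin, for example from:
#   findmnt -rn -o TARGET
duplicate_esxi_mounts() {
  grep '^/run/pve/import/esxi' | sort | uniq -d
}

# Example:
#   findmnt -rn -o TARGET | duplicate_esxi_mounts
```

No output means every ESXi import path is mounted at most once.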

Hi Daniel,

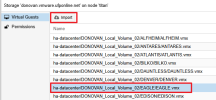

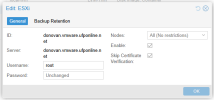

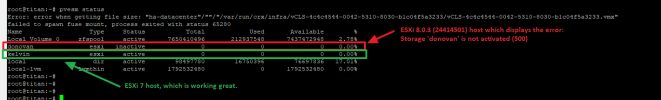

I’ve attached some screenshots for context. Basically, the ESXi 8.x host FQDN is donovan.vmware.ufponline.net and has a local VMFS volume named DONOVAN_Local_Volume_02. The VM we are trying to migrate (though trying to migrate any VM on ESXi 8.x hosts fails) is EAGLE (Linux guest, powered off without any snapshots).

On the proxmox side we have added the “ESXi” storage and have it attached directly to the ESXi 8.x host, and not vCenter.

I’ve attached the output of the findmnt | grep "/run/pve/import/esxi" command in the screenshot below. There are two ESXi storage mounts. One is to the ESXi 8.x host which is donovan.vmware.ufponline.net and seen in the first line of output. The second is to the ESXi 7.x host "kelvin" (which works perfectly for migration) and is seen in the second line of output. Both ESXi hosts are in the same vCenter and datacenter, but different clusters.

I also attached the content of the /etc/pve/storage.cfg file as well in a screenshot below.

Neither the ESXi 7.x nor the 8.x hosts have been rebooted in recent months.

I’ve also tried the migration from the same ESXi hosts but on another Proxmox host at matching patch levels and the result is the same.

Hi jad238,

I assume all storages listed by the command 'pvesm status' from your PVE-Host show 'active' or 'disabled' states?

Could you please post the output of the esxi-8 host's manifest file, or attach the file itself?

Code:

cat /run/pve/import/esxi/donovan.vmware.ufponline.net/manifest.json

Possibly we can find a reason for the weird mappings there.

Just to make sure -- the VMFS-disks are actual disks on the ESXI-host, not mounted remotes?
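Since the manifest can get long, a plain text search for the odd path may be quicker than reading it all. The sketch below makes no assumption about the manifest's structure: it just lists every quoted string containing a pattern. The helper name is illustrative.

```shell
#!/usr/bin/env bash
# Sketch: list every quoted string in a JSON file that contains a pattern.
# This is a plain text search; it does not parse the JSON.
manifest_strings_matching() {
  local manifest="$1" pattern="$2"
  grep -o "\"[^\"]*${pattern}[^\"]*\"" "$manifest" | sort -u
}

# Example:
#   manifest_strings_matching \
#     /run/pve/import/esxi/donovan.vmware.ufponline.net/manifest.json vCLS
```

Searching for `vCLS` and for `EAGLE` side by side should show whether the vCLS path from the error is coming out of the manifest itself.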