On my Proxmox machine I have two SSDs attached directly. The first holds the Proxmox OS; the second I want to use for VMs.

Basically, I want to use half of the second SSD in one VM and the other half in another, preferably with sizes that adjust automatically (one VM may end up needing more than half, and I can't predict which at this point).

In Proxmox, I've set the second SSD up as LVM-thin storage.

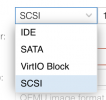

In the VM, I added a new hard disk using the SCSI Bus/Device and pointed it at the LVM-thin storage.

Unfortunately, I'm getting really bad bottlenecks. `sysbench --test=fileio` run inside the VM reports:

    Throughput:
        read, MiB/s:    10.77
        written, MiB/s: 7.18

While on the Proxmox host (on the first SSD), I'm seeing:

    Throughput:
        read, MiB/s:    71.33
        written, MiB/s: 47.56

That's roughly a 6.6x difference, close to an order of magnitude. Toggling "SSD emulation" doesn't help.
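As a quick sanity check on the size of the gap, the slowdown can be worked out from the throughput figures quoted above. This is only a minimal Python sketch; the variable names are illustrative, and the numbers are copied from the two sysbench runs:

```python
# Throughput figures (MiB/s) copied from the two sysbench runs quoted above.
vm = {"read": 10.77, "written": 7.18}
host = {"read": 71.33, "written": 47.56}

# Host-to-VM slowdown factor for each direction.
slowdown = {op: host[op] / vm[op] for op in vm}

for op, factor in slowdown.items():
    print(f"{op}: host is {factor:.1f}x faster than the VM")
```

Both directions come out at about the same factor, so the penalty seems to hit reads and writes equally rather than just one of them.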

What should I change in my workflow to get better results?