I completely wiped my system and started from scratch, assuming I had made a mistake.

I thought mixing SSD and SAS drives in LVM had caused my IO issues.

This time I left the SSD drives completely untouched and installed Proxmox on the large SAS RAID 5 array on the hardware controller.

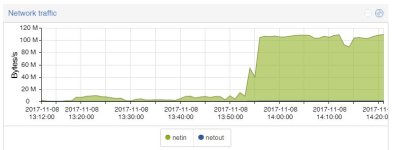

I made a backup of my VM on the NAS and set up a dedicated, separate network between the NAS and the server.

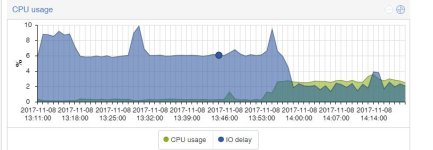

I tried to restore the VM and it starts off really well at 100 MB/s, but after a minute or less the IO delay spikes to 8-9% and the transfer rate drops to 10 MB/s or less.
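To watch that number from a shell instead of the GUI graph, here is a rough sketch. It assumes the IO delay figure Proxmox shows corresponds to the kernel's iowait counter in `/proc/stat` (my assumption, I have not verified it), and `iowait_pct` is just a name I made up for the helper.

```shell
#!/bin/sh
# Hypothetical helper: print the iowait share (in whole percent) of total CPU
# time over an interval, from two samples of the aggregate "cpu" line.
iowait_pct() {
    interval="${1:-1}"
    # Fields after "cpu": user nice system idle iowait irq softirq steal ...
    read -r _ u1 n1 s1 i1 w1 q1 sq1 st1 _ < /proc/stat
    sleep "$interval"
    read -r _ u2 n2 s2 i2 w2 q2 sq2 st2 _ < /proc/stat

    total=$(( (u2 + n2 + s2 + i2 + w2 + q2 + sq2 + st2) \
            - (u1 + n1 + s1 + i1 + w1 + q1 + sq1 + st1) ))
    waited=$(( w2 - w1 ))

    # Guard against a zero interval and against the iowait counter going
    # backwards, which the kernel does not rule out.
    [ "$total" -gt 0 ] || total=1
    [ "$waited" -ge 0 ] || waited=0

    echo $(( 100 * waited / total ))
}
```

Something like `while true; do iowait_pct 5; done` left running during the restore should show roughly when the value starts to climb.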

I tried a dd test of the drive just to see what it would do (while the VM backup was still restoring very slowly):

    root@pve:~# dd if=/dev/zero of=/root/testfile bs=1G count=1 oflag=direct
    1+0 records in
    1+0 records out
    1073741824 bytes (1.1 GB, 1.0 GiB) copied, 7.5917 s, 141 MB/s

    root@pve:~# dd if=/dev/zero of=/root/testfile bs=1G count=1 oflag=dsync
    1+0 records in
    1+0 records out
    1073741824 bytes (1.1 GB, 1.0 GiB) copied, 7.65142 s, 140 MB/s

It doesn't seem to be the RAID controller or the drives that are the problem. Could it be the network drivers/setup?

Any ideas on how I can figure out why the IO delay jumps up and the system gets really slow?

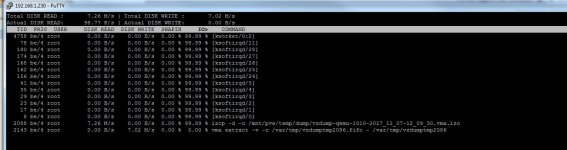

*edit* I ran iotop and saw some ks... something processes at 99.99% IO. Any ideas?
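To pin down the full names of whatever is stuck on IO, a minimal sketch is to list processes in uninterruptible sleep (state `D`), which is where tasks blocked on disk usually sit. `blocked_procs` is a made-up helper name, and I am assuming the processes iotop flagged would show up in this state.

```shell
#!/bin/sh
# Hypothetical helper: print "PID NAME" for every process whose state starts
# with D (uninterruptible sleep, typically waiting on IO).
blocked_procs() {
    ps -eo stat=,pid=,comm= | awk '$1 ~ /^D/ { print $2, $3 }'
}
```

Running `blocked_procs` a few times while the restore is crawling should show whether the same process names keep appearing.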
