Proxmox (VMware vSphere to Proxmox migration)

Hello,

We are in the process of migrating our existing VMware vSphere VMs to a Proxmox environment. All of our VM disks are on common storage shared across the ESXi hosts.

Following the documentation, I have already done these steps:

Note: no vCenter is available.

Shut down the VM (SUSE Linux 10).

Converted the .vmdk disk file to a Proxmox-compatible .qcow2 image ( qemu-img convert -p -f vmdk -O qcow2 "<Name>.vmdk" "<Name>.qcow2" ).

Attached this .qcow2 image to a newly created VM.

Set the disk bus/device to SCSI (scsi0).

Set the boot order to scsi0.

Tried to boot.
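
For reference, the steps above would look roughly like this if done with the qm CLI on the Proxmox host instead of the web UI. This is only a sketch under assumptions: the VM ID 100, the name myvm, the storage local and the volume ID are hypothetical placeholders, and the real volume ID of the imported disk depends on the storage type (it shows up as unused0 in the output of qm config). With DRY_RUN=1, the default here, the commands are only printed and nothing is changed.

```shell
#!/bin/sh
# Sketch only: VMID, NAME, STORAGE and VOLUME are hypothetical placeholders.
VMID="${VMID:-100}"
NAME="${NAME:-myvm}"
STORAGE="${STORAGE:-local}"
# Assumed volume ID of the imported disk; check "qm config" for the real one.
VOLUME="${VOLUME:-${STORAGE}:${VMID}/vm-${VMID}-disk-0.qcow2}"
DRY_RUN="${DRY_RUN:-1}"

# Print the command in dry-run mode, otherwise execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

migrate_disk() {
    # Convert the VMware disk image to qcow2.
    run qemu-img convert -p -f vmdk -O qcow2 "$NAME.vmdk" "$NAME.qcow2"
    # Import the converted image into the storage of the new VM.
    run qm importdisk "$VMID" "$NAME.qcow2" "$STORAGE" --format qcow2
    # Select the SCSI controller type ("lsi" is the LSI 53C895A).
    run qm set "$VMID" --scsihw lsi
    # Attach the imported volume as scsi0 and boot from it.
    run qm set "$VMID" --scsi0 "$VOLUME"
    run qm set "$VMID" --boot order=scsi0
}

migrate_disk
```

Setting DRY_RUN=0 would execute the commands for real, so the placeholders should be replaced with the actual VM ID, file name and storage first.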

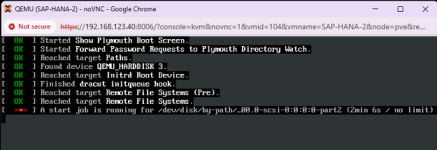

The GRUB menu is displayed and the VM starts booting, but the boot fails because it cannot find the root disk, /dev/sda2.

The following has already been tried:

Different disk controllers: LSI 53C895A, VirtIO SCSI, VirtIO SCSI Single.

But none of them seems to detect sda.

One observation: with the LSI 53C895A controller and the disk added as ide0, the system still fails to detect sda, but when I run ls /dev/ I can see the disk recognised as hda2, hda3, etc. That's it.

What could be the reason, and how do I resolve this issue with Proxmox? For historical reasons we have to keep running old SLES10 operating systems in our environment.

Update: Other SLES15 systems faced a similar problem, but after adding the disk as ide0 instead of scsi, they booted successfully. With an older OS like SLES10, this method does not seem to work. I have attached the error I get after booting, as well as the .vmx file of the VM from the vSphere environment.

Thanks.

Regards.
