Latest activity

-

UmbraAtrox replied to the thread [SOLVED] Issues with Intel ARC A770M GPU Passthrough on NUC12SNKi72 (vfio-pci not ready after FLR or bus reset). So turns out "stuck in D3" was my PSU kicking the bucket. Haven't dared to update Windows drivers past 32.0.101.8331 or upgrade card firmware, guess that's how it's going to be for a while. Dunno if it helps someone: I've changed my hookscript...

-

networkguy3 reacted to louie1961's post in the thread Recommendations for offsite backup of small local PBS? with Like.

Do you have any friends who homelab? Maybe you can set up a site to site VPN or even a tailscale network, and back your stuff up to your friend's lab and you do the same for your friend.

-

SepiDre replied to the thread [SOLVED] Internal SDN network cannot reach local network. Sorry for the late reply, got plenty around my head. I solved it like that (it drove me insane) Restart networking services of the proxmox host vpn connection lost and could not get up at all Restart the whole proxmox host no .10.0 network vm...

-

UmbraAtrox replied to the thread [SOLVED] Anti-Cheat KVM Settings. SMbios from host. Everything gone but disks. LSI all variants - no Win11 drivers megaraid & megaraid-gen2 - drivers yes but no disks Sata - can't pass vendor/SN (screencap) virtio, virtio-single, and pvscsi no longer boot with ",vendor=..."...

-

Datawolk BV replied to the thread ZFS performance on NVMe drives is significantly worse than i expected. Just a heads up: disabling CPU idle states (C6P, C6, and C1E) gave me a 6.5x boost in FSync performance. Might be worth trying if you're seeing sync-related slowdowns. root@PVE-A-00:~# pveperf /VM-DISK CPU BOGOMIPS: 460800.00 REGEX/SECOND...

-

Datawolk BV replied to the thread ZFS performance on NVMe drives is significantly worse than i expected. These commands have already been a huge help. But I don't think I'm getting the most out of them yet. # Disable C6 and C6P (states 3 and 4) for cpu in /sys/devices/system/cpu/cpu*/cpuidle/state3/disable; do echo 1 > $cpu; done for cpu in...

-


mouk reacted to bbgeek17's post in the thread tips for shared storage that 'has it all' :-) with Like.

Hi @mouk , It's not just not cluster-safe, it's not supported by PVE and you have to go out of your way to work around all the bumpers put in place to stop you. There are a number of commercial vendors in this space. It all depends on your...

-

mir replied to the thread tips for shared storage that 'has it all' :-). LVM on iSCSI LUNs from a SAN (https://pve.proxmox.com/wiki/Storage:_LVM#pvesm_lvm_config) Pay attention to "snapshot-as-volume-chain" for snapshots. Maybe using multipath. If you are using Truenas 25.04+ as storage "ZFS over iSCSI" using the new...

-

bbgeek17 replied to the thread tips for shared storage that 'has it all' :-). Hi @mouk , It's not just not cluster-safe, it's not supported by PVE and you have to go out of your way to work around all the bumpers put in place to stop you. There are a number of commercial vendors in this space. It all depends on your...

-

mouk posted the thread tips for shared storage that 'has it all' :-) in Proxmox VE: Installation and configuration. Hi all, As many, we are also contemplating a move from broadcom/vmware to proxmox, and are starting with a PoC now. I ran proxmox in the past with ceph cluster, so I know how great that combination is, but ceph is (now) not going to happen where...

-

Johannes S reacted to bbgeek17's post in the thread Problem with SANs on a three node cluster with Like.

You keep missing the point that the ZFS exists on the storage device side. The storage device, in your case Ubuntu, has a ZFS pool implemented by you, manually. You specify the name of that pool in PVE config to let PVE know what you named that...

-

Johannes S reacted to alexskysilk's post in the thread Problem with SANs on a three node cluster with Like.

As I mentioned before, options 1 and 2 are available to you. option 3 is not, at least not in a non hackey way. options 1 and 2 work the same no matter whether you choose to use one, the other, or both, and dont interact/interfere with each other...

-

cyruspy replied to the thread Interest in VPP (Vector Packet Processing) as a dataplane option for Proxmox. Why forget about filtering instead of implementing it with VPP?

-

Ghosthawk replied to the thread Problem with SANs on a three node cluster. Oh yeah, I completely overlooked the part about the ZFS pool on the Ubuntu server. You are right. Yes, that was the list that I found, and then we are on the same page, thanks. I use LIO (Linux) and it is supported. I tested it in the test...

-

carles89 reacted to bbgeek17's post in the thread Problem with SANs on a three node cluster with Like.

ZFS over iSCSI in PVE is a specific scheme that implies: a) There is an external storage device b) You can login to this server via SSH as root (this excludes Dell, Netapp, Hitachi, etc) c) There is internal storage in that server (HDD, SAS...

-

carles89 reacted to alexskysilk's post in the thread Problem with SANs on a three node cluster with Like.

a SAN would be a shared device of some sort. when you say you have "two" SANs, do you mean you have two boxes serving independent iscsi luns, or two boxes in a failover capacity (meaning one set of luns?) In either case, this becomes a simple...

-

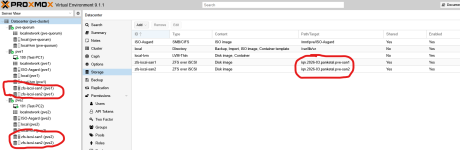

Ghosthawk replied to the thread Problem with SANs on a three node cluster. I had already configured Option 2, and of course they have different iqns. That worked fine so far (see image). The part I'm still not sure about seems to be this: “and use a logical mirror on top of them (mdadm.)” I'll have to look up how to use...

-

UdoB reacted to alexskysilk's post in the thread Problem with SANs on a three node cluster with Like.

As I mentioned before, options 1 and 2 are available to you. option 3 is not, at least not in a non hackey way. options 1 and 2 work the same no matter whether you choose to use one, the other, or both, and dont interact/interfere with each other...
