Latest activity

-

Onslow replied to the thread [SOLVED] Why does my VM backup to PBS hang and show "dirty-bitmap status: created new"?. Hi, @pulipulichen. You posted only a screenshot and, to make matters worse, it doesn't show the full log content (the wrapped parts are cut off). If we could see the log in CODE blocks (the </> icon above), maybe we could help more. Anyway, what I...

-

_gabriel replied to the thread [SOLVED] Why does my VM backup to PBS hang and show "dirty-bitmap status: created new"?. A full read of the VM's source disk is required when the VM has been shut down. The dirty bitmap then lets only the next backup skip the full read, because the dirty bitmap is managed by the VM's QEMU process.

-

Onslow replied to the thread Can't use keyboard during install Dell Poweredge R730. Welcome, @Zexan. Hard to guess, because you haven't given the details, e.g. what exactly happens at which stage of the installation. Anyway, you could check whether the same problem exists with installers of other systems, like Debian, Ubuntu or when...

-

rungekutta replied to the thread SR-IOV success stories?. Resurrecting this old thread... I've come back to the topic and been running SR-IOV for a few weeks now without any issues but with noticeable improvements in latency. This time I kept things clean and simple: One 25Gb PF for host (including...

-

gurubert reacted to aaron's post in the thread How to precisely check the actual disk usage of Ceph RBD? with Like.

rbd -p <pool> du would be the quickest way

-

triumphtruth replied to the thread [SOLVED] PVE 8.4.17 Recover from Crashed Upgrade. I tried several other things as well to identify the issue, but nothing is helping. At one point I thought of upgrading things, but that is also not possible. The problem is I can't even find logs for the services. systemctl start pvestatd or systemctl...

-

triumphtruth replied to the thread [SOLVED] PVE 8.4.17 Recover from Crashed Upgrade. Yes, I followed them to delete the nag and tried apt --fix-broken install. I couldn't identify anything else I should have followed.

-

Johannes S reacted to Bu66as's post in the thread Adguard LXC 100% utilization on Proxmox with Like.

@nitrosont, your top output shows the problem quite clearly: it is not a CPU problem but a memory problem. MiB Mem: 256.0 total, 1.2 free, 253.3 used (RAM practically full); MiB Swap: 256.0 total, 0.1 free, 255.9 used (swap full as well)...

-

The allocated pages confirm the picture: 1,673,851 pages × 4 MiB (default page size on the MSA 2060) = ~6.39 TiB, which matches the ~7.0 TB at pool level. OCFS2 reports 3.8T used, so roughly 2.5 TiB are stale allocations that the filesystem...
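The page arithmetic in this post can be checked with a short sketch. It only assumes what the post states (1,673,851 allocated pages of 4 MiB each); the function and constant names are hypothetical and not part of any MSA or OCFS2 tooling.

```python
# Unit sizes in bytes: binary (MiB, TiB) versus decimal (TB).
MIB = 1024 ** 2
TIB = 1024 ** 4
TB = 1000 ** 4


def allocated_capacity(pages: int, page_size_mib: int = 4) -> tuple[float, float]:
    """Return the allocated capacity as (TiB, TB) for a given page count.

    The 4 MiB default page size is taken from the post, not from vendor docs.
    """
    total_bytes = pages * page_size_mib * MIB
    return total_bytes / TIB, total_bytes / TB


tib, tb = allocated_capacity(1_673_851)
print(f"{tib:.2f} TiB = {tb:.2f} TB")
```

The same byte count reads as about 6.39 in binary units and about 7.0 in decimal units, which is why the page-level and pool-level figures agree.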

-

It is striking that the MSA utilization is still unchanged at 94% after the scrub completed, even though ~500 GB were reported via UNMAP. The disk-group scrub is primarily an integrity check (parity verify); the UNMAP processing runs...

-

Johannes S reacted to Bu66as's post in the thread Slow 4k performance in Windows 11 VM with Like.

@Joe77, the winsat results with v3 vs v4 are practically identical (Random 16K Read 179 vs 192 MB/s), which rules out CPU type and VBS as the cause. That a single SATA SSD delivers 831 MB/s random read for @boisbleu and your three...

-

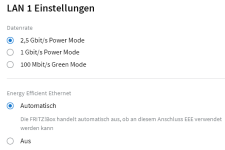

An.drea replied to the thread [SOLVED] Regular crashes after update to 8.3.5: Edit: cause intel e1000e networkcard. Depending on the box, also for the Ethernet ports: Best regards, Andrea

-

tre4B replied to the thread ZFS rpool isn't imported automatically after update from PVE9.0.10 to latest PVE9.1 and kernel 6.17 with viommu=virtio. Mine is doing this too. I've tried the above but no dice, still stops at the initramfs and makes me type zpool import rpool, after which an exit makes it boot perfectly. I try to fix this every now and again but it has been doing it for a while...

-

fiona replied to the thread vmdk disks import failure with zeroinit. For reference, for other people stumbling upon this, there is also a bug report: https://bugzilla.proxmox.com/show_bug.cgi?id=5315 which mentions: The Migrate to Proxmox VE wiki article mentions something similar (for encryption):

-

tscret replied to the thread [TUTORIAL] PoC 2 Node HA Cluster with Shared iSCSI GFS2. @iwik Thanks for your reply. We had few issues... but this week, with the upgrades, we lost quorum, which ended in a crash. We are also testing LVM over iSCSI, which was introduced in PVE 9. For now, an NFS-based share would be the best...

-

Bu66as replied to the thread Network problems with an Nginx Proxy Manager CT. Hello @nimblefox, the OpenResty problem is on the repository side. Debian Trixie uses sqv for signature verification, and since 2026-02-01 SHA1-based binding signatures are rejected. The OpenResty signing key still uses SHA1, which has to be fixed upstream...

-

Onslow replied to the thread [SOLVED] PVE 8.4.17 Recover from Crashed Upgrade. Have you studied the threads I linked to? And possibly other similar ones? Have you tried the actions described there?

-

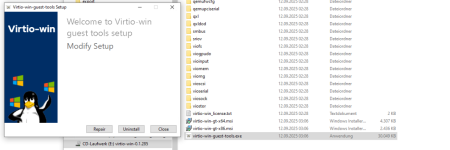

Saron replied to the thread [SOLVED] Proxmox VE - VM Disk Hot-Add Not Detected by Guest OS. Hotplug is supported in Proxmox VE. In your VM's settings you can configure which hardware types have hotplug enabled (for disks it's enabled by default). For IDE disks hotplug does not seem to work, and for SCSI disks this only seems to work with...

-

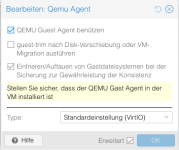

G.punkt replied to the thread [SOLVED] No Guest Agent configured - No shutdown of the machine. Hello, the thread is already somewhat old, but I would like to continue it. I am an absolute newcomer to Proxmox, switching over from VMware. I imported a machine from our ESX, and it runs in Proxmox as well. In the...