I'm a bit of a Proxmox noob, so sorry if I'm missing anything. I'm having trouble determining the issue here and would appreciate any help.

I have a RAID 10 configured on my Proxmox server, made up of 4 hard drives: 2 WD drives and 2 Seagate drives. A while back I was seeing some weird slowness with applications running on those drives, and then saw that the ZFS pool was showing up as degraded. Specifically, one drive is showing up as DEGRADED with the message "too many errors".

I had the drives hooked up to my server using a cheap NVMe to SFF-8087 adapter, then an SFF-8087 to 4x SATA adapter, so I assumed this was the issue. I replaced it with an NVMe to SATA adapter, but unfortunately that didn't change anything.

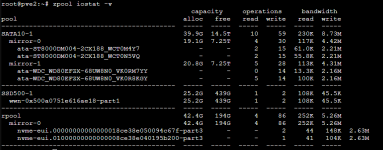

Here's a screenshot of the result from running `zpool iostat -v` as well. Specifically, SATA10 is the problem pool.

Is there anything I can try to fix this? I'm hoping it's not an issue with the drive itself, but I can look into buying a replacement if needed.
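In case it makes the output easier to read, here's a rough Python sketch of how I'd pick the flagged devices out of `zpool status` text. It only assumes the usual NAME / STATE / READ / WRITE / CKSUM column layout. The `flagged_devices` helper, the `disk-a` to `disk-d` names, and the counter values in `SAMPLE` are made up for illustration and are not my real output.

```python
import re

# One device row of `zpool status`: NAME STATE READ WRITE CKSUM [optional note].
# Counters may be abbreviated (e.g. "1.2K"), so they are kept as strings.
COUNTER = r"(\d[\d.]*[KMGTPE]?)"
ROW = re.compile(
    r"^\s*(\S+)\s+([A-Z]+)\s+" + COUNTER + r"\s+" + COUNTER + r"\s+" + COUNTER
    + r"(?:\s+(.*))?$"
)


def flagged_devices(status_text):
    """Return (name, state, read, write, cksum, note) for every row that is
    not ONLINE or has a non-zero error counter."""
    flagged = []
    for line in status_text.splitlines():
        match = ROW.match(line)
        if not match:
            continue  # header, blank line, or non-table text
        name, state, read, write, cksum, note = match.groups()
        if state != "ONLINE" or any(c != "0" for c in (read, write, cksum)):
            flagged.append((name, state, read, write, cksum, note or ""))
    return flagged


# Hypothetical sample: device names and counts are placeholders, not real data.
SAMPLE = """\
  pool: SATA10
 state: DEGRADED
config:

\tNAME          STATE     READ WRITE CKSUM
\tSATA10        DEGRADED     0     0     0
\t  mirror-0    ONLINE       0     0     0
\t    disk-a    ONLINE       0     0     0
\t    disk-b    ONLINE       0     0     0
\t  mirror-1    DEGRADED     0     0     0
\t    disk-c    DEGRADED     0     0    52  too many errors
\t    disk-d    ONLINE       0     0     0
"""
```

Calling `flagged_devices(SAMPLE)` returns the rows for the pool, the degraded mirror, and the one disk carrying the error note. On the real server I'd feed it the text from `zpool status SATA10` instead.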
