After introducing a new server running Proxmox 4.1-1, we have to upgrade our three servers running 3.4-6.

Following the wiki article "Upgrade from 3.x to 4.0" exactly (cut and paste), the upgrade failed and the server now hangs at the shell.

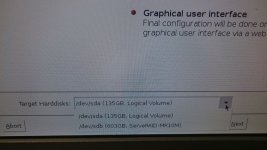

All VMs on that server had already been moved to the new server, so we decided to install a fresh Proxmox 4 from the CD. This also failed and the machine rebooted. During the normal install procedure we could see only one device (sda) and not the second (sdb), where 3.4 was installed. Next we tried the debug install. At the "#" prompt we could not enter anything; the system hung.

I copied the following information from the sister server, which is identical.

==============================

Server

IBM x3850M2

lspci

04:00.0 SCSI storage controller: LSI Logic / Symbios Logic SAS1078 PCI-Express Fusion-MPT SAS (rev 03)

18:00.0 RAID bus controller: LSI Logic / Symbios Logic MegaRAID SAS 1078 (rev 04)

fdisk -l

Disk /dev/sda: 1168.0 GB, 1167996223488 bytes

255 heads, 63 sectors/track, 142000 cylinders, total 2281242624 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk identifier: 0x00000000

Disk /dev/sda doesn't contain a valid partition table

Disk /dev/sdb: 146.0 GB, 145999527936 bytes

255 heads, 63 sectors/track, 17750 cylinders, total 285155328 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk identifier: 0x000aeeb7

Device Boot Start End Blocks Id System

/dev/sdb1 * 2048 1048575 523264 83 Linux

/dev/sdb2 1048576 285155327 142053376 8e Linux LVM

======================

Is it possible that a kernel module for the RAID controllers is missing, or what else could be the reason?
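To narrow down the driver question, a check along these lines could be run on the sister server and, if a shell becomes usable, on the failing one. This is only a sketch: the module names `megaraid_sas` (for the MegaRAID SAS 1078) and `mptsas` (for the Fusion-MPT SAS controller) are my assumption about which drivers belong to these two controllers, and `check_raid_modules` is a hypothetical helper name.

```shell
# Report whether the assumed RAID/SAS drivers appear in lsmod-style output
# read from stdin. The module names are assumptions, not confirmed for
# this hardware: megaraid_sas (MegaRAID SAS 1078), mptsas (Fusion-MPT SAS).
check_raid_modules() {
    input=$(cat)
    for mod in megaraid_sas mptsas; do
        # The first column of lsmod output is the module name.
        if printf '%s\n' "$input" | awk '{print $1}' | grep -qx "$mod"; then
            echo "$mod: loaded"
        else
            echo "$mod: missing"
        fi
    done
}

# Usage on a running system:
#   lsmod | check_raid_modules
#   lspci -k    # also lists the kernel driver in use for each device
```

If one of the modules shows up as loaded on 3.4 but is absent in the 4.x installer environment, that would point towards a missing driver rather than a hardware fault.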

The IBM servers are very reliable and have 128 GByte of memory.

Dieter
