Hi all,

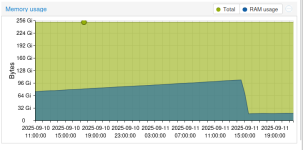

I recently added a host to a cluster. It runs a newer kernel version than the others, and I am seeing a gradual memory leak that consumes roughly an additional 500MB a minute. This continues until the host grinds to a halt and becomes inoperable. The host is identical in spec to the others in the cluster, none of which show the issue. This is a Ceph cluster, with OSDs on each host. In htop, no process is using an extreme amount of memory, which points to a kernel memory leak. I am using ZFS for the boot disks, and the ARC looks healthy (arc_summary output below).
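One way to check the kernel-leak theory is to snapshot /proc/meminfo twice and see which counters grow. The sketch below is only an illustration: the function names are made up for this example, and it assumes the usual `Name: value kB` layout of /proc/meminfo.

```python
from pathlib import Path


def parse_meminfo(text):
    """Parse /proc/meminfo-style text into {field: value}.

    Values are in KiB for the 'kB' fields; count fields such as
    HugePages_Total are kept as plain integers.
    """
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue
        parts = rest.split()
        if parts and parts[0].isdigit():
            values[name.strip()] = int(parts[0])
    return values


def snapshot(path="/proc/meminfo"):
    """Read and parse the live file (Linux only)."""
    return parse_meminfo(Path(path).read_text())


def largest_growth(before, after, top=5):
    """Return up to `top` (field, delta) pairs that grew between snapshots,
    largest increase first."""
    deltas = {k: after[k] - before[k] for k in after if k in before}
    grown = [(k, d) for k, d in deltas.items() if d > 0]
    return sorted(grown, key=lambda kv: kv[1], reverse=True)[:top]
```

Calling `snapshot()`, waiting a minute, and calling it again should show whether the growth lands in counters such as `Slab` or `SUnreclaim` (kernel side) or in `AnonPages` (process memory). Growth that shows up in none of the fields would not rule out a kernel leak, since some kernel allocations are not itemised in /proc/meminfo.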

I'm going to try upgrading this host to a newer kernel version to see if that helps. A reboot does not seem to fix this; the leak continues once the host is back up.

Any suggestions?

The existing hosts are awaiting a reboot to apply the new kernel, which I am obviously abstaining from for now.
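To put the roughly 500MB a minute into perspective, the rate and the time left before memory runs out can be estimated from two `MemAvailable` readings. This is a rough sketch under stated assumptions: the function names are hypothetical, the readings are in KiB as reported by /proc/meminfo, and the leak is assumed to be linear.

```python
def leak_rate_mib_per_min(avail_start_kib, avail_end_kib, seconds):
    """Average drop in available memory, in MiB per minute."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    lost_mib = (avail_start_kib - avail_end_kib) / 1024
    return lost_mib * 60 / seconds


def minutes_until_exhausted(avail_kib, rate_mib_per_min):
    """Minutes until available memory reaches zero at a constant rate.

    Returns None when memory is not shrinking.
    """
    if rate_mib_per_min <= 0:
        return None
    return (avail_kib / 1024) / rate_mib_per_min
```

For example, with a purely hypothetical 64 GiB still available and a measured rate of 500 MiB a minute, `minutes_until_exhausted(64 * 1024 * 1024, 500.0)` gives a little over two hours. The real figure depends on how much memory the host has, which is not stated here.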

Code:

arc_summary -s arc

------------------------------------------------------------------------

ZFS Subsystem Report Mon Jul 28 12:52:21 2025

Linux 6.8.12-13-pve 2.2.8-pve1

Machine: redacted (x86_64) 2.2.8-pve1

ARC status: HEALTHY

Memory throttle count: 0

ARC size (current): 4.1 % 672.8 MiB

Target size (adaptive): 73.7 % 11.8 GiB

Min size (hard limit): 73.7 % 11.8 GiB

Max size (high water): 1:1 16.0 GiB

Anonymous data size: < 0.1 % 280.0 KiB

Anonymous metadata size: 0.0 % 0 Bytes

MFU data target: 37.5 % 241.8 MiB

MFU data size: 26.3 % 169.6 MiB

MFU ghost data size: 0 Bytes

MFU metadata target: 12.5 % 80.6 MiB

MFU metadata size: 4.1 % 26.5 MiB

MFU ghost metadata size: 0 Bytes

MRU data target: 37.5 % 241.8 MiB

MRU data size: 59.5 % 383.7 MiB

MRU ghost data size: 0 Bytes

MRU metadata target: 12.5 % 80.6 MiB

MRU metadata size: 10.0 % 64.8 MiB

MRU ghost metadata size: 0 Bytes

Uncached data size: 0.0 % 0 Bytes

Uncached metadata size: 0.0 % 0 Bytes

Bonus size: 0.6 % 3.8 MiB

Dnode cache target: 10.0 % 1.6 GiB

Dnode cache size: 0.9 % 14.1 MiB

Dbuf size: 1.0 % 6.7 MiB

Header size: 0.4 % 2.4 MiB

L2 header size: 0.0 % 0 Bytes

ABD chunk waste size: 0.2 % 1.1 MiB

ARC hash breakdown:

Elements max: 9.5k

Elements current: 99.7 % 9.5k

Collisions: 19

Chain max: 1

Chains: 2

ARC misc:

Deleted: 17

Mutex misses: 0

Eviction skips: 1

Eviction skips due to L2 writes: 0

L2 cached evictions: 0 Bytes

L2 eligible evictions: 279.0 KiB

L2 eligible MFU evictions: 0.0 % 0 Bytes

L2 eligible MRU evictions: 100.0 % 279.0 KiB

L2 ineligible evictions: 4.0 KiB
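The ARC figures above can also be tracked over time, to confirm that the ARC stays small while overall usage climbs. A minimal sketch, assuming the raw counters are read from /proc/spl/kstat/zfs/arcstats (the kstat file that ZFS on Linux exposes, with `name type data` columns); the helper names are made up for this example.

```python
def parse_arcstats(text):
    """Parse arcstats-style text into {name: value_in_bytes}.

    Header lines are skipped because they do not have exactly three
    columns with a numeric third column.
    """
    stats = {}
    for line in text.splitlines():
        fields = line.split()
        if len(fields) == 3 and fields[2].isdigit():
            stats[fields[0]] = int(fields[2])
    return stats


def arc_mib(stats, names=("size", "c", "c_min", "c_max")):
    """Return the selected ARC sizes converted from bytes to MiB."""
    return {n: stats[n] / (1024 * 1024) for n in names if n in stats}
```

If `size` stays in the hundreds of MiB, as in the report above, while the host loses memory every minute, the ARC is unlikely to be where the memory is going.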

Code:

lscpu

Architecture: x86_64

CPU op-mode(s): 32-bit, 64-bit

Address sizes: 46 bits physical, 48 bits virtual

Byte Order: Little Endian

CPU(s): 48

On-line CPU(s) list: 0-47

Vendor ID: GenuineIntel

BIOS Vendor ID: Intel(R) Corporation

Model name: Intel(R) Xeon(R) Gold 6136 CPU @ 3.00GHz

BIOS Model name: Intel(R) Xeon(R) Gold 6136 CPU @ 3.00GHz CPU @ 3.0GHz

BIOS CPU family: 179

CPU family: 6

Model: 85

Thread(s) per core: 2

Core(s) per socket: 12

Socket(s): 2

Stepping: 4

CPU(s) scaling MHz: 75%

CPU max MHz: 3700.0000

CPU min MHz: 1200.0000

BogoMIPS: 6000.00

Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc art arch_perfmon pebs bts rep_good nopl xtopology nonstop_tsc cpuid aperfmperf pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 sdbg fma cx16 xtpr pdcm pcid dca sse4_1 sse4_2 x2apic movbe popcnt tsc_deadline_timer aes xsave avx f16c rdrand lahf_lm abm 3dnowprefetch cpuid_fault epb cat_l3 cdp_l3 pti intel_ppin ssbd mba ibrs ibpb stibp tpr_shadow flexpriority ept vpid ept_ad fsgsbase tsc_adjust bmi1 hle avx2 smep bmi2 erms invpcid rtm cqm mpx rdt_a avx512f avx512dq rdseed adx smap clflushopt clwb intel_pt avx512cd avx512bw avx512vl xsaveopt xsavec xgetbv1 xsaves cqm_llc cqm_occup_llc cqm_mbm_total cqm_mbm_local dtherm ida arat pln pts hwp hwp_act_window hwp_pkg_req vnmi pku ospke md_clear flush_l1d arch_capabilities

Virtualization features:

Virtualization: VT-x

Caches (sum of all):

L1d: 768 KiB (24 instances)

L1i: 768 KiB (24 instances)

L2: 24 MiB (24 instances)

L3: 49.5 MiB (2 instances)

NUMA:

NUMA node(s): 2

NUMA node0 CPU(s): 0-11,24-35

NUMA node1 CPU(s): 12-23,36-47

Vulnerabilities:

Gather data sampling: Mitigation; Microcode

Itlb multihit: KVM: Mitigation: VMX disabled

L1tf: Mitigation; PTE Inversion; VMX conditional cache flushes, SMT vulnerable

Mds: Mitigation; Clear CPU buffers; SMT vulnerable

Meltdown: Mitigation; PTI

Mmio stale data: Mitigation; Clear CPU buffers; SMT vulnerable

Reg file data sampling: Not affected

Retbleed: Mitigation; IBRS

Spec rstack overflow: Not affected

Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl

Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization

Spectre v2: Mitigation; IBRS; IBPB conditional; STIBP conditional; RSB filling; PBRSB-eIBRS Not affected; BHI Not affected

Srbds: Not affected

Tsx async abort: Mitigation; Clear CPU buffers; SMT vulnerable

Code:

cat /proc/cmdline

initrd=\EFI\proxmox\6.8.12-13-pve\initrd.img-6.8.12-13-pve root=ZFS=rpool/ROOT/pve-1 boot=zfs

New Host:

Code:

pveversion -v

proxmox-ve: 8.4.0 (running kernel: 6.8.12-13-pve)

pve-manager: 8.4.5 (running version: 8.4.5/57892e8e686cb35b)

proxmox-kernel-helper: 8.1.4

proxmox-kernel-6.8.12-13-pve-signed: 6.8.12-13

proxmox-kernel-6.8: 6.8.12-13

proxmox-kernel-6.8.12-9-pve-signed: 6.8.12-9

ceph: 19.2.2-pve1~bpo12+1

ceph-fuse: 19.2.2-pve1~bpo12+1

corosync: 3.1.9-pve1

criu: 3.17.1-2+deb12u1

frr-pythontools: 10.2.2-1+pve1

glusterfs-client: 10.3-5

ifupdown2: 3.2.0-1+pmx11

ksm-control-daemon: 1.5-1

libjs-extjs: 7.0.0-5

libknet1: 1.30-pve2

libproxmox-acme-perl: 1.6.0

libproxmox-backup-qemu0: 1.5.2

libproxmox-rs-perl: 0.3.5

libpve-access-control: 8.2.2

libpve-apiclient-perl: 3.3.2

libpve-cluster-api-perl: 8.1.2

libpve-cluster-perl: 8.1.2

libpve-common-perl: 8.3.2

libpve-guest-common-perl: 5.2.2

libpve-http-server-perl: 5.2.2

libpve-network-perl: 0.11.2

libpve-rs-perl: 0.9.4

libpve-storage-perl: 8.3.6

libspice-server1: 0.15.1-1

lvm2: 2.03.16-2

lxc-pve: 6.0.0-1

lxcfs: 6.0.0-pve2

novnc-pve: 1.6.0-2

proxmox-backup-client: 3.4.3-1

proxmox-backup-file-restore: 3.4.3-1

proxmox-backup-restore-image: 0.7.0

proxmox-firewall: 0.7.1

proxmox-kernel-helper: 8.1.4

proxmox-mail-forward: 0.3.3

proxmox-mini-journalreader: 1.5

proxmox-offline-mirror-helper: 0.6.7

proxmox-widget-toolkit: 4.3.12

pve-cluster: 8.1.2

pve-container: 5.3.0

pve-docs: 8.4.0

pve-edk2-firmware: 4.2025.02-4~bpo12+1

pve-esxi-import-tools: 0.7.4

pve-firewall: 5.1.2

pve-firmware: 3.16-3

pve-ha-manager: 4.0.7

pve-i18n: 3.4.5

pve-qemu-kvm: 9.2.0-7

pve-xtermjs: 5.5.0-2

qemu-server: 8.4.1

smartmontools: 7.3-pve1

spiceterm: 3.3.0

swtpm: 0.8.0+pve1

vncterm: 1.8.0

zfsutils-linux: 2.2.8-pve1

Other Hosts (No memory leak):

Code:

proxmox-ve: 8.4.0 (running kernel: 6.8.12-5-pve)

pve-manager: 8.4.5 (running version: 8.4.5/57892e8e686cb35b)

proxmox-kernel-helper: 8.1.4

proxmox-kernel-6.8.12-13-pve-signed: 6.8.12-13

proxmox-kernel-6.8: 6.8.12-13

proxmox-kernel-6.8.12-10-pve-signed: 6.8.12-10

proxmox-kernel-6.8.12-8-pve-signed: 6.8.12-8

proxmox-kernel-6.8.12-5-pve-signed: 6.8.12-5

proxmox-kernel-6.8.12-4-pve-signed: 6.8.12-4

ceph: 19.2.2-pve1~bpo12+1

ceph-fuse: 19.2.2-pve1~bpo12+1

corosync: 3.1.9-pve1

criu: 3.17.1-2+deb12u1

glusterfs-client: 10.3-5

ifupdown2: 3.2.0-1+pmx11

ksm-control-daemon: 1.5-1

libjs-extjs: 7.0.0-5

libknet1: 1.30-pve2

libproxmox-acme-perl: 1.6.0

libproxmox-backup-qemu0: 1.5.2

libproxmox-rs-perl: 0.3.5

libpve-access-control: 8.2.2

libpve-apiclient-perl: 3.3.2

libpve-cluster-api-perl: 8.1.2

libpve-cluster-perl: 8.1.2

libpve-common-perl: 8.3.2

libpve-guest-common-perl: 5.2.2

libpve-http-server-perl: 5.2.2

libpve-network-perl: 0.11.2

libpve-rs-perl: 0.9.4

libpve-storage-perl: 8.3.6

libspice-server1: 0.15.1-1

lvm2: 2.03.16-2

lxc-pve: 6.0.0-1

lxcfs: 6.0.0-pve2

novnc-pve: 1.6.0-2

proxmox-backup-client: 3.4.3-1

proxmox-backup-file-restore: 3.4.3-1

proxmox-backup-restore-image: 0.7.0

proxmox-firewall: 0.7.1

proxmox-kernel-helper: 8.1.4

proxmox-mail-forward: 0.3.3

proxmox-mini-journalreader: 1.5

proxmox-offline-mirror-helper: 0.6.7

proxmox-widget-toolkit: 4.3.12

pve-cluster: 8.1.2

pve-container: 5.3.0

pve-docs: 8.4.0

pve-edk2-firmware: 4.2025.02-4~bpo12+1

pve-esxi-import-tools: 0.7.4

pve-firewall: 5.1.2

pve-firmware: 3.16-3

pve-ha-manager: 4.0.7

pve-i18n: 3.4.5

pve-qemu-kvm: 9.2.0-7

pve-xtermjs: 5.5.0-2

qemu-server: 8.4.1

smartmontools: 7.3-pve1

spiceterm: 3.3.0

swtpm: 0.8.0+pve1

vncterm: 1.8.0

zfsutils-linux: 2.2.8-pve1

Code:

cat /proc/cmdline

initrd=\EFI\proxmox\6.8.12-5-pve\initrd.img-6.8.12-5-pve root=ZFS=rpool/ROOT/pve-1 boot=zfs
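Since the two package listings are long, a small diff helps to spot what actually differs between the new host and the others. The following is a sketch only, assuming each listing has been saved as text in the `name: version` form shown above; the function names are hypothetical.

```python
def parse_versions(text):
    """Parse 'name: version' lines (as printed by pveversion -v) into a dict.

    Only the first colon splits the line, so a value such as
    '8.4.0 (running kernel: 6.8.12-13-pve)' is kept whole.
    """
    packages = {}
    for line in text.splitlines():
        name, sep, version = line.partition(":")
        if sep and name.strip():
            packages[name.strip()] = version.strip()
    return packages


def diff_versions(a, b):
    """Return {name: (version_in_a, version_in_b)} for entries that differ.

    None marks a package that is missing from one side.
    """
    names = sorted(set(a) | set(b))
    return {n: (a.get(n), b.get(n)) for n in names if a.get(n) != b.get(n)}
```

Applied to the two listings above, this should flag the running kernel (6.8.12-13-pve against 6.8.12-5-pve), the set of installed kernel packages, and frr-pythontools, which appears only in the new host's listing.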