I am trying to migrate a VM back to its intended node, and I get the following error.

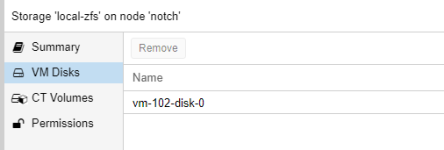

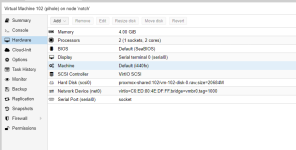

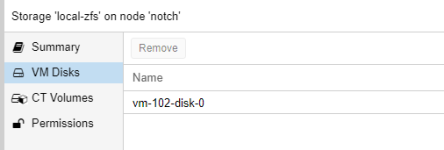

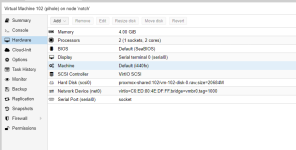

After some searching, I found that, for some reason, there is a local VM disk on the local ZFS storage target, even though the VM's disks are on an NFS share over the network.

There is no file for this VM disk on that disk, yet I cannot migrate the VM because this disk image exists. I'm not sure what to do.
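One quick way to see which storage each disk entry in the VM config points to is to parse the output of `qm config 102`. This is a minimal Python sketch, assuming the usual `key: storage:volume,options` line format. The storage IDs `nfs-share` and `local-zfs` in the sample config are hypothetical placeholders for whatever the cluster actually uses. A volume that is detached but still tracked by the VM config appears as an `unusedN` entry.

```python
import re
from typing import Dict

# Disk-like keys in a Proxmox VM config; "unusedN" holds volumes that are still
# tracked by the config but not attached to any bus.
DISK_KEY = re.compile(r"^(?:ide|sata|scsi|virtio|unused)\d+$|^(?:efidisk0|tpmstate0)$")


def disk_storages(config_text: str) -> Dict[str, str]:
    """Map each disk key in a `qm config`-style dump to the storage ID it uses."""
    result: Dict[str, str] = {}
    for line in config_text.splitlines():
        if line.startswith("["):
            break  # snapshot section in the raw .conf file; ignore the rest
        key, sep, value = line.partition(":")
        key = key.strip()
        if not sep or not DISK_KEY.match(key):
            continue
        volume, *options = value.strip().split(",")
        if volume == "none" or "media=cdrom" in options:
            continue  # empty drive or CD-ROM, not a VM disk
        result[key] = volume.partition(":")[0]
    return result


# Hypothetical config; "nfs-share" and "local-zfs" are placeholder storage IDs.
sample = """\
boot: order=scsi0
cores: 2
ide2: none,media=cdrom
memory: 4096
scsi0: nfs-share:102/vm-102-disk-0.qcow2,size=32G
unused0: local-zfs:vm-102-disk-0
"""

local = {k: s for k, s in disk_storages(sample).items() if s == "local-zfs"}
print(local)  # {'unused0': 'local-zfs'}
```

If no entry points at the local ZFS storage, the stray volume is not referenced by the VM config itself.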

Task log:

```
2022-04-03 19:34:33 starting migration of VM 102 to node 'kratos' (10.0.0.61)
2022-04-03 19:34:33 replicating disk images
2022-04-03 19:34:33 start replication job
2022-04-03 19:34:33 guest => VM 102, running => 1925
2022-04-03 19:34:33 volumes =>
2022-04-03 19:34:34 (remote_prepare_local_job) no volumes specified
2022-04-03 19:34:34 end replication job with error: command '/usr/bin/ssh -e none -o 'BatchMode=yes' -o 'HostKeyAlias=kratos' root@10.0.0.61 -- pvesr prepare-local-job 102-0 --last_sync 0' failed: exit code 255
2022-04-03 19:34:34 ERROR: command '/usr/bin/ssh -e none -o 'BatchMode=yes' -o 'HostKeyAlias=kratos' root@10.0.0.61 -- pvesr prepare-local-job 102-0 --last_sync 0' failed: exit code 255
2022-04-03 19:34:34 aborting phase 1 - cleanup resources
2022-04-03 19:34:34 ERROR: migration aborted (duration 00:00:02): command '/usr/bin/ssh -e none -o 'BatchMode=yes' -o 'HostKeyAlias=kratos' root@10.0.0.61 -- pvesr prepare-local-job 102-0 --last_sync 0' failed: exit code 255
TASK ERROR: migration aborted
```
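The log shows the abort happening in the replication step: the remote `pvesr prepare-local-job 102-0` call exits with code 255 right after `no volumes specified`. This is a minimal Python sketch that pulls the first failing command, its exit code, and the replication job ID out of a task log like this one. The regexes assume the exact `command '...' failed: exit code N` wording in the log above; they are not based on any documented log format.

```python
import re
from typing import Optional, Tuple

# Matches "command '<cmd>' failed: exit code <n>"; the greedy .+ skips over
# the single quotes that appear inside the ssh options.
FAILED_CMD = re.compile(r"command '(?P<cmd>.+)' failed: exit code (?P<code>\d+)")
# Replication job IDs have the form "<vmid>-<n>", e.g. 102-0.
REPL_JOB = re.compile(r"pvesr prepare-local-job (?P<job>\S+)")


def first_failure(log_text: str) -> Optional[Tuple[str, int, Optional[str]]]:
    """Return (command, exit code, replication job ID or None) for the first failed command."""
    for line in log_text.splitlines():
        m = FAILED_CMD.search(line)
        if m:
            job = REPL_JOB.search(m.group("cmd"))
            return m.group("cmd"), int(m.group("code")), job.group("job") if job else None
    return None


sample_log = """\
2022-04-03 19:34:33 start replication job
2022-04-03 19:34:34 (remote_prepare_local_job) no volumes specified
2022-04-03 19:34:34 end replication job with error: command '/usr/bin/ssh -e none -o 'BatchMode=yes' -o 'HostKeyAlias=kratos' root@10.0.0.61 -- pvesr prepare-local-job 102-0 --last_sync 0' failed: exit code 255
TASK ERROR: migration aborted
"""

cmd, code, job = first_failure(sample_log)
print(job, code)  # 102-0 255
```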