I'm migrating a ZFS pool from a non-Proxmox-managed system to a Proxmox-managed system.

This is a two-part question.

1. How can I reliably pass a disk to a VM so that ZFS can use it again?

I have been able to forward a whole disk (like my boot drive) and a 2-disk mirror to the VM with no issue; the guest just took them as is. However, with my 5-disk array, 2 of the disks don't show up in the guest. On the host I can import the drives with no problem, but the guest can't. In addition, many of the drives show up under generic names like `sda` rather than something more unique (serial, UUID, or similar) when I run `zpool status`.
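For context, here is a rough sketch of the kind of by-id passthrough I understand to be the usual approach. It only prints the `qm set` command rather than running it. The VM ID `100`, the slot `scsi1`, the disk ID `ata-EXAMPLE_SERIAL`, and the pool name `tank` are all placeholders, not my real values, and `passthrough_cmd` is just a made-up helper for illustration.

```shell
# Dry-run sketch: prints the command instead of executing it.
# All IDs below are placeholders (assumptions), not taken from my setup.
passthrough_cmd() {
    # $1 = VM ID, $2 = bus/slot (e.g. scsi1), $3 = entry under /dev/disk/by-id
    printf 'qm set %s -%s /dev/disk/by-id/%s\n' "$1" "$2" "$3"
}

# One call per physical disk, each with its own slot.
passthrough_cmd 100 scsi1 ata-EXAMPLE_SERIAL

# Inside the guest, re-importing with stable names instead of sdX
# would presumably look like this ("tank" is a placeholder pool name):
#   zpool export tank
#   zpool import -d /dev/disk/by-id tank
```

I'm not sure whether the by-id names the guest sees match the ones on the host, so treat the guest-side part as an assumption.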

2. How can I convert a ZFS pool that was originally managed by a bare-metal install into one that is Proxmox-managed?

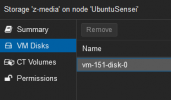

I can import the array on the host, which is fine. But none of my VMs or containers can use it, because it does not show up in the GUI.
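From what I have read, registering an already-imported pool as a storage might be done with `pvesm add zfspool`, but I have not confirmed this is the right path for a pool created elsewhere. A dry-run sketch of what I think that would look like follows. The storage ID `tank-storage` and pool name `tank` are placeholders, and `register_pool_cmd` is a hypothetical helper that only prints the command.

```shell
# Dry-run sketch: prints the command instead of executing it.
# Storage ID and pool name are placeholders (assumptions).
register_pool_cmd() {
    # $1 = storage ID to show in the GUI, $2 = existing ZFS pool or dataset
    printf 'pvesm add zfspool %s --pool %s --content images,rootdir\n' "$1" "$2"
}

register_pool_cmd tank-storage tank
```

If that is correct, I would expect the pool to appear as a storage for VM disks (`images`) and container volumes (`rootdir`), but I'd appreciate confirmation.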
