I have been trying to debug a weird issue with a secondary NIC + bridge. I use a Mellanox ConnectX-3 10Gbit network card for the secondary NIC.

I am trying to set up two vmbrX bridges pointing to two different NICs, each on a different subnet. To make debugging easier, the VLANs are handled at the switch.

I have the following network config:

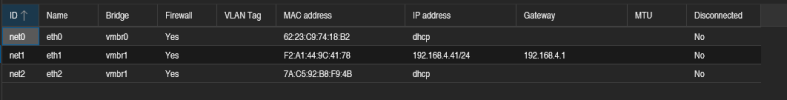

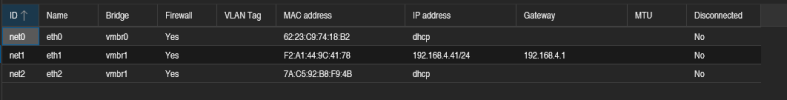



```
root@z77x:~# cat /etc/network/interfaces
auto lo
iface lo inet loopback

auto enp2s0
iface enp2s0 inet manual
#Mellanox

iface enp2s0v0 inet manual

iface enp2s0v1 inet manual

iface enp2s0v2 inet manual

iface enp2s0v3 inet manual

auto enp0s31f6
iface enp0s31f6 inet manual
#Onboard

auto vmbr0
iface vmbr0 inet static
        address 192.168.1.11/24
        gateway 192.168.1.1
        bridge-ports enp0s31f6
        bridge-stp off
        bridge-fd 0
        bridge-vlan-aware yes
        bridge-vids 2-4094
#Default Ethernet

auto vmbr1
iface vmbr1 inet static
        address 192.168.4.2/24
        bridge-ports enp2s0
        bridge-stp off
        bridge-fd 0
#ConnectX-3
```

My LXC container I use for testing has the following network configuration.

The first problem I ran into was that DHCP wouldn't work, so I tried the configuration above, adding two interfaces to the LXC container: one static and one with DHCP enabled.

- eth0: works as expected
- eth1: has weird packet loss (I think this is related to why DHCP on eth2 won't work)
- eth2: won't get a DHCP address

```
Gateway(192.168.4.1) - Switch - enp2s0 - vmbr1(192.168.4.2) - VM/LXC(192.168.4.41)
                       - Computer(192.168.4.180)
```

I tried the following scenarios (ping plus tcpdump along the way):

1. Gateway -> WebGUI (192.168.4.2): works
2. Gateway -> Container (192.168.4.41): nothing, the ping output just stays empty
3. Computer -> WebGUI: works
4. Computer -> Container: same as #2
5. Container -> WebGUI: ping works (had to disable the firewall)
6. Container -> Gateway: same as #2
7. Container -> Computer: same as #2
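To make the pattern in these results easier to see, here is a small Python sketch that encodes the seven scenarios exactly as listed and checks which pairs fail. The `EXTERNAL` grouping (hosts on the switch side of enp2s0) and the helper name are my own labels for illustration, not anything from Proxmox.

```python
# Ping results from the seven scenarios: (source, destination) -> reply received?
RESULTS = {
    ("Gateway", "WebGUI"): True,       # 1
    ("Gateway", "Container"): False,   # 2
    ("Computer", "WebGUI"): True,      # 3
    ("Computer", "Container"): False,  # 4
    ("Container", "WebGUI"): True,     # 5 (host firewall disabled)
    ("Container", "Gateway"): False,   # 6
    ("Container", "Computer"): False,  # 7
}

# Hypothetical grouping: hosts that sit on the switch side of enp2s0.
EXTERNAL = {"Gateway", "Computer"}


def container_external_pairs(results):
    """Pairs with the container on one end and a switch-side host on the other."""
    return {
        (src, dst)
        for (src, dst) in results
        if "Container" in (src, dst) and EXTERNAL & {src, dst}
    }


failed = {pair for pair, ok in RESULTS.items() if not ok}

# Compare the set of failures with the set of container <-> switch-side pairs.
print(failed == container_external_pairs(RESULTS))
```

This prints `True`. The WebGUI (192.168.4.2, the host's own address on vmbr1) answers from both sides, so the failures line up exactly with the pairs that have the container on one end and a switch-side host on the other.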

Capture command used on each interface: `tcpdump -ni <interface> icmp`

Tcpdump scenario #2 & #4:

- gateway/computer: request packets
- enp2s0: no packets enter
- vmbr1: no packets enter

Tcpdump scenario #6 & #7:

- vmbr1: request packets
- enp2s0: request packets
- gateway/computer: request packets
- gateway/computer: response packets
- enp2s0: nothing
- vmbr1: nothing
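Laying the captures out as paths makes it easier to see where packets stop showing up. This is a small Python sketch: the capture points and observations are copied from the lists above (the second capture, the one that starts at vmbr1, is the container-originated ping), and `first_missing` is just an illustrative helper.

```python
# tcpdump observations in path order: (capture point, packets seen there?)
INBOUND = [  # gateway/computer -> container, echo requests
    ("gateway/computer", True),
    ("enp2s0", False),
    ("vmbr1", False),
]
OUTBOUND_REQUEST = [  # container -> gateway/computer, echo requests
    ("vmbr1", True),
    ("enp2s0", True),
    ("gateway/computer", True),
]
OUTBOUND_REPLY = [  # echo replies travelling back towards the container
    ("gateway/computer", True),
    ("enp2s0", False),
    ("vmbr1", False),
]


def first_missing(path):
    """Return the first capture point where packets are no longer seen, or None."""
    for point, seen in path:
        if not seen:
            return point
    return None


for name, path in [("inbound", INBOUND),
                   ("outbound request", OUTBOUND_REQUEST),
                   ("outbound reply", OUTBOUND_REPLY)]:
    print(f"{name}: {first_missing(path)}")
```

Both legs travelling towards the container (`inbound` and `outbound reply`) report `enp2s0`, while the container's own requests are seen at every capture point (`None`).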

My first guess was that there was something wrong with the network/subnet, but if that were the case, the WebGUI would not be reachable either, and it is.

That would suggest something is wrong with the NIC or the bridge, but again, the WebGUI on that bridge works fine.

I have used this setup on different hardware, where it works without a problem, so this one has me quite confused.

I hope someone can shed some light on this weird issue.

```
root@z77x:~# ethtool enp2s0
Settings for enp2s0:
        Supported ports: [ FIBRE ]
        Supported link modes:   1000baseX/Full
                                10000baseCR/Full
                                10000baseSR/Full
        Supported pause frame use: Symmetric Receive-only
        Supports auto-negotiation: No
        Supported FEC modes: Not reported
        Advertised link modes:  1000baseX/Full
                                10000baseCR/Full
                                10000baseSR/Full
        Advertised pause frame use: Symmetric
        Advertised auto-negotiation: No
        Advertised FEC modes: Not reported
        Speed: 10000Mb/s
        Duplex: Full
        Auto-negotiation: off
        Port: FIBRE
        PHYAD: 0
        Transceiver: internal
        Supports Wake-on: d
        Wake-on: d
        Current message level: 0x00000014 (20)
                               link ifdown
        Link detected: yes
```

```
root@z77x:~# ethtool -i enp2s0
driver: mlx4_en
version: 4.0-0
firmware-version: 2.40.5030
expansion-rom-version:
bus-info: 0000:02:00.0
supports-statistics: yes
supports-test: yes
supports-eeprom-access: no
supports-register-dump: no
supports-priv-flags: yes
```
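Since the same setup works on other hardware, one thing worth lining up is the driver and firmware that `ethtool -i` reports on each machine. A minimal Python sketch, assuming each machine's output is pasted in as text; `parse_ethtool_kv`, `ethtool_info` and `diff_info` are hypothetical helper names, and `ethtool_info` simply shells out to `ethtool -i` on the local machine.

```python
import subprocess


def parse_ethtool_kv(text):
    """Parse 'key: value' lines, as printed by `ethtool -i`, into a dict."""
    info = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")  # split on the first colon only
        if sep:
            info[key.strip()] = value.strip()
    return info


def ethtool_info(iface):
    """Run `ethtool -i IFACE` on this machine and parse the result."""
    out = subprocess.run(["ethtool", "-i", iface], capture_output=True,
                         text=True, check=True).stdout
    return parse_ethtool_kv(out)


def diff_info(a, b):
    """Keys whose values differ between two parsed outputs: key -> (a, b)."""
    return {k: (a.get(k), b.get(k))
            for k in sorted(a.keys() | b.keys()) if a.get(k) != b.get(k)}


# Output from this host (z77x), copied from the listing above.
Z77X = parse_ethtool_kv("""driver: mlx4_en
version: 4.0-0
firmware-version: 2.40.5030
expansion-rom-version:
bus-info: 0000:02:00.0
""")
print(Z77X["driver"], Z77X["firmware-version"])
```

Splitting on the first colon only keeps values such as `bus-info: 0000:02:00.0` intact. Passing the parsed output from the working machine as the second argument to `diff_info` lists only the fields that differ.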

```
root@z77x:~# ethtool -k enp2s0
Features for enp2s0:
rx-checksumming: on
tx-checksumming: on
        tx-checksum-ipv4: on
        tx-checksum-ip-generic: off [fixed]
        tx-checksum-ipv6: on
        tx-checksum-fcoe-crc: off [fixed]
        tx-checksum-sctp: off [fixed]
scatter-gather: on
        tx-scatter-gather: on
        tx-scatter-gather-fraglist: off [fixed]
tcp-segmentation-offload: on
        tx-tcp-segmentation: on
        tx-tcp-ecn-segmentation: off [fixed]
        tx-tcp-mangleid-segmentation: off
        tx-tcp6-segmentation: on
generic-segmentation-offload: on
generic-receive-offload: on
large-receive-offload: off [fixed]
rx-vlan-offload: on
tx-vlan-offload: on
ntuple-filters: off [fixed]
receive-hashing: on
highdma: on [fixed]
rx-vlan-filter: on [fixed]
vlan-challenged: off [fixed]
tx-lockless: off [fixed]
netns-local: off [fixed]
tx-gso-robust: off [fixed]
tx-fcoe-segmentation: off [fixed]
tx-gre-segmentation: off [fixed]
tx-gre-csum-segmentation: off [fixed]
tx-ipxip4-segmentation: off [fixed]
tx-ipxip6-segmentation: off [fixed]
tx-udp_tnl-segmentation: off [fixed]
tx-udp_tnl-csum-segmentation: off [fixed]
tx-gso-partial: off [fixed]
tx-tunnel-remcsum-segmentation: off [fixed]
tx-sctp-segmentation: off [fixed]
tx-esp-segmentation: off [fixed]
tx-udp-segmentation: off [fixed]
tx-gso-list: off [fixed]
fcoe-mtu: off [fixed]
tx-nocache-copy: off
loopback: off
rx-fcs: off
rx-all: off [fixed]
tx-vlan-stag-hw-insert: off
rx-vlan-stag-hw-parse: on
rx-vlan-stag-filter: on [fixed]
l2-fwd-offload: off [fixed]
hw-tc-offload: off [fixed]
esp-hw-offload: off [fixed]
esp-tx-csum-hw-offload: off [fixed]
rx-udp_tunnel-port-offload: off [fixed]
tls-hw-tx-offload: off [fixed]
tls-hw-rx-offload: off [fixed]
rx-gro-hw: off [fixed]
tls-hw-record: off [fixed]
rx-gro-list: off
macsec-hw-offload: off [fixed]
rx-udp-gro-forwarding: off
hsr-tag-ins-offload: off [fixed]
hsr-tag-rm-offload: off [fixed]
hsr-fwd-offload: off [fixed]
hsr-dup-offload: off [fixed]
```

```
root@z77x:~# pveversion -v
proxmox-ve: 8.0.2 (running kernel: 6.2.16-6-pve)
pve-manager: 8.0.4 (running version: 8.0.4/d258a813cfa6b390)
proxmox-kernel-helper: 8.0.3
pve-kernel-5.15: 7.4-4
pve-kernel-5.13: 7.1-9
proxmox-kernel-6.2.16-6-pve: 6.2.16-7
proxmox-kernel-6.2: 6.2.16-7
pve-kernel-5.15.108-1-pve: 5.15.108-2
pve-kernel-5.15.74-1-pve: 5.15.74-1
pve-kernel-5.13.19-6-pve: 5.13.19-15
pve-kernel-5.13.19-2-pve: 5.13.19-4
ceph-fuse: 16.2.11+ds-2
corosync: 3.1.7-pve3
criu: 3.17.1-2
glusterfs-client: 10.3-5
ifupdown2: 3.2.0-1+pmx3
ksm-control-daemon: 1.4-1
libjs-extjs: 7.0.0-3
libknet1: 1.25-pve1
libproxmox-acme-perl: 1.4.6
libproxmox-backup-qemu0: 1.4.0
libproxmox-rs-perl: 0.3.1
libpve-access-control: 8.0.4
libpve-apiclient-perl: 3.3.1
libpve-common-perl: 8.0.7
libpve-guest-common-perl: 5.0.4
libpve-http-server-perl: 5.0.4
libpve-rs-perl: 0.8.5
libpve-storage-perl: 8.0.2
libspice-server1: 0.15.1-1
lvm2: 2.03.16-2
lxc-pve: 5.0.2-4
lxcfs: 5.0.3-pve3
novnc-pve: 1.4.0-2
proxmox-backup-client: 3.0.2-1
proxmox-backup-file-restore: 3.0.2-1
proxmox-kernel-helper: 8.0.3
proxmox-mail-forward: 0.2.0
proxmox-mini-journalreader: 1.4.0
proxmox-offline-mirror-helper: 0.6.2
proxmox-widget-toolkit: 4.0.6
pve-cluster: 8.0.3
pve-container: 5.0.4
pve-docs: 8.0.4
pve-edk2-firmware: 3.20230228-4
pve-firewall: 5.0.3
pve-firmware: 3.7-1
pve-ha-manager: 4.0.2
pve-i18n: 3.0.5
pve-qemu-kvm: 8.0.2-4
pve-xtermjs: 4.16.0-3
qemu-server: 8.0.6
smartmontools: 7.3-pve1
spiceterm: 3.3.0
swtpm: 0.8.0+pve1
vncterm: 1.8.0
zfsutils-linux: 2.1.12-pve1
```
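To pick the VLAN-related offloads out of the long `ethtool -k enp2s0` listing above, here is a minimal Python sketch. `parse_features` is a hypothetical helper; it assumes the standard `name: on|off [flag]` line format and skips anything else, such as the shell prompt line or the `Features for ...` header. A `[fixed]` flag means the driver does not allow that feature to be changed.

```python
def parse_features(text):
    """Parse `ethtool -k` output into {feature: (enabled, fixed)}."""
    features = {}
    for raw in text.splitlines():
        line = raw.strip()  # sub-features are indented under their parent
        name, sep, rest = line.partition(":")
        if not sep:
            continue
        state, _, flag = rest.strip().partition(" ")
        if state not in ("on", "off"):
            continue  # header, prompt line or anything else that is not a feature
        # "[fixed]" marks a feature the driver does not allow to be changed.
        features[name] = (state == "on", flag == "[fixed]")
    return features


# Excerpt of the enp2s0 output above.
SAMPLE = """Features for enp2s0:
rx-checksumming: on
rx-vlan-offload: on
tx-vlan-offload: on
rx-vlan-filter: on [fixed]
tx-vlan-stag-hw-insert: off
rx-vlan-stag-hw-parse: on
rx-vlan-stag-filter: on [fixed]
"""

feats = parse_features(SAMPLE)
vlan_on = [n for n, (on, _) in feats.items() if "vlan" in n and on]
locked_on = [n for n, (on, fixed) in feats.items() if on and fixed]
print("VLAN features on:", vlan_on)
print("on and fixed:", locked_on)
```

On this excerpt, `rx-vlan-filter` and `rx-vlan-stag-filter` are the features that are both enabled and fixed.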
