#!/bin/bash

# Proxmox VE calls the hookscript with: $1 = VMID, $2 = phase (pre-start, post-start, pre-stop, post-stop)
VMID=$1
PHASE=$2
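
# Attaching the hookscript (assumed setup; "arc-hook.sh" is only an example file name):
#   The script must live on a storage with the "snippets" content type enabled, e.g.
#   /var/lib/vz/snippets/arc-hook.sh on the default "local" storage, and be executable:
#     chmod +x /var/lib/vz/snippets/arc-hook.sh
#     qm set <VMID> --hookscript local:snippets/arc-hook.sh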

# PCI addresses (domain:bus:device.function) from lspci
GPU="0000:03:00.0"   # VGA controller
AUDIO="0000:04:00.0" # Audio device
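# To look these up on your own host (lspci prints IDs as [8086:56a1]; the *_ID
# variables below use a space instead of the colon):
#   lspci -nn | grep -Ei 'vga|audio'   # PCI addresses plus [vendor:device] IDs
#   lspci -nnk -s 03:00.0              # also shows "Kernel driver in use"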

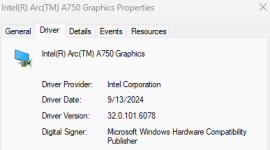

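# Vendor and device IDs, space-separated as vfio-pci's new_id/remove_id expect
GPU_ID="8086 56a1"   # GPU
AUDIO_ID="8086 4f90" # Audio

# Drivers that own the devices on the host while the VM is not running
HOST_VGA_DRIVER="xe"
HOST_AUDIO_DRIVER="snd_hda_intel"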

if [ "$PHASE" = "pre-start" ]; then
    # Before VM start: detach the devices from their host drivers and hand them to vfio-pci
    echo "Detaching GPU $GPU and audio $AUDIO from host drivers..."

    # Unbind GPU and audio from whatever driver currently owns them, if any
    # (checking first avoids an error when a device is already unbound)
    for DEV in "$GPU" "$AUDIO"; do
        if [ -e /sys/bus/pci/devices/"$DEV"/driver ]; then
            echo "$DEV" > /sys/bus/pci/devices/"$DEV"/driver/unbind
        fi
    done

    # Register the vendor:device IDs with vfio-pci so it claims the now-unbound devices
    echo "$GPU_ID" > /sys/bus/pci/drivers/vfio-pci/new_id 2>/dev/null
    echo "$AUDIO_ID" > /sys/bus/pci/drivers/vfio-pci/new_id 2>/dev/null

    echo "GPU bound to VFIO for VM $VMID."

    # Writing an empty value to reset_method disables all PCI reset methods for the device
    echo "Arc hook: Disabling reset methods for $GPU and $AUDIO"
    echo > /sys/bus/pci/devices/"$GPU"/reset_method
    echo > /sys/bus/pci/devices/"$AUDIO"/reset_method
fi

if [ "$PHASE" = "post-stop" ]; then
    # After VM shutdown: unbind from vfio-pci and rebind to the host drivers
    echo "Rebinding GPU $GPU and audio $AUDIO to host drivers..."

    # Unbind from vfio-pci
    echo "$GPU" > /sys/bus/pci/drivers/vfio-pci/unbind 2>/dev/null
    echo "$AUDIO" > /sys/bus/pci/drivers/vfio-pci/unbind 2>/dev/null

    # Remove the dynamic IDs so vfio-pci does not claim these devices again on a later probe
    echo "$GPU_ID" > /sys/bus/pci/drivers/vfio-pci/remove_id 2>/dev/null
    echo "$AUDIO_ID" > /sys/bus/pci/drivers/vfio-pci/remove_id 2>/dev/null

    # Removing the devices and rescanning the PCI bus turned out to be unnecessary; kept for reference
    #echo 1 > /sys/bus/pci/devices/"$GPU"/remove 2>/dev/null
    #echo 1 > /sys/bus/pci/devices/"$AUDIO"/remove 2>/dev/null
    #echo 1 > /sys/bus/pci/rescan

    # Rebind to the host drivers
    echo "$GPU" > /sys/bus/pci/drivers/"$HOST_VGA_DRIVER"/bind 2>/dev/null
    echo "$AUDIO" > /sys/bus/pci/drivers/"$HOST_AUDIO_DRIVER"/bind 2>/dev/null

    echo "GPU rebound to host for VM $VMID."
fi
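
# Manual test without starting a VM (the path and VMID 100 are placeholders):
#   /path/to/this-script.sh 100 pre-start; lspci -nnk -s 03:00.0   # expect "Kernel driver in use: vfio-pci"
#   /path/to/this-script.sh 100 post-stop; lspci -nnk -s 03:00.0   # expect the driver set in HOST_VGA_DRIVER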