I have a Proxmox server equipped with four NVIDIA H200 NVL GPUs.

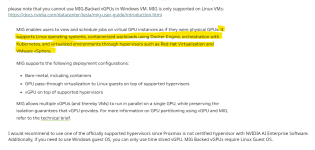

Is it possible to allocate GPU resources to individual VMs in a vGPU-like manner?

If so, are there any documented methods or successful real-world cases of this configuration?
