Hi all.

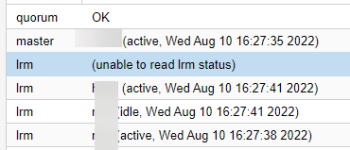

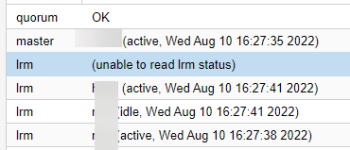

I previously removed one node from the cluster, but we recently noticed that HA is reporting an error ("unable to read lrm status").

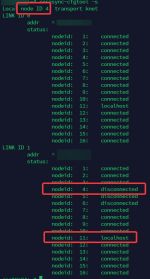

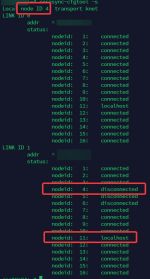

When I checked corosync, I noticed that some nodes misdetect their own node ID. For example, the node with ID 04 has corosync receiving ID 11 instead, and as a result node 04 shows as disconnected.

This misdetection of the local node's information only happens on some nodes, and I think it is the cause of the HA failure. Is there any way to fix this?