Hi everybody,

I am a new Proxmox user!

I am getting strangely bad disk performance in a Windows VM on Proxmox compared with a fresh native Windows install.

My config:

RAID config:

- RAID 10 on a MegaRAID SAS 9480-8i8e (hardware RAID)
- 1 virtual drive, 24 physical drives
- Used 21.826 TB of 21.826 TB available
- 24x ST2000NM0001
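As a quick sanity check on the reported size: RAID 10 mirrors pairs of drives, so 24 drives at the nominal 2 TB of the ST2000NM0001 give 48 TB raw and 24 TB usable. The sketch below assumes equal-size drives and ignores any space the controller reserves. It shows that 24 TB (decimal) is about 21.83 TiB, so the "21.826 TB" figure is most likely a binary (TiB) value.

```python
# Sketch: usable capacity of a RAID 10 array, assuming equal-size drives
# and no controller overhead (a real controller may reserve a little space).

TIB = 2**40  # bytes in one tebibyte (the binary "TB" many tools display)


def raid10_usable_bytes(drive_count: int, drive_bytes: float) -> float:
    """RAID 10 mirrors pairs of drives, so half the raw capacity is usable."""
    if drive_count < 4 or drive_count % 2:
        raise ValueError("RAID 10 needs an even number of drives, at least 4")
    return drive_count * drive_bytes / 2


usable = raid10_usable_bytes(24, 2e12)  # 24 x 2 TB (decimal) drives
print(f"{usable / 1e12:.1f} TB decimal = {usable / TIB:.2f} TiB")
# prints: 24.0 TB decimal = 21.83 TiB
```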

I do a lot of different tests and get results with proxmox VM slower x5-6 times

for example

native windows

READ 6600MB/s

WRITE 6600MB/s

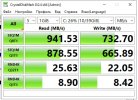

proxmox VM windows

READ 940MB/s

WRITE 730MB/s
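Taking the example figures at face value, the slowdown is native throughput divided by VM throughput. The short sketch below does that arithmetic; for these particular numbers the gap works out to about 7x for reads and 9x for writes, which is larger than the 5-6x figure quoted above.

```python
# Slowdown factor = native throughput / VM throughput, using the
# example numbers reported above (MB/s).
native = {"read": 6600, "write": 6600}
vm = {"read": 940, "write": 730}


def slowdown(native_mbps: float, vm_mbps: float) -> float:
    """How many times slower the VM is than native."""
    return native_mbps / vm_mbps


for op in ("read", "write"):
    print(f"{op}: {slowdown(native[op], vm[op]):.1f}x slower in the VM")
# prints:
# read: 7.0x slower in the VM
# write: 9.0x slower in the VM
```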

Can somebody help me? I have no idea why this happens or how I can solve it.


Here is the full host and VM configuration:

```
root@proxmox:~# qm config 100
agent: 1
boot: order=sata0;ide2;net0
cores: 8
cpu: x86-64-v2-AES
ide2: local:iso/Windows_Server_2019.iso,media=cdrom,size=5146960K
machine: pc-i440fx-8.0
memory: 8192
meta: creation-qemu=8.0.2,ctime=1690304691
name: win
net0: e1000=AA:1E:96:C2:A7:31,bridge=vmbr0,firewall=1
numa: 0
ostype: win10
sata0: my_cat:100/vm-100-disk-0.qcow2,backup=0,cache=writeback,size=40G,ssd=1
sata1: my_cat:100/vm-100-disk-1.qcow2,backup=0,cache=writeback,iops_rd=6000,iops_rd_max=6000,iops_wr=6000,iops_wr_max=6000,mbps_rd=6000,mbps_rd_max=6000,mbps_wr=6000,mbps_wr_max=6000,size=12G,ssd=1
scsihw: virtio-scsi-single
smbios1: uuid=15aa7e8f-06a2-453d-a25f-ae976cae8062
sockets: 1
vmgenid: 2e0e030b-f401-4b95-8277-43059f24ee4d
```
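Apart from volume and size, the two SATA disks in this config differ only in the I/O limit options on `sata1`. In Proxmox, `iops_rd`/`iops_wr` cap operations per second and `mbps_rd`/`mbps_wr` cap throughput in MB/s; the `_max` variants configure the unthrottled burst pool. To make such differences easy to spot, here is a small hypothetical helper (`parse_disk_line` is not a Proxmox tool). It splits a `qm config` disk line into its volume and options, assuming the first comma-separated field is the volume and no option value contains a comma.

```python
# Hypothetical helper: split a `qm config` disk line into name, volume and
# key=value options. Assumes the simple format seen in the config above.


def parse_disk_line(line: str) -> tuple[str, str, dict[str, str]]:
    name, _, spec = line.partition(": ")
    volume, *pairs = spec.split(",")
    options = dict(pair.split("=", 1) for pair in pairs)
    return name, volume, options


sata0 = "sata0: my_cat:100/vm-100-disk-0.qcow2,backup=0,cache=writeback,size=40G,ssd=1"
sata1 = ("sata1: my_cat:100/vm-100-disk-1.qcow2,backup=0,cache=writeback,"
         "iops_rd=6000,iops_rd_max=6000,iops_wr=6000,iops_wr_max=6000,"
         "mbps_rd=6000,mbps_rd_max=6000,mbps_wr=6000,mbps_wr_max=6000,size=12G,ssd=1")

_, _, opts0 = parse_disk_line(sata0)
name, volume, opts1 = parse_disk_line(sata1)

# Options present on sata1 but absent from sata0 (here: the I/O limits)
extra = {k: v for k, v in opts1.items() if k not in opts0}
print(name, volume)
for key, value in sorted(extra.items()):
    print(f"  {key}={value}")
```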

```
root@proxmox:~# pveversion -v
proxmox-ve: 8.0.1 (running kernel: 6.2.16-3-pve)
pve-manager: 8.0.3 (running version: 8.0.3/bbf3993334bfa916)
pve-kernel-6.2: 8.0.2
pve-kernel-6.2.16-3-pve: 6.2.16-3
ceph-fuse: 17.2.6-pve1+3
corosync: 3.1.7-pve3
criu: 3.17.1-2
glusterfs-client: 10.3-5
ifupdown2: 3.2.0-1+pmx2
ksm-control-daemon: 1.4-1
libjs-extjs: 7.0.0-3
libknet1: 1.25-pve1
libproxmox-acme-perl: 1.4.6
libproxmox-backup-qemu0: 1.4.0
libproxmox-rs-perl: 0.3.0
libpve-access-control: 8.0.3
libpve-apiclient-perl: 3.3.1
libpve-common-perl: 8.0.5
libpve-guest-common-perl: 5.0.3
libpve-http-server-perl: 5.0.3
libpve-rs-perl: 0.8.3
libpve-storage-perl: 8.0.1
libspice-server1: 0.15.1-1
lvm2: 2.03.16-2
lxc-pve: 5.0.2-4
lxcfs: 5.0.3-pve3
novnc-pve: 1.4.0-2
proxmox-backup-client: 2.99.0-1
proxmox-backup-file-restore: 2.99.0-1
proxmox-kernel-helper: 8.0.2
proxmox-mail-forward: 0.1.1-1
proxmox-mini-journalreader: 1.4.0
proxmox-widget-toolkit: 4.0.5
pve-cluster: 8.0.1
pve-container: 5.0.3
pve-docs: 8.0.3
pve-edk2-firmware: 3.20230228-4
pve-firewall: 5.0.2
pve-firmware: 3.7-1
pve-ha-manager: 4.0.2
pve-i18n: 3.0.4
pve-qemu-kvm: 8.0.2-3
pve-xtermjs: 4.16.0-3
qemu-server: 8.0.6
smartmontools: 7.3-pve1
spiceterm: 3.3.0
swtpm: 0.8.0+pve1
vncterm: 1.8.0
zfsutils-linux: 2.1.12-pve1
```

```
root@proxmox:~# numactl --hardware
available: 2 nodes (0-1)
node 0 cpus: 0 1 2 3 4 5 6 7 16 17 18 19 20 21 22 23
node 0 size: 48276 MB
node 0 free: 47293 MB
node 1 cpus: 8 9 10 11 12 13 14 15 24 25 26 27 28 29 30 31
node 1 size: 48320 MB
node 1 free: 46715 MB
node distances:
node   0   1
  0:  10  20
  1:  20  10
```

```
root@proxmox:~# lscpu
Architecture: x86_64
CPU op-mode(s): 32-bit, 64-bit
Address sizes: 46 bits physical, 48 bits virtual
Byte Order: Little Endian
CPU(s): 32
On-line CPU(s) list: 0-31
Vendor ID: GenuineIntel
BIOS Vendor ID: Intel
Model name: Intel(R) Xeon(R) CPU E5-2670 0 @ 2.60GHz
BIOS Model name: Intel(R) Xeon(R) CPU E5-2670 0 @ 2.60GHz CPU @ 2.6GHz
BIOS CPU family: 179
CPU family: 6
Model: 45
Thread(s) per core: 2
Core(s) per socket: 8
Socket(s): 2
Stepping: 7
CPU(s) scaling MHz: 96%
CPU max MHz: 3300.0000
CPU min MHz: 1200.0000
BogoMIPS: 5187.48
Flags: fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse sse2 ss ht tm pbe syscall nx pdpe1gb rdtscp lm constant_tsc arch_perfmon pebs bts rep_good nopl xtopology nonstop_tsc cpuid aperfmperf pni pclmulqdq dtes64 monitor ds_cpl vmx smx est tm2 ssse3 cx16 xtpr pdcm pcid dca sse4_1 sse4_2 x2apic popcnt tsc_deadline_timer aes xsave avx lahf_lm epb pti ssbd ibrs ibpb stibp tpr_shadow vnmi flexpriority ept vpid xsaveopt dtherm ida arat pln pts md_clear flush_l1d
Virtualization features:
  Virtualization: VT-x
Caches (sum of all):
  L1d: 512 KiB (16 instances)
  L1i: 512 KiB (16 instances)
  L2: 4 MiB (16 instances)
  L3: 40 MiB (2 instances)
NUMA:
  NUMA node(s): 2
  NUMA node0 CPU(s): 0-7,16-23
  NUMA node1 CPU(s): 8-15,24-31
Vulnerabilities:
  Itlb multihit: KVM: Mitigation: VMX disabled
  L1tf: Mitigation; PTE Inversion; VMX conditional cache flushes, SMT vulnerable
  Mds: Mitigation; Clear CPU buffers; SMT vulnerable
  Meltdown: Mitigation; PTI
  Mmio stale data: Unknown: No mitigations
  Retbleed: Not affected
  Spec store bypass: Mitigation; Speculative Store Bypass disabled via prctl
  Spectre v1: Mitigation; usercopy/swapgs barriers and __user pointer sanitization
  Spectre v2: Mitigation; Retpolines, IBPB conditional, IBRS_FW, STIBP conditional, RSB filling, PBRSB-eIBRS Not affected
  Srbds: Not affected
  Tsx async abort: Not affected
```

```
root@proxmox:~# lspci | grep RAID
02:00.0 RAID bus controller: Hewlett-Packard Company Smart Array Gen8 Controllers (rev 01)
04:00.0 RAID bus controller: Broadcom / LSI MegaRAID Tri-Mode SAS3516 (rev 01) // Controller ID: 0 AVAGO MegaRAID SAS 9480-8i8e 0x500062b204c3f5c0
```
