We have 2 Proxmox hosts running around 20 VMs, all on Windows 11, and we want to use WSL2, which requires virtualization support.

When I enable nested virtualization as a flag in the VM's hardware config, it doesn't work (Windows shows no virtualization feature enabled). When I set the CPU type to host, users report that the system gets extremely laggy (which I was able to confirm myself).

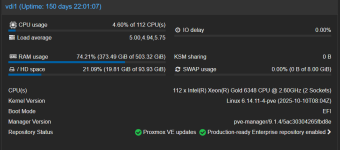

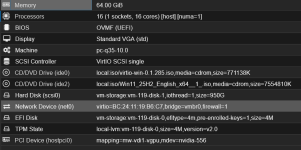

I attached screenshots of the summary tab of one of the hosts and the hardware tab of one VM.

We use NVIDIA vGPUs, which is why I pinned kernel 6.14.

Storage is a RAID 5 of SSDs (hardware RAID controller).

Does anyone have suggestions for what I can do differently, or is this a known issue?

P.S. The VM config in the screenshot is shown as it currently is, with no virtualization enabled.
