Latest activity

-

harunk replied to the thread [SOLVED] S3 Hetzner Garbage collect fails with `error reading a body from connection`. Sorry for the mix-up! Those posts were created yesterday, but I didn't see them in my feed. This morning I checked the forum again to make sure these posts were not visible before creating a new one.

-

gargakumar reacted to fabian's post in the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription with Like.

yes, please open a new thread (feel free to mention @ me) and include a journal of the working kernel and details about your setup and hardware, in particular where your rootfs is stored ;)

-

fiona reacted to harrydus's post in the thread LXC Container do not start after latest Kernel Upgrade to 7.0.2-2 with Like.

It seems that there is a race condition between systemd-tmpfiles and several ZFS-related services. We are using ZFS on LUKS and a systemd service to unlock the LUKS partitions. Curiously, this didn't happen before. I'll try to fix this.

-

gargakumar posted the thread [solved] Kernel Panic when upgrading from pve 9.1.6 to 9.1.9 (kernel 7.0.x) - amdgpu drivers in Proxmox VE: Installation and configuration. As discussed on the 7.0 kernel thread, I'm opening a new thread to investigate the issue. Yesterday I updated PVE from 9.1.6 to 9.1.9, which installed a 7.0.x kernel. However, on reboot I got a Kernel Panic: unable to mount root fs on...

-

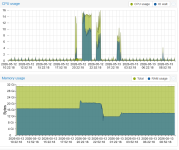

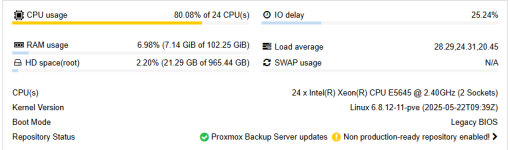

harunk replied to the thread S3 Hetzner failures with Gargbage collection. The PBS is a standalone machine and, according to the statistics page (see image below), there are no memory constraints. The S3 garbage collection runs at 2:30 every day, and here is the system summary from the Server administration page. Both CPU and...

-

fiona replied to the thread Secure Boot – Microsoft UEFI CA 2023 Certificate Not Included in EFI Disk. Hi @r.wegmann, the second orange line just means that the change cannot be applied immediately while the VM is running. It will be applied the next time the VM is started or rebooted from the UI (a reboot within the VM is not enough). If the...

-

wagnbeu0 replied to the thread Host freezing requiring physical reboot. Hi, I'm having the same behaviour on my machine: Lenovo ThinkCentre 715, 64 GB RAM. When running kernel Linux 6.8.12-13-pve it runs absolutely smoothly; when booting into any other kernel, the system freezes after some minutes or hours. So what was...

-

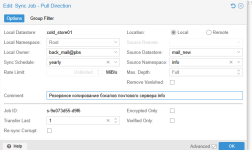

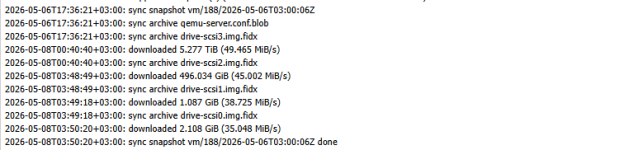

We have an issue: the data transfer rate during sync jobs is extremely slow, 20-50 MB/s. The hard drives used are 3.5" Toshiba MG10-F 22 TB (MG10SFA22TE) SAS 12Gb/s drives, connected via a 12Gb/s HBA. There is no load on them during the data...

-

UdoB reacted to lucavornheder's post in the thread Configering a Cluster with only one node (first) with Like.

Hi, yes, this is absolutely possible. I would do it the same way.

-

UdoB replied to the thread Configering a Cluster with only one node (first). Sure! To check some aspects, usual problems and possible showstoppers, read this forum ;-) and those wiki pages: https://pve.proxmox.com/wiki/Migrate_to_Proxmox_VE https://pve.proxmox.com/wiki/Advanced_Migration_Techniques_to_Proxmox_VE Good...

-

RolandK replied to the thread Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test and no-subscription. Also getting these. I have opened https://bugzilla.proxmox.com/show_bug.cgi?id=7585 for it.

-

wagnbeu0 replied to the thread e1000e eno1: Detected Hardware Unit Hang:. @celemine1gig Thank you for the update. I know that this problem occurs primarily with Intel NICs, but as you can see it happens again and again, so this is why I am also looking for a permanent solution even if I have no Intel NIC. Maybe the...

-

SteveITS replied to the thread CEPH cluster_network defaults to a single node IP rather than to a subnet. AFAIK that’s normal in PVE. And equal?

-

fiona replied to the thread Live Migrations get slower over time. Hi, please share the configuration of an affected VM (`qm config ID`, with the numerical ID of the VM), as well as the output of `pveversion -v` and the full system journal from the source and target nodes, from boot up to and including the problematic...

-

YaZoal replied to the thread Unexpected reboot. Is this node in a cluster? Please share the journalctl log, including ~4 before the reboot was observed, for example: `root@pve ~# journalctl --since "2026-05-09 00:00" --until "2026-05-09 12:00" | gzip > /tmp/$(hostname)-syslog.txt.gz` For a cluster, it is...

-

sachinh replied to the thread proxmox (VSphere VMWare to Proxmox migration). Thanks for the reply. Changing the Hostbus is the obvious thing to try, as the same problem was resolved with a newer Linux version by changing the Hostbus to IDE, as I mentioned in my first post. But that solution is not working here anymore. Actually...

-

mkevenaar replied to the thread Proxmox with 48 nodes. 3 nodes (HPE DL360 Gen10). On-board 1GE link to oob/mgmt switch for "link 0". Two 2-port 25/10GE NICs with 1 port each in an LACP (802.3ad) bond to Nexus switches (in a VPC setup) with 10GE DAC cables, 802.1q. "link 1" is running on an SDN VLAN...

-

We are currently setting up two separate PVE clusters. Both are HCI clusters running Ceph. The servers are distributed across two colocation facilities in the same city, connected via a low-latency data centre interconnect. One cluster consists...

-

mariol replied to the thread [SOLVED] Super slow, timeout, and VM stuck while backing up, after updated to PVE 9.1.1 and PBS 4.0.20. Kernel 7.0.0-3-pve is also working fine with this.

-

lucavornheder replied to the thread Configering a Cluster with only one node (first). Hi, yes, this is absolutely possible. I would do it the same way.