Latest activity

-

Tightrope2718 replied to the thread Unable to get Arc Pro B50 to function. Thanks for your suggestions. I forgot to add that the failure of the B50 isn't occurring when the VM starts up. I have had the VM completely disabled. My goal was to do passthrough (only one VM needs the GPU), but since vfio wasn't working, I...

-

Axodouble replied to the thread Backups hang reliably when jumbo frames enabled on PBS VM. Sorry for the necro; I spent a bit of time researching this and discovered that this seems to be a known issue, albeit not a very well-known one. For people who are interested in an (albeit hacky) fix, here is a GitHub repo I found that seeks to resolve this...

-

Blueloop replied to the thread Bridge stops forwarding packets over the bond. I think you have answered your own question, i.e. it does look like a bug if you can revert a change and get it working again! However, that does assume that no other changes have happened reasonably recently. I use bonding quite a lot, generally...

-

akanealw reacted to PeterZwgatPX's post in the thread [SOLVED] PBS 4.1: Push Sync Jobs Fail, No Output in Task Viewer & Connection Error with

Like.

I had some time to take a closer look at the problem with the missing logs in Task Viewer. Since I access the GUI of my PBS via a Traefik reverse proxy, I searched for the cause of the error in my Traefik config and found out that the problem...

-

carlitos009 replied to the thread PROXMOX 8.3.0 hangs for no apparent reason. Thank you so much. I will check the hardware now. This is surprising, as it is fairly new.

-

Onslow reacted to portedaix's post in the thread Backup speed regression: 2h20m → 8h39m after PVE 8→9 / QEMU 9.2→10.1 / Ceph 18→19 upgrade with

Like.

Hi, that was a beginner mistake. The real root cause (found 2026-05-06): discard=on was missing from scsi1 in the QEMU config. For over a year, every ext4 TRIM was silently dropped by QEMU and never propagated down to Ceph → 2.7 TiB of orphaned RBD...
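A disk line without discard=on is easy to miss by eye when a VM has several disks. Below is a minimal Python sketch that lists such disks, assuming the usual Proxmox VE config layout (`/etc/pve/qemu-server/<vmid>.conf`, one `key: value` line per disk, options comma-separated). The bus prefixes it checks, the storage name `ceph-pool` and the VMID 100 in the example are hypothetical choices for illustration.

```python
import re

# Disk keys to inspect; the bus prefixes covered here are an assumption
# (scsi0, virtio1, sata2, ide3, ...). Keys like "scsihw" or "unused0" do not match.
DISK_KEY = re.compile(r"^(scsi|virtio|sata|ide)\d+$")


def disks_missing_discard(config_text: str) -> list[str]:
    """Return the disk keys in a VM config whose options lack discard=on."""
    missing = []
    for line in config_text.splitlines():
        # Snapshot/pending sections start with "[name]"; only the
        # current configuration (everything before them) is checked.
        if line.startswith("["):
            break
        key, sep, value = line.partition(":")
        key = key.strip()
        if not sep or not DISK_KEY.match(key):
            continue
        # The volume ("storage:volume") contains no "=", so only real
        # key=value options end up in this dict.
        opts = dict(f.split("=", 1) for f in value.strip().split(",") if "=" in f)
        if opts.get("media") == "cdrom":
            continue  # CD-ROM drives have nothing to TRIM
        if opts.get("discard") != "on":
            missing.append(key)
    return missing


example = """\
scsihw: virtio-scsi-pci
scsi0: ceph-pool:vm-100-disk-0,discard=on,size=32G
scsi1: ceph-pool:vm-100-disk-1,size=3T
ide2: none,media=cdrom
"""
print(disks_missing_discard(example))  # ['scsi1']
```

Note that discard=on only lets QEMU pass the guest's TRIM requests through; the guest still has to issue them (for example via fstrim).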

-

Onslow replied to the thread Error with upgrading from Proxmox 8 to 9. Hi @mickyface, could it be one more case of the situation solved in this thread? https://forum.proxmox.com/threads/pbs-4-upgrade-on-a-dell-nx3230-doesnt-fully-boot.183192/post-851045

-

Johannes S replied to the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem). It depends on your use case. If you still want a working cluster when two nodes go down (due to maintenance or failure), you will need more than three nodes, for the reasons Udo explained in his write-up. But if you are fine with just being...
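The snippet doesn't show exactly what Udo's write-up covers, so this only illustrates one constraint that is usually involved: the cluster's majority quorum, assuming one vote per node and no QDevice. A minimal Python sketch of that arithmetic:

```python
def quorum_votes(total_votes: int) -> int:
    """Votes needed for a simple majority, assuming one vote per node."""
    return total_votes // 2 + 1


def still_quorate(nodes: int, nodes_down: int) -> bool:
    """True if the remaining nodes still hold a majority of the votes."""
    return nodes - nodes_down >= quorum_votes(nodes)


for n in (3, 4, 5):
    print(n, "nodes, 2 down ->", "quorate" if still_quorate(n, 2) else "no quorum")
# 3 nodes, 2 down -> no quorum
# 4 nodes, 2 down -> no quorum
# 5 nodes, 2 down -> quorate
```

Under this rule four nodes don't help with two failures, because 2 of 4 is not a majority. Ceph adds its own per-pool constraints (replica count and min_size) that this sketch does not model.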

-

Johannes S reacted to Martin.B.'s post in the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem) with

Like.

The goal is to see the difference between using Ceph on local storage vs. PVE nodes without local storage and some kind of remote storage. If any of this is used in a future production environment, there will not be much "load" on it. Neither CPU nor...

-

Johannes S reacted to alexskysilk's post in the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem) with

Like.

This is a major point. Using Ceph on this sort of POC will not teach you how to actually use Ceph in production, since you won't be able to put the type of load on it that would be meaningful. Is your intention to use this knowledge to put...

-

Johannes S reacted to Martin.B.'s post in the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem) with

Like.

Thanks for your fast answers. It looks like I will go this way: use only 2 of the hardware machines and build the Ceph cluster virtually. The machines only have 32GB RAM and an older 6-core CPU, so maybe using 2 hardware machines will be better...

-

Johannes S reacted to aaron's post in the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem) with

Like.

This is what we use for many internal tests, and also in the hands-on labs for our trainings. Good for functionality and behavior tests as long as performance is not a factor!

-

Johannes S reacted to UdoB's post in the thread Use Disk PassThrough or Raid-0 for PVE with Ceph (Testsystem) with

Like.

Sure! For teaching/learning/debugging this works great. Example: in my homelab I have one specific cluster member with 64 GiB RAM and 2 TB of local storage for VMs. I was able to create six virtual PVE nodes with 8 GiB RAM each, construct a...

-

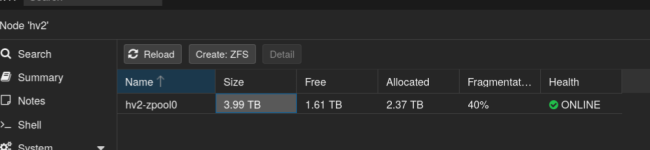

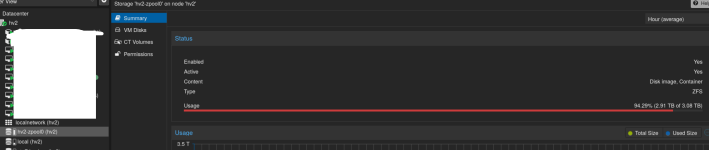

These basically show similar things to zpool status and zfs list. RAIDZ has padding overhead, and the GUI also mixes different units (TB vs TiB); that's where the discrepancy comes from. This was talked about here: -...
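Both effects can be put into numbers. Here is a minimal Python sketch. The unit conversion is exact; the padding function reproduces the classic allocation rounding of OpenZFS `vdev_raidz_asize()` for a RAIDZ vdev that has not been expanded. The 4-disk RAIDZ1 layout, ashift=12 and the block sizes in the example are assumptions for illustration, not values from the thread.

```python
TB = 10**12   # decimal terabyte, as used on drive labels
TIB = 2**40   # binary tebibyte


def tb_to_tib(tb: float) -> float:
    return tb * TB / TIB


def raidz_alloc_bytes(psize: int, ndisks: int, nparity: int, ashift: int = 12) -> int:
    """On-disk bytes for one block of psize bytes on a RAIDZ vdev.

    Follows the classic rounding in OpenZFS vdev_raidz_asize():
    data sectors, plus nparity sectors for every row of data,
    then rounded up to a multiple of (nparity + 1) sectors (the padding).
    """
    sector = 1 << ashift
    data = -(-psize // sector)                          # ceil(psize / sector)
    parity = nparity * -(-data // (ndisks - nparity))   # parity per row
    total = data + parity
    total = -(-total // (nparity + 1)) * (nparity + 1)  # padding
    return total * sector


print(f"4 TB = {tb_to_tib(4):.2f} TiB")  # 4 TB = 3.64 TiB
# 4-disk RAIDZ1 with 4K sectors: the ideal usable fraction is 3/4 = 0.75
for size in (8192, 16384, 131072):
    used = raidz_alloc_bytes(size, ndisks=4, nparity=1)
    print(size, "->", used, f"({size / used:.2f} efficient)")
# 8192 -> 16384 (0.50 efficient)
# 16384 -> 24576 (0.67 efficient)
# 131072 -> 180224 (0.73 efficient)
```

Small blocks lose the most to padding, which is one reason the space reported at the pool level and the usable space at the dataset level can differ by more than the parity ratio alone suggests.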

-

mosespray posted the thread community.proxmox.proxmox_kvm not setting VLAN Tag on update in Proxmox VE: Installation and configuration. Hello, I am trying to fully automate my disaster recovery solution for my Proxmox homelab. I am using Proxmox Virtual Environment 9.1.9 and ansible [core 2.20.5]. I am also using the latest community.proxmox.promox library. I have a set of...

-

knewman replied to the thread Confused About my ZFS Storage. Thanks for the quick reply! The links are very helpful.

-


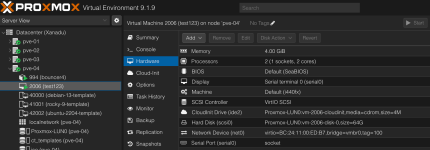

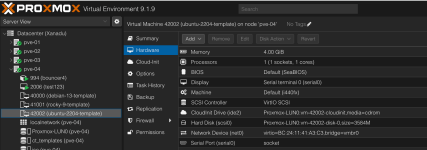

knewman posted the thread Confused About my ZFS Storage in Proxmox VE: Installation and configuration. Hello, I'm hoping someone more knowledgeable than me can help me understand how much space I actually have left on my Proxmox ZFS pool. If I do a zpool list -v, I get an output saying my pool is 3.62T in size with 2.16T allocated and 1.47T free...

-

powersupport replied to the thread Unable to remove a snapshot. Hi, it looks like the tape-restored snapshot may still have some locked or protected metadata. You may try: running a datastore verify, running garbage collection, checking for active tasks/locks, and restarting proxmox-backup-proxy. If normal removal...

-

Ok, I've now succeeded: as a last step, one does indeed have to repair the partition table. Here's a short write-up on how I finally did it to shrink a VM hard drive from 120G (14% used by Ubuntu Server 24.04 LTS) to 56G on a ZFS volume: First of...