Latest activity

-

wla posted the thread Proxmox VE with TrueNAS Proxmox VE Storage Plugin issue - when powered-off VMs are moved in Proxmox VE: Installation and configuration. PVE 9.1.6 with iSCSI TrueNAS Plugin 2.06 Configuration: ┌────────────────────┐ │ PVE1 9.1.6 │vmbr0: iscsi(VLAN 110) 192.168.110.12/24 │ NIC0 x───────────────────────────────────────── │ with │ mgmt(VLAN...

-

yorilouz reacted to Chris's post in the thread Proxmox-backup-client (3.4.6): "unclosed encoder dropped" / "upload failed" during pxar backup (Following #170211 patches) with Like.

The high number of files might be the reason, and the error completely lacks the error context (need to check what's wrong there and improve this). Did you try to bump entries-max already? --entries-max <integer> (default=1048576) Max...

-

yorilouz replied to the thread Proxmox-backup-client (3.4.6): "unclosed encoder dropped" / "upload failed" during pxar backup (Following #170211 patches). Thanks for the technical details! Looking at the code you shared (lines 681-687), it seems there is a specific error message: exceeded allowed number of file entries. However, in my case, I never saw this message in the logs. I only got unclosed...

-

haarm123 reacted to Falk R.'s post in the thread Wie kann ich im Cluster Ausfallsicherheit herstellen? (How can I set up failover protection in a cluster?) with Like.

No, this is not about the forum but about the fact that incorrect facts are stated there. Simple as that. Next time, just link the official wiki; it is stated correctly there too.

-

lucavornheder replied to the thread Configure migration settings network to share the same network of Ceph. Yeah, you can switch the network in the Datacenter menu under Options. Just keep in mind that 2x10G isn’t a lot for Ceph; if a migration starts running, you can hit bottlenecks pretty fast. I think there’s also an option to limit the migration...

-

lucavornheder replied to the thread What’s your workflow for documenting complex network setups for clients? I’ve had good experience with NetBox. Getting started is pretty complex, but once you get the hang of it, it’s very customizable and especially great for automation.

-

UdoB replied to the thread [SOLVED] Questions regarding Corosync and Q-Device. Great! Just make sure to use two independent physical connections. All VLANs in a single trunk will possibly fail at the same time... Sure. The limiting factor is (high) latency. And because that is often induced by congestion it should be...

-

proxuser77 replied to the thread Einführung von Einschränkungen / Kennzeichnungspflicht von KI-Beiträgen (Introducing restrictions / mandatory labeling of AI posts). The problem is that AI often fails to grasp the full context, no matter what the marketing departments of Anthropic or OpenAI want you to believe. A detailed bug report on a theoretical vulnerability that, in the concrete...

-

lucavornheder replied to the thread [SOLVED] Questions regarding Corosync and Q-Device. Because Corosync is running in unicast mode, you can keep everything in one network. That said, it’s cleaner to have a separate network for each cluster. On the host that runs Corosync, I’d also install Proxmox as a single node and host the...

-

yorilouz replied to the thread Proxmox-backup-client (3.4.6): "unclosed encoder dropped" / "upload failed" during pxar backup (Following #170211 patches). Hi Christian, That was exactly it! Increasing the --entries-max parameter to 2097152 solved the issue completely. The backup now completes successfully. Since this directory contains ~1.4M files, the default limit was indeed the bottleneck...
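
To gauge in advance whether a directory tree is likely to run into the default limit mentioned above, a rough entry count can help. The following is only a minimal sketch: the helper names `count_entries` and `exceeds_default` are hypothetical, and it assumes that the number of files and directories under the backup root is a reasonable proxy for what the client counts against --entries-max, which the excerpt does not spell out.

```python
import os

# Default value of --entries-max as quoted in the thread excerpt.
DEFAULT_ENTRIES_MAX = 1_048_576


def count_entries(root: str) -> int:
    """Count files and directories below root, without following symlinks."""
    total = 0
    for _dirpath, dirnames, filenames in os.walk(root, followlinks=False):
        total += len(dirnames) + len(filenames)
    return total


def exceeds_default(entry_count: int, limit: int = DEFAULT_ENTRIES_MAX) -> bool:
    """Return True if the entry count is above the given limit."""
    return entry_count > limit
```

With the roughly 1.4M files reported in the post, `exceeds_default(1_400_000)` is true, which is consistent with the backup only succeeding after the limit was raised to 2097152.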

-

lucavornheder replied to the thread Best practice Windows provisioning. Hi, we have already done a lot with CloudInit/Cloudbase. It takes a lot of time to learn, but once it runs, it runs. Cloudbase also only drives sysprep, and all the other tools I know of do the same.

-

Onslow replied to the thread Can't figure out permissions to access PVE storage content API. If I understand your issue right, you want to verify things on the PBS side. So the permissions on PBS matter.

-

Onslow replied to the thread [SOLVED] How to remove failed iSCSI multipath paths in Proxmox VE? Hi, @pulipulichen. In a similar situation I have cleared faulty devices with, e.g.: echo 1 > /sys/block/sdq/device/delete

-

Skye0 replied to the thread Can't figure out permissions to access PVE storage content API. Those are permissions for the PBS API, whereas I was trying to make calls to the PVE API. If there is a similar API to get the verification status on PBS I suppose I could query both systems, but it seems more straightforward to just query PVE...

-

RiHe reacted to Deerom's post in the thread Unable to migrate & start VM due to problematic TPM-disks with Like.

Hello, Recently we've experienced problematic behavior regarding TPM-disks within Proxmox 8.4.16, in our 30 node cluster ONE single node is unable to do the following: - Start new VMs which have a TPM-disk configured, error [1] - Live-migrate...

-

Onslow replied to the thread Can't figure out permissions to access PVE storage content API. Hi, @Skye0. You may want to have a look at https://pbs.proxmox.com/docs/user-management.html#api-tokens and https://pbs.proxmox.com/docs/user-management.html#access-control

-

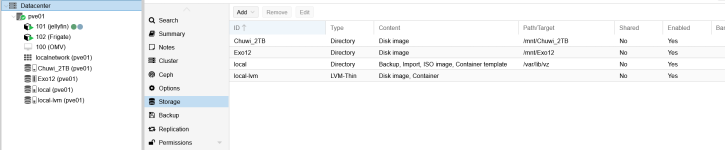

Hamlet replied to the thread Make a Proxmox NTFS HDD accessible under Windows network (Windows Explorer). Thank you very much, Alexskysilk! I am sorry for asking, I have been trying to understand but I cannot see how to serve SMB at the PVE level. Here is my current situation: I basically used /etc/fstab to UUID mount my two HDDs (Chuwi_2TB...

-

spirit replied to the thread Ceph - VM with high IO wait. Strange that you also have high memory pressure ("PSI some memory"). Do you have the NUMA option enabled on the VM? You can also look at the host NUMA stats (# apt install numactl, then # numastat) and check whether you have a lot of "numa_miss" vs "numa_hit"...
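
The "numa_miss" vs "numa_hit" comparison suggested here can also be read straight from the kernel's per-node counters. The sketch below is an illustration only: the helpers `parse_numastat` and `miss_ratio` are hypothetical names, the miss ratio is just one possible way to compare the two counters, and the post does not say what value would count as "a lot".

```python
import glob


def parse_numastat(text: str) -> dict[str, int]:
    """Parse 'name value' lines as found in a per-node numastat file."""
    counters: dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[1].isdigit():
            counters[parts[0]] = int(parts[1])
    return counters


def miss_ratio(counters: dict[str, int]) -> float:
    """Share of numa_miss among numa_hit + numa_miss (0.0 if both are zero)."""
    hit = counters.get("numa_hit", 0)
    miss = counters.get("numa_miss", 0)
    total = hit + miss
    return miss / total if total else 0.0


if __name__ == "__main__":
    # On Linux, each NUMA node exposes its counters under sysfs.
    for path in sorted(glob.glob("/sys/devices/system/node/node*/numastat")):
        with open(path) as fh:
            stats = parse_numastat(fh.read())
        print(path, stats.get("numa_hit"), stats.get("numa_miss"),
              f"{miss_ratio(stats):.4%}")
```

Run on the host, this prints one line per NUMA node; a ratio that keeps growing while the VM is under load would be the kind of signal the post asks about.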

-

uzumo replied to the thread [SOLVED] Anti-Cheat KVM Settings. That's amazing. I can't do it right away, but I'll try installing it on the test machine sometime next weekend.