Latest activity

-

tka222 posted the thread best practices network for the PVE Cluster in Proxmox VE: Installation and configuration. Hi guys, I just installed 3 brand new DELL R660 servers with 2 x 25 GbE SFP+, 2 x 1 GbE and 2 x 32G FC servers. The storage array will be a NVMe Dorado 6000V6 on the FC 32G. Multipath conf and LVM thick shared volumes are ok, but I have...

-

booodpoooq20 replied to the thread Ceph 20.2 Tentacle Release Available as test preview and Ceph 18.2 Reef soon to be fully EOL. DO NOT UPGRADE TO TENTACLE!!! It contains a critical bug and made my cluster show scrub errors (my scenario is upgrading an existing EC pool to fastec): HEALTH_ERR: 3862709 scrub errors. For details you can read this article...

-

shanreich replied to the thread Confused regarding guest isolation on cluster. The guests need the gateway to reach the internet, I assume? Then you'd need to allow traffic from / to the gateway / internet explicitly, and drop other traffic (from guest <-> guest).

-

ggoller replied to the thread Proxmox VE update to 9.1.5, now the network card is slow. Is the slowdown from inside of the VM to the router or also from the host to the router? Some other stuff to check: # check ring-buffer utilization ethtool -g eno4 # check interrupt coalescing ethtool -c eno4 # check if there are any...

-

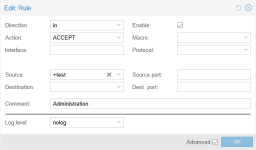

etfz replied to the thread Confused regarding guest isolation on cluster. I created this rule, where the source is an IP set containing my PC network (not the same as the network on which PVE runs). After enabling some logging, I could see that Ceph traffic was getting dropped, which I suppose makes sense, but I...

-

kmentzelos replied to the thread LVM on shared storage. Right, yes, that was me asking the same question earlier. I’ve been testing different setups (Ceph, ZFS replication, DRBD shared storage), and at the moment I feel confident setting up a primary/primary DRBD (plain DRBD, not LINSTOR) with LVM...

-

RodolfoRibeiro posted the thread Request: SAS HBA LUN Sharing Between Proxmox Cluster Hosts (Like VMware) in Proxmox VE: Installation and configuration. Hi Proxmox team and community, In Brazil, a very common virtualization setup is a 2-host cluster with direct SAS storage connection via HBA cards (e.g., LSI/Avago HBAs). This architecture allows both hosts to share the same virtual LUNs from...

-

RoxyProxy posted the thread Storage for small clusters, any good solutions? in Proxmox VE: Installation and configuration. Hi there, the title may be a bit deceptive, as I know there are good solutions that work for many, but for me/my workplace we face a bit of a dilemma. I know I'm opening this can of worms again and this is also partly me venting a bit of my...

-

kmentzelos replied to the thread LVM on shared storage. Alright, thanks. I will check.

-

alia80 replied to the thread Proxmox VE update to 9.1.5, now the network card is slow. ethtool eno4 Settings for eno4: Supported ports: [ TP ] Supported link modes: 10baseT/Half 10baseT/Full 100baseT/Half 100baseT/Full 1000baseT/Half 1000baseT/Full...

-

Swifty.hu replied to the thread xe_guc kernel driver failing on Linux 6.14.8-3-bpo12-pve Proxmox PVE 8.4.16. Another unhappy Intel B580 user here. I have the same error with the kernel 6.17.9-1-pve. @cdeck: Could you please show us your firmware versions? fwupdmgr get-devices gives the following info: ├─Arc B580: │ │ Device ID...

-

piotrpierzchala replied to the thread Space reclamation on thin provisioning after removing file from debian 13 with qcow2 disk. Right... Before moving disk (with 'qm move-disk') qm monitor ('info block') shows that specific disk uses '(...) "driver": "qcow2" (...)' after running 'qm move-disk' it shows: '(...) "driver": "zeroinit" (...)'... ...which I believe is a...

-

Johannes S reacted to mouse51180's post in the thread [SOLVED] Datacenter\Backup Server License Question with Like.

You can ignore this. The information about our license was misread and I see the confusion on my part now. Thank you.

-

Johannes S reacted to SteveITS's post in the thread [SOLVED] Datacenter\Backup Server License Question with Like.

PBS’s key is entered into PBS. PDM as I understand it doesn’t have a key but depends on the percentage of licensed PVE nodes connected.

-

See https://forum.proxmox.com/threads/cluster-aware-fs-for-shared-datastores.164933/#post-840004

-

Working off the previous answer, here is a guide on how to work with cloud-init images: https://www.thomas-krenn.com/en/wiki/Cloud_Init_Templates_in_Proxmox_VE_-_Quickstart .

-

I usually use Ubuntu cloud-init images from here: https://cloud-images.ubuntu.com/noble/current/ Though that's Ubuntu Server, so they don't come with a GUI. Admin user and password, as well as network config, you can set via CloudInit.

-

aaron replied to the thread LVM on shared storage. See https://forum.proxmox.com/threads/cluster-aware-fs-for-shared-datastores.164933/#post-840004

-

The Proxmox VE cluster puts a storage lock in place so the other nodes know not to modify the metadata. As with any other shared storage, there is only ever one active VM process accessing the data. Either the source VM, or after the handover...

-

ftzh75 replied to the thread Proxmox VE 9.1.1 dnsmasq issue. Hi, I'm experiencing another issue. I installed the DHCPMASQ in one node for vxlan zone. I created a service "dnsmasq@vnet100.service" and enabled it. But every time I change a network config and press the "Apply configuration" button...