Latest activity


gurubert replied to the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster?

No no no. You got your math wrong. To achieve the same availability as EC with k=6 and m=2 you need triple replication (three copies), meaning a storage efficiency of 33%. It is rarely necessary to go beyond 4 copies.
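The arithmetic behind that reply can be sketched as follows. This is only an illustration: the helper names `ec_efficiency` and `replica_efficiency` are made up here, and the sketch compares raw-capacity overhead only, not rebuild time or performance.

```python
from fractions import Fraction


def ec_efficiency(k: int, m: int) -> Fraction:
    """Usable share of raw capacity for an erasure-coded pool with
    k data chunks and m coding chunks."""
    return Fraction(k, k + m)


def replica_efficiency(copies: int) -> Fraction:
    """Usable share of raw capacity for a replicated pool."""
    return Fraction(1, copies)


# EC with k=6, m=2 survives the loss of m = 2 chunks.
# Replication with 3 copies survives the loss of 2 copies.
print(f"EC 6+2:     {float(ec_efficiency(6, 2)):.0%}")
print(f"3 replicas: {float(replica_efficiency(3)):.0%}")
```

So for the same number of tolerated failures, the erasure-coded pool keeps 75% of raw capacity usable, while triple replication keeps 33%.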

gurubert reacted to alexskysilk's post in the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? with Like.

"Lower" and "higher" are subjective. Ceph achieves HA using raw capacity. Suit yourself. This is not a recommended deployment. You are far better served by just having two SEPARATE VMs, each serving all those functions, without any Ceph at all...

gurubert reacted to alexskysilk's post in the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? with Like.

The number of OSDs isn't relevant to a pool as long as it is at least the minimum required by the CRUSH rule. For example, if you have an EC profile of k=8, m=2, you need a minimum of 10 OSDs DISTRIBUTED ACROSS 10 NODES, so 1 OSD per node...

UdoB replied to the thread Host reboots during backups.

You can limit ARC: https://pve.proxmox.com/wiki/ZFS_on_Linux#sysadmin_zfs_limit_memory_usage (While the ARC has the capability to shrink on demand, this mechanism is often too slow for a sudden request --> OOM)
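As a rough sketch of what such a limit looks like: the ZFS module parameter `zfs_arc_max` takes a size in bytes and is typically set through a modprobe options line. The helper name `arc_max_option` and the 8 GiB figure below are assumptions for illustration only; the linked wiki page remains the reference for the actual procedure and for choosing a sensible value.

```python
def arc_max_option(gib: int) -> str:
    """Build a modprobe options line that caps the ZFS ARC at `gib` GiB.

    zfs_arc_max expects bytes, so the GiB value is converted here.
    """
    if gib <= 0:
        raise ValueError("ARC limit must be a positive number of GiB")
    return f"options zfs zfs_arc_max={gib * 1024**3}"


# Example: an assumed 8 GiB cap, not a recommendation.
print(arc_max_option(8))  # options zfs zfs_arc_max=8589934592
```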

MarkusKo replied to the thread PVE 9.1 Installation on Dell R515.

Shouldn't it be "nomodeset"?

Bu66as replied to the thread merkwürtiges Netzwerkproblem beim Zugriff auf PVE.

@garfield2008, good, that confirms the cause. What changed: presumably, during the reinstallation/update, the OVS bridges or bonds picked up MTU 9000 from the NICs (OVS negotiates the MTU automatically based on the...

sgw replied to the thread OCFS2(unsupported): Frage zu Belegung.

Very informative, thanks. Unfortunately there is no movement yet; Sunday's scrub was still running a little while ago. I aborted it, and nothing changed in the displayed usage, not even after fstrim (this time about 200GB were freed). Now...

Marco83 replied to the thread Proxmox SDN Traffic breakout Interface and routing.

Yes, correct... I configured my SDN underlay network via 'Fabrics' using OSPF, and everything works perfectly. The traffic runs exactly over my dedicated VLAN into my switch fabric. But my problem now is: when I attach a guest VM (with SNAT or...

dork replied to the thread Snapshot causes VM to become unresponsive.

Hi Fiona, I was able to resolve this issue with qcow2 and avoid the downtime while taking/deleting snapshots. Can you send me a direct message?

Nexces replied to the thread Is a 3-node Full Mesh Setup For Ceph and Corosync Good or Bad.

3 x AMD EPYC 7713, 512GB RAM, 2x1TB SSD (RAID 1, OS), 5 x 3.84TB NVMe (Ceph)

Networking:
- 2-port embedded 1G NIC: 1 port for public internet access, 1 port for private network, both connected to switch ports acting as access ports to different...

SvenTej replied to the thread Secure Boot – Microsoft UEFI CA 2023 Certificate Not Included in EFI Disk.

Hey there, I am currently running a WIN2019 Server on Proxmox. On my task list is the UEFI certificate stuff ;-) In one of the previous posts I see that the setting is applied for new instances. But what about existing ones? Fiona stated that...

Nemesiz replied to the thread High latency on proxmox ceph cluster.

Try to turn off write cache.

viruslab replied to the thread Proxmox randomly reboots while doing backup job.

My Proxmox node reboots during backup once every month or two. No errors in kernel or journal. Sometimes there is a warning about memory pressure, but RAM is mostly occupied by ZFS.

viruslab replied to the thread Host reboots during backups.

My Proxmox node reboots during backup once every month or two. No errors in kernel or journal. Sometimes there is a warning about memory pressure, but RAM is mostly occupied by ZFS.

b0nef1sh replied to the thread Unexpected Reboots on current Kernel.

I installed and pinned that kernel and am still getting reboots, sadly. Maybe it is hardware after all.

Unfortunately, that usually does not happen with CTs. At least not before completion. Please don't. Snapshots are no more a replacement for a proper backup than RAID is. I always recommend both. Also take a look at cv4pve-autosnap. Also take a look at the...

Johannes S reacted to garfield2008's post in the thread merkwürtiges Netzwerkproblem beim Zugriff auf PVE with Like.

I think I have the solution. I just limited the MTU on the VM to 1500 and, lo and behold, I can reach all PVE/PBS hosts with all protocols. So the question remains: what changed half a year ago?

Johannes S reacted to deimosian's post in the thread enable CONFIG_RUST for Proxmox kernel with Like.

Then the next thread will be asking how to disable it.

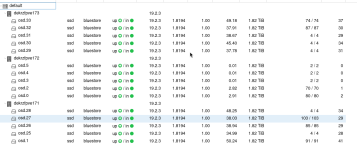

freaknils posted the thread High latency on proxmox ceph cluster in Proxmox VE: Installation and configuration.

Hi there, we are running a Proxmox PVE Ceph cluster. Current configuration is: 3 nodes, each with 2 x 10GBit/s LACP to 4 switches (only for Ceph), 2 x Intel Xeon E5-2640 2.6GHz, 192GB RAM, and 5 Crucial SATA SSD CT2000MX500SSD1 on HBA. But currently...

Johannes S reacted to alexskysilk's post in the thread Pardon my less-than-intelligent question, but is there a way to install Proxmox on a Ceph cluster? with Like.

"Lower" and "higher" are subjective. Ceph achieves HA using raw capacity. Suit yourself. This is not a recommended deployment. You are far better served by just having two SEPARATE VMs, each serving all those functions, without any Ceph at all...