Latest activity

-

tomptj replied to the thread [SOLVED] Tasks fail with Too many open files (os error 24). I had a similar problem. I disabled the cache in virtiofsd when mounting the filesystem to the VM (option: --cache=none), and the problem stopped occurring.

-

Zolt replied to the thread [SOLVED] PVE 9 - GRUB stops the machine immediately. Thanks for the tips, thatgermandude and glockmane. I hit the same issue last night. What was frustrating is that the server passed all pre-checks before I did the upgrade. I have two other PVE servers that have failures on the pre-checks...

-

huitheme replied to the thread Plugging in or unplugging the network cable will cause the PVE system to completely lose network connectivity. The complete log of today's communication outage.

-

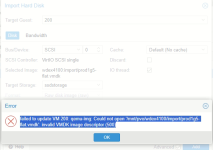

vaysala507 replied to the thread Imposible to migrate VM from vmware to proxmox. Hello, were you able to solve your problem? I have the same problem moving a vmdk from VMware 6.5 to PVE 9: failed to update VM 200: qemu-img: Could not open '/mnt/pve/wdex4100/import/prod1g5-flat.vmdk': invalid VMDK image descriptor (500)

Assumption: each NIC is assigned to a bridge. That's the default and OK. There are several solutions for what you want, for example limiting "Listen" for the relevant processes or establishing iptables rules. But approach "zero" is by far the...

-

Johannes S reacted to fstrankowski's post in the thread Immich LXC Server bringt CPU auf 100°C sobald immich Aufgaben laufen with Like.

That's all normal. Immich simply has many tasks running in the background, such as generating thumbnails. That needs CPU and creates load, as you can see. Load has to be dissipated, and that shows up as temperature. Mini PCs are not for...

vaysala507 posted the thread Error to Import VMDK to PVE9 in Proxmox VE: Installation and configuration. Hello people, first of all happy new year 2026. I am now trying to migrate a VM from VMware 6.5: I downloaded the VM's vmdk from the VMware 6.5 host with WinSCP, uploaded it to PVE 9 with the same WinSCP, and ran the command qm importdisk vm-id backup-name.vmdk disk-name...

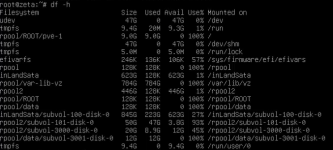

Check with something like this: `du -shc /* | sort -h`. Go deeper with `du -shc /bigdirectory/* | sort -h`, and so on with `du -shc /bigdirectory/.../* | sort -h`. For ZFS, `zfs list -ospace` is often a better choice than df.

-

SteveITS replied to the thread [SOLVED] no space left on device. You should be able to edit the thread to mark it solved.

-

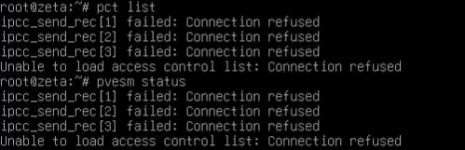

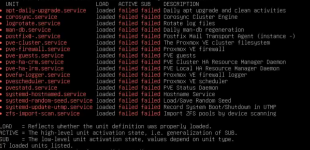

Andy Prime replied to the thread [SOLVED] no space left on device. @Impact Thank you for your response! I was able to dive into my rpool and found the backup dump maxed out. After deleting one of the largest ones, I was able to reboot and clear half of the failed services. The rest were related to the PVE and...

-

Andy Prime posted the thread [SOLVED] no space left on device in Proxmox VE: Installation and configuration. Hi! Looking for some help. I'm not sure what is clogging my rpool, but it has made the GUI unreachable and left a whole list of services failed. Would love some direction.

-

UHL replied to the thread Feature request: set LXC MTU from web GUI network menu option. This is still not solved on 9.1.x. I have an LXC with MTU 1400 set up via the web GUI, and then on the LXC the network comes up with MTU 1400.

-

kensan replied to the thread Is proxmox affected by ths one ?? - Systemd vsock sshd - Vulnerability. That file is part of the global config for the ssh client. It has nothing to do with sshd. My understanding of https://www.openwall.com/lists/oss-security/2025/12/28/4 is that the generator in question will run on the VM and set up a socket...

-

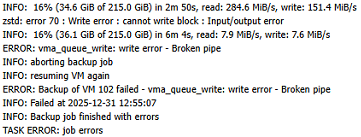

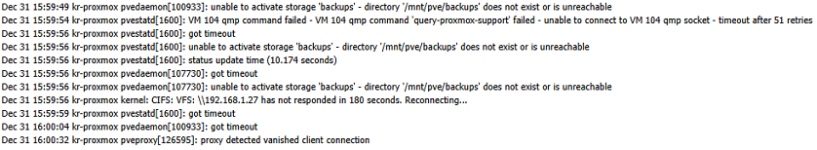

accidentally32megabytes replied to the thread Backups to Synology NAS fail about halfway through. More info: The backup target definitely has space left on it (2TB used out of 12TB). I also get this zstd error. Another corroboration that the NAS goes "offline" from the point of view of Proxmox. More logs:

-

robm posted the thread VM live migration issue - api error (status = 400: api error (status = 596: )) in Datacenter Manager: Installation and configuration. Tried a live VM migration between clusters, but got the error: api error (status = 400: api error (status = 596: )). What needs to be configured in each cluster to perform this type of migration? Root ssh keys authorized? Firewall...

SteveITS replied to the thread VM isolation on the same subnet/Vlan. Migration Planning. I would think that would work: for instance, on that VLAN, allow traffic to/from 10.10.10.5-10.10.10.10 and presumably its gateway, and deny others. A VNet firewall does require the new nftables firewall, which is in preview. Or as I mentioned...

-

gyptazy replied to the thread CPU masking für Hosts. I recently wrote the tool “ProxCLMC” (Prox CPU Live Migration Checker) for this, since the question keeps coming up. As @Falk R. already wrote, this is done on a per-VM basis. The idea is to integrate this into a pipeline in order to...

-

Kingneutron reacted to _gabriel's post in the thread I need a graphical interface on my PVE server with Like.

https://pve.proxmox.com/wiki/Developer_Workstations_with_Proxmox_VE_and_X11

SteveITS replied to the thread Server 2022 defrag/trim pegging CPU and RAM. Thanks for the thread. Just wanted to note the change requires a "power off" (shutdown) of the VM.