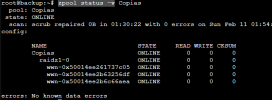

Hello, we received the error message below about one of the disks in our ZFS pool. I ran `zpool status -v <pool name>` and it doesn't report any errors. Is there anything else I should do? Thanks, best regards.

The number of I/O errors associated with a ZFS device exceeded acceptable levels. ZFS has marked the device as faulted.

impact: Fault tolerance of the pool may be compromised.

eid: 29

class: statechange

state: FAULTED

host: backup

time: 2024-02-28 15:03:46+0100

vpath: /dev/disk/by-id/ata-INTEL_SSDSC2BW120A4_PHDA435001511207GN-part3

vguid: 0x00C8769FC869172D

pool: rpool (0x8A4E837096270D08)
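As a rough sketch, not an official tool, you can look up the device named in the event's `vpath` in `zpool status` output and read its current STATE and READ/WRITE/CKSUM counters. The parser assumes the usual config table with a `NAME STATE READ WRITE CKSUM` header. The helper names (`vdev_states`, `device_state`, `read_status`) are hypothetical. Because `zpool status` shows only the last path component of a vdev unless `-P` is given, the lookup tries both the full `vpath` and its basename.

```python
import os
import subprocess


def read_status(pool):
    """Return the text of `zpool status -v <pool>` (needs ZFS tools, usually root)."""
    return subprocess.run(
        ["zpool", "status", "-v", pool],
        capture_output=True, text=True, check=True,
    ).stdout


def vdev_states(status_text):
    """Map each row name in the config table to (state, read, write, cksum).

    Counters are kept as strings because zpool may abbreviate them (e.g. "1.2K").
    """
    states = {}
    in_config = False
    for line in status_text.splitlines():
        fields = line.split()
        if fields[:5] == ["NAME", "STATE", "READ", "WRITE", "CKSUM"]:
            in_config = True  # device rows follow this header
            continue
        if not in_config:
            continue
        if line.strip().startswith("errors:"):
            break  # end of the config table
        if len(fields) < 5:
            continue  # blank lines or section labels such as "logs"
        # Extra trailing words (e.g. "too many errors") are ignored.
        states[fields[0]] = tuple(fields[1:5])
    return states


def device_state(status_text, vpath):
    """Find the row for a ZED vpath; returns None if the device is not listed."""
    states = vdev_states(status_text)
    # Full path appears only with `zpool status -P`; otherwise only the basename.
    return states.get(vpath) or states.get(os.path.basename(vpath))
```

For example, `device_state(read_status("rpool"), "/dev/disk/by-id/ata-INTEL_SSDSC2BW120A4_PHDA435001511207GN-part3")` would return the state tuple for that partition, or `None` if the pool no longer lists it under that name.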