Hi everyone,

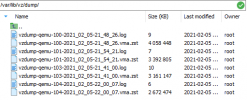

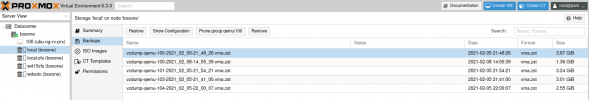

I had 4 VMs running on a single PVE node. I have now set up a new PVE node (new server). On the new node I created a cluster, which I wanted to join with the old node. To prepare, I created four backups of the VMs on the old node, deleted the VMs, and tested a restore: OK.

After the old node joined the newly created cluster, the storage names on the old node changed!

Why?

On the new node I have an NVMe M.2 drive with ZFS (rpool etc.).

On the old node I had the default local-lvm, as lvm-thin. After the join there was suddenly also a local-zfs entry, with status unknown.

I then followed this guide:

https://dannyda.com/2020/05/10/how-...-proxmox-ve-pve-and-some-lvm-basics-commands/

By editing /etc/pve/storage.cfg I have now managed to get the "thin-lvm" storage back. It is of course not the original one; I deleted that and recreated it.

lvmthin: local-lvm

thinpool data

vgname pve

content rootdir,images
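To double-check that an entry like the one above is read the way I expect, here is a minimal Python sketch that parses storage.cfg-style text (a `type: id` header followed by `key value` option lines). The function name `parse_storage_cfg` and the `SAMPLE` string are my own illustration, not anything PVE ships, and the parser only covers this simple layout.

```python
def parse_storage_cfg(text):
    """Parse storage.cfg-style text into {storage_id: {"type": ..., option: value}}."""
    storages = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        # A section header looks like "<type>: <storage id>" and is not indented.
        if not raw[0].isspace() and ":" in line:
            stype, sid = (part.strip() for part in line.split(":", 1))
            current = {"type": stype}
            storages[sid] = current
        elif current is not None:
            # Option lines are "<key> <value>"; a key without a value maps to "".
            parts = line.split(None, 1)
            current[parts[0]] = parts[1] if len(parts) > 1 else ""
    return storages


# Hypothetical sample mirroring the entry from this post.
SAMPLE = """\
lvmthin: local-lvm
        thinpool data
        vgname pve
        content rootdir,images
"""

cfg = parse_storage_cfg(SAMPLE)
print(cfg["local-lvm"])
```

This only confirms that the config text is well-formed; it says nothing about whether the thin pool `data` in volume group `pve` is actually healthy on disk.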

Then I tried to restore the VM backups, unfortunately without success.

Here is the log:

restore vma archive: zstd -q -d -c /var/lib/vz/dump/vzdump-qemu-104-2021_02_05-22_00_07.vma.zst | vma extract -v -r /var/tmp/vzdumptmp3053.fifo - /var/tmp/vzdumptmp3053

CFG: size: 395 name: qemu-server.conf

DEV: dev_id=1 size: 34359738368 devname: drive-virtio0

CTIME: Fri Feb 5 22:00:10 2021

Logical volume "vm-100-disk-0" created.

new volume ID is 'local-lvm:vm-100-disk-0'

map 'drive-virtio0' to '/dev/pve/vm-100-disk-0' (write zeros = 0)

progress 1% (read 343605248 bytes, duration 3 sec)

progress 2% (read 687210496 bytes, duration 5 sec)

progress 3% (read 1030815744 bytes, duration 5 sec)

progress 4% (read 1374420992 bytes, duration 7 sec)

progress 5% (read 1718026240 bytes, duration 9 sec)

progress 6% (read 2061631488 bytes, duration 13 sec)

progress 7% (read 2405236736 bytes, duration 17 sec)

progress 8% (read 2748841984 bytes, duration 19 sec)

progress 9% (read 3092381696 bytes, duration 21 sec)

progress 10% (read 3435986944 bytes, duration 22 sec)

progress 11% (read 3779592192 bytes, duration 22 sec)

progress 12% (read 4123197440 bytes, duration 22 sec)

progress 13% (read 4466802688 bytes, duration 22 sec)

progress 14% (read 4810407936 bytes, duration 23 sec)

progress 15% (read 5154013184 bytes, duration 23 sec)

progress 16% (read 5497618432 bytes, duration 23 sec)

progress 17% (read 5841158144 bytes, duration 24 sec)

progress 18% (read 6184763392 bytes, duration 24 sec)

progress 19% (read 6528368640 bytes, duration 24 sec)

progress 20% (read 6871973888 bytes, duration 24 sec)

progress 21% (read 7215579136 bytes, duration 24 sec)

progress 22% (read 7559184384 bytes, duration 25 sec)

progress 23% (read 7902789632 bytes, duration 26 sec)

progress 24% (read 8246394880 bytes, duration 29 sec)

progress 25% (read 8589934592 bytes, duration 34 sec)

progress 26% (read 8933539840 bytes, duration 37 sec)

progress 27% (read 9277145088 bytes, duration 38 sec)

progress 28% (read 9620750336 bytes, duration 42 sec)

progress 29% (read 9964355584 bytes, duration 44 sec)

progress 30% (read 10307960832 bytes, duration 44 sec)

progress 31% (read 10651566080 bytes, duration 44 sec)

progress 32% (read 10995171328 bytes, duration 44 sec)

progress 33% (read 11338776576 bytes, duration 44 sec)

progress 34% (read 11682316288 bytes, duration 44 sec)

progress 35% (read 12025921536 bytes, duration 44 sec)

progress 36% (read 12369526784 bytes, duration 46 sec)

progress 37% (read 12713132032 bytes, duration 46 sec)

progress 38% (read 13056737280 bytes, duration 46 sec)

progress 39% (read 13400342528 bytes, duration 46 sec)

progress 40% (read 13743947776 bytes, duration 46 sec)

progress 41% (read 14087553024 bytes, duration 46 sec)

progress 42% (read 14431092736 bytes, duration 49 sec)

progress 43% (read 14774697984 bytes, duration 52 sec)

progress 44% (read 15118303232 bytes, duration 55 sec)

progress 45% (read 15461908480 bytes, duration 60 sec)

progress 46% (read 15805513728 bytes, duration 63 sec)

progress 47% (read 16149118976 bytes, duration 65 sec)

progress 48% (read 16492724224 bytes, duration 68 sec)

progress 49% (read 16836329472 bytes, duration 81 sec)

progress 50% (read 17179869184 bytes, duration 85 sec)

progress 51% (read 17523474432 bytes, duration 88 sec)

progress 52% (read 17867079680 bytes, duration 89 sec)

progress 53% (read 18210684928 bytes, duration 91 sec)

progress 54% (read 18554290176 bytes, duration 92 sec)

progress 55% (read 18897895424 bytes, duration 92 sec)

progress 56% (read 19241500672 bytes, duration 92 sec)

progress 57% (read 19585105920 bytes, duration 92 sec)

progress 58% (read 19928711168 bytes, duration 92 sec)

progress 59% (read 20272250880 bytes, duration 92 sec)

progress 60% (read 20615856128 bytes, duration 93 sec)

progress 61% (read 20959461376 bytes, duration 96 sec)

progress 62% (read 21303066624 bytes, duration 96 sec)

progress 63% (read 21646671872 bytes, duration 97 sec)

progress 64% (read 21990277120 bytes, duration 97 sec)

progress 65% (read 22333882368 bytes, duration 97 sec)

progress 66% (read 22677487616 bytes, duration 97 sec)

progress 67% (read 23021027328 bytes, duration 97 sec)

progress 68% (read 23364632576 bytes, duration 97 sec)

progress 69% (read 23708237824 bytes, duration 97 sec)

progress 70% (read 24051843072 bytes, duration 97 sec)

progress 71% (read 24395448320 bytes, duration 97 sec)

progress 72% (read 24739053568 bytes, duration 97 sec)

progress 73% (read 25082658816 bytes, duration 97 sec)

progress 74% (read 25426264064 bytes, duration 97 sec)

progress 75% (read 25769803776 bytes, duration 97 sec)

progress 76% (read 26113409024 bytes, duration 97 sec)

progress 77% (read 26457014272 bytes, duration 97 sec)

progress 78% (read 26800619520 bytes, duration 97 sec)

progress 79% (read 27144224768 bytes, duration 97 sec)

progress 80% (read 27487830016 bytes, duration 97 sec)

progress 81% (read 27831435264 bytes, duration 97 sec)

progress 82% (read 28175040512 bytes, duration 97 sec)

progress 83% (read 28518645760 bytes, duration 97 sec)

progress 84% (read 28862185472 bytes, duration 97 sec)

progress 85% (read 29205790720 bytes, duration 97 sec)

progress 86% (read 29549395968 bytes, duration 97 sec)

progress 87% (read 29893001216 bytes, duration 97 sec)

progress 88% (read 30236606464 bytes, duration 97 sec)

progress 89% (read 30580211712 bytes, duration 97 sec)

progress 90% (read 30923816960 bytes, duration 97 sec)

progress 91% (read 31267422208 bytes, duration 97 sec)

progress 92% (read 31610961920 bytes, duration 97 sec)

progress 93% (read 31954567168 bytes, duration 97 sec)

progress 94% (read 32298172416 bytes, duration 97 sec)

progress 95% (read 32641777664 bytes, duration 97 sec)

progress 96% (read 32985382912 bytes, duration 97 sec)

progress 97% (read 33328988160 bytes, duration 97 sec)

progress 98% (read 33672593408 bytes, duration 97 sec)

progress 99% (read 34016198656 bytes, duration 97 sec)

progress 100% (read 34359738368 bytes, duration 97 sec)

vma: restore failed - vma blk_flush drive-virtio0 failed

/bin/bash: line 1: 3055 Done zstd -q -d -c /var/lib/vz/dump/vzdump-qemu-104-2021_02_05-22_00_07.vma.zst

3056 Trace/breakpoint trap | vma extract -v -r /var/tmp/vzdumptmp3053.fifo - /var/tmp/vzdumptmp3053

device-mapper: message ioctl on (253:4) failed: Operation not supported

Failed to process message "delete 1".

Failed to suspend pve/data with queued messages.

unable to cleanup 'local-lvm:vm-100-disk-0' - lvremove 'pve/vm-100-disk-0' error: Failed to update pool pve/data.

no lock found trying to remove 'create' lock

TASK ERROR: command 'set -o pipefail && zstd -q -d -c /var/lib/vz/dump/vzdump-qemu-104-2021_02_05-22_00_07.vma.zst | vma extract -v -r /var/tmp/vzdumptmp3053.fifo - /var/tmp/vzdumptmp3053' failed: exit code 133
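A side note on the exit code in the log: as far as I understand the usual shell convention, a status above 128 means the process was killed by signal number (status - 128). So 133 would be signal 5, which fits the "Trace/breakpoint trap" line for `vma extract`. A small Python sketch to decode it (the helper name `decode_exit_status` is my own):

```python
import signal


def decode_exit_status(code):
    """Return the signal name if `code` follows the shell's 128+N convention, else None."""
    if code > 128:
        try:
            return signal.Signals(code - 128).name
        except ValueError:
            return None  # no signal with that number on this platform
    return None


# 133 - 128 = 5, which is SIGTRAP on Linux.
print(decode_exit_status(133))
```

So the exit code only says that `vma` aborted; the actual cause is more likely in the device-mapper and thin pool messages right before it.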

I realize that the restore process doesn't like something about the newly created storage, but what exactly?

I don't feel like rebuilding the VMs from scratch...
