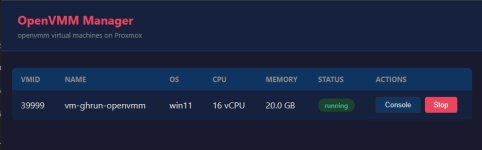

I can report a bit of progress on my side. With the CPU flag +hv-passthrough, CPU utilization drops from 11% to about 3%, with VBS enabled and running. The VM also feels much faster. Keep in mind that +hv-passthrough cannot be set in the GUI.
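For anyone who wants to try this: since the GUI does not expose the flag, one possible route is the VM's raw `args` option. This is only a sketch under assumptions, not necessarily how it was set here: the VMID 100 is hypothetical, and the `host` CPU type is assumed.

```shell
# Sketch only: VMID 100 is a hypothetical placeholder, and the "host" CPU type is an assumption.
# --args passes extra options straight to QEMU; hv-passthrough is a QEMU CPU property
# that exposes the Hyper-V enlightenments supported by the host.
qm set 100 --args '-cpu host,hv-passthrough'

# Check that the line landed in the VM config.
qm config 100 | grep '^args'
```

Note that a `-cpu` given through `args` is expected to take the place of the CPU line Proxmox generates, so any flags chosen in the GUI may no longer apply. Test on a non-production VM first.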

In the meantime I have upgraded QEMU to 10.2 and I am running kernel 7.0-rc6, both from the pve-test repo.

I noticed that snapshots do not work while the VM is running. I am testing on my home/lab setup. I need to verify this on Xeon hardware ...

Thanks to @lordprotector
