Hello Proxmox Community. First post here!

I'm encountering some significant issues after upgrading my Proxmox VE host/cluster from version 8.3.4 to 8.3.5 and would appreciate any insights or guidance.

Environment:

- Proxmox VE version (before): 8.3.4

- Proxmox VE version (after): 8.3.5

- VM BIOS Type: OVMF (UEFI)

- Network Card Model (tested): Intel E1000 (primarily), potentially others if relevant.

Working Scenario (Proxmox VE 8.3.4): Before the upgrade, UEFI PXE booting for my VMs worked perfectly. I could:

- Set the network card (netX) as the first boot device in the VM's Options > Boot Order.

- Alternatively, manually select the "Network Boot" option from within the VM's UEFI boot menu (accessed via ESC during startup).

Both methods successfully initiated the PXE boot process.

Problem Description (After Upgrading to Proxmox VE 8.3.5): Immediately after upgrading to 8.3.5, the following issues appeared:

- UEFI PXE Boot Failure:

  - Setting the network card as the first boot device in Proxmox Boot Order no longer triggers a PXE boot attempt. The VM seems to skip it and moves to the next device.

  - More significantly, the Network Boot option has completely disappeared from the list of bootable devices within the VM's internal UEFI menu. It's no longer available for manual selection.

- Software-Defined Network (SDN) Malfunction:

  - Alongside the PXE boot issue, our configured Proxmox SDN setup has stopped working correctly after the upgrade.

  - Consequently, VMs that rely on this SDN configuration are also malfunctioning.

Troubleshooting Done So Far:

- Verified VM configuration (Boot Order, UEFI settings).

- Rebooted the Proxmox host(s).

- Checked basic network connectivity for the host.
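To make the VM configuration check in the first item concrete, here is a minimal sketch of it. It assumes the config is available as `key: value` text, as in the VM's `.conf` file, with the boot order stored as `boot: order=...`. The sample config and the helper names (`parse_vm_config`, `boot_order`, `check_pxe_prerequisites`) are hypothetical illustrations, not my actual setup or any Proxmox API.

```python
def parse_vm_config(text):
    """Parse 'key: value' lines into a dict, skipping blanks and '#' comments."""
    config = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first colon only; values (e.g. MAC addresses) contain colons.
        key, sep, value = line.partition(":")
        if sep:
            config[key.strip()] = value.strip()
    return config


def boot_order(config):
    """Return the device list from a 'boot: order=a;b;c' entry, or [] if absent."""
    for part in config.get("boot", "").split(","):
        if part.startswith("order="):
            return [dev for dev in part[len("order="):].split(";") if dev]
    return []


def check_pxe_prerequisites(text):
    """Report whether the config looks set up for UEFI network boot."""
    config = parse_vm_config(text)
    order = boot_order(config)
    first = order[0] if order else ""
    return {
        "uefi": config.get("bios") == "ovmf",
        "net_first": first.startswith("net"),
        "net_defined": first.startswith("net") and first in config,
    }


# Hypothetical sample config for illustration only (not taken from the affected VM).
SAMPLE = """\
bios: ovmf
boot: order=net0;scsi0
net0: e1000=BC:24:11:00:00:01,bridge=vmbr0
scsi0: local-lvm:vm-100-disk-0,size=32G
"""

if __name__ == "__main__":
    print(check_pxe_prerequisites(SAMPLE))
```

If all three flags come back true, the VM-side settings look right, which would suggest the problem lies in the firmware or network layer rather than the VM definition.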

Questions:

- Is this a known regression or bug introduced in Proxmox VE 8.3.5?

- Has anyone else experienced similar issues with UEFI PXE boot or SDN breaking after this specific upgrade?

- Are there any specific logs (QEMU, pvedaemon, SDN logs, kernel logs) I should check for relevant errors?

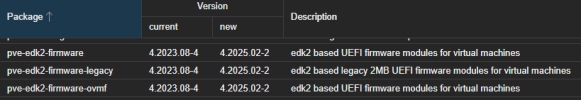

- Could the UEFI PXE issue and the SDN issue be related, perhaps due to changes in the underlying network stack or related packages (QEMU, EDK2 firmware)?

- Are there any potential workarounds or fixes available?

Any help or pointers would be greatly appreciated. I'm happy to provide relevant configuration files or log snippets if needed (e.g., pveversion -v, VM config, SDN config, network config).

Thank you!