Hi all,

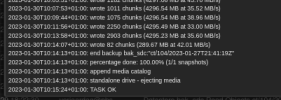

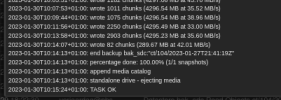

TL;WR:

- I had a snapshot that failed verification

- Restore from tape did not correct any chunks

- On re-verification it turned out that the verification had incorrectly flagged a number of chunks as failed

- Lessons learned: use server-grade hardware or exercise patience (i.e., don't run multiple I/O-intensive tasks in parallel on a single SAS HDD when the CPU is underpowered and there is no ECC memory)

Original post:

~~~~~~~~~~~~~~~~~~~~~~~~~~

I am restoring from tape. I think it is the whole media set, but I am not quite sure.

I ran the task from the GUI by selecting the date and then clicking "Restore".

The Restore button first opens a window for selecting a media set; the single available snapshot is selected.

There are 5 backups of the container, only the first of which is written to tape.

I had expected the chunks to be read and synchronously written to PBS. Instead, the tape spins through at relatively high speed, but the task monitor only says "File n, restored 0 B, register nnnn chunks".

Disk writes are only about 100 kb/s, with hardly any CPU load.

What should I expect here?

I only thought of checking the help on restoring after the process did not match my expectations. The help mentions options for retrieving only partial data from tape, but I think in my case it is the whole media set.

My expectation for this media set with only this single backup of one container:

- Press restore

- Select the media set to restore (all contents, which is only one item in this case)

- See chunks being read from tape

- See chunks being written to storage

- Switch tapes when needed

- ... repeat ...

- Until the last chunk is read and written

- Done
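To make that expectation concrete, here is a toy Python sketch of the single-pass, streaming restore I had in mind. It is not how PBS actually works: `restore_media_set`, the list-of-lists "tapes", and the dict-based store are all made up for illustration.

```python
import hashlib
from typing import Dict, Iterable, List


def restore_media_set(tapes: Iterable[List[bytes]], store: Dict[str, bytes]) -> int:
    """Stream every chunk from every tape straight into the store.

    Hypothetical model: each tape is a list of chunk payloads, and the
    store maps a SHA-256 hex digest to the chunk data.
    """
    written = 0
    for tape in tapes:                # switch tapes when needed
        for chunk in tape:            # chunk is read from tape
            digest = hashlib.sha256(chunk).hexdigest()
            if digest not in store:   # chunk is written to storage
                store[digest] = chunk
                written += 1
    return written                    # done after the last chunk


store: Dict[str, bytes] = {}
tapes = [[b"chunk-a", b"chunk-b"], [b"chunk-b", b"chunk-c"]]
count = restore_media_set(tapes, store)
print(f"wrote {count} chunks")
```

In this model each tape is read exactly once and writes happen while reading, which is the behaviour I was expecting to see in the task log.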

At what data rate is the tape running now? Because the PBS tape driver runs separately from the default Linux tape driver, I am not sure whether I can use tape tools while PBS is using the drive. The backup itself ran at 30-40 MB/s.

I had the option of replacing it with an LTO4 drive, so I'd expect at least comparable performance. The restore task window (PBS 2.4) shows the number of chunks and the timestamp, but not the data rate. Comparing the screenshot above (about 110 seconds for 1000 chunks, giving 35 MB/s) with the earlier screenshot, I'd expect at least comparable data rates.
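For reference, this is roughly how I get from chunk counts and timestamps to a data rate. It is only a rough estimate: the 4 MiB per chunk is an assumption on my side (real chunks can be smaller and are compressed), and `estimate_rate_mib_s` is just a helper name I made up.

```python
MIB = 1024 * 1024


def estimate_rate_mib_s(chunks: int, seconds: float, avg_chunk_bytes: int = 4 * MIB) -> float:
    """Estimate throughput in MiB/s from a chunk count and elapsed time.

    avg_chunk_bytes is an assumed average chunk size, not a measured value.
    """
    return chunks * avg_chunk_bytes / seconds / MIB


# 1000 chunks in about 110 seconds, as read from the task log timestamps
rate = estimate_rate_mib_s(1000, 110)
print(f"{rate:.1f} MiB/s")  # prints "36.4 MiB/s"
```

With that assumption the result lands in the same ballpark as the 35 MB/s mentioned above; a smaller average chunk size would lower it proportionally.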

Seeing there's a mismatch between my expectation and the actual process: what should I expect to see while restoring?

My main 'worry' now is that I have to sit and wait indefinitely for the tape to be fully scanned/indexed, switch tapes a couple of times, and then go through the tapes again for the actual restore.

The actual reason for the restore will get its own ticket (looking at the clock, probably tomorrow ;-) ): my four earlier backups of the container, as well as the backup I just made, all fail verification (where these four used to pass). I hope this backup from tape will overwrite the first. If a deduplicated chunk in the first backup got corrupted, will it cause all subsequent backups to fail?
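To show what I mean by that last question, here is a toy Python model of deduplication as I understand it: each chunk is stored once under its SHA-256 digest, and a snapshot is just a list of digests. The dict store, `add_backup`, and `verify` are made up for illustration and are not PBS code.

```python
import hashlib
from typing import Dict, List

store: Dict[str, bytes] = {}  # hypothetical chunk store: digest -> data


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def add_backup(chunks: List[bytes]) -> List[str]:
    """Store each chunk once and return the snapshot's list of digests."""
    index = []
    for chunk in chunks:
        d = digest(chunk)
        store.setdefault(d, chunk)  # deduplication: keep the existing copy
        index.append(d)
    return index


def verify(snapshot: List[str]) -> bool:
    """A snapshot passes only if every referenced chunk matches its digest."""
    return all(d in store and digest(store[d]) == d for d in snapshot)


first = add_backup([b"base-os", b"config-v1"])
second = add_backup([b"base-os", b"config-v2"])
unrelated = add_backup([b"other-data"])

# Simulate corruption of the one chunk that both backups share.
store[digest(b"base-os")] = b"bit-rot"

results = (verify(first), verify(second), verify(unrelated))
print(results)  # prints "(False, False, True)"
```

In this model, one bad shared chunk makes every snapshot that references it fail, while snapshots that do not reference it still pass.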
