Hi All,

Hope you are having a great day!

Today I came across an interesting error with snapshots on our Proxmox cluster. Updates were installed on the cluster overnight, and I fear they caused this issue.

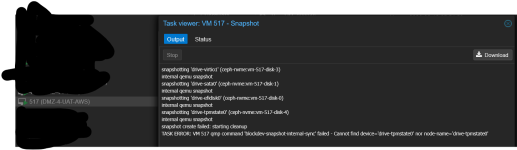

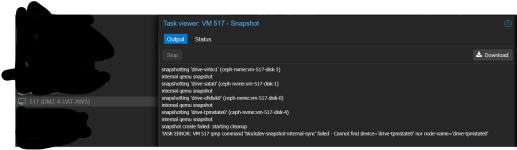

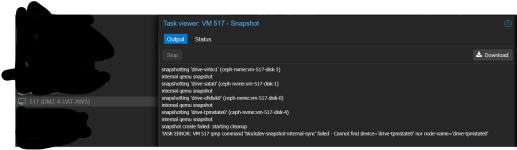

Currently, whenever we attempt to snapshot a VM while it is running, the following error appears after the "CLEANUP" line in the GUI: qmp command 'blockdev-snapshot-internal-sync' failed - Cannot find device='drive-tpmstate0' nor node-name='drive-tpmstate0'

This error is reproducible on all of our VMs (roughly 70), regardless of their age.

Interestingly, if the VM is not running (shut down or stopped), the snapshot succeeds with no issues.

Has anyone come across this issue before, or does anyone know what it might be?

Many thanks for any and all help!

I will put the PVE versions, VM configs, etc. below:

SCREENSHOT OF GUI WHEN DOING A SNAP WITH THE VM POWERED ON (AND RAM UNTICKED)

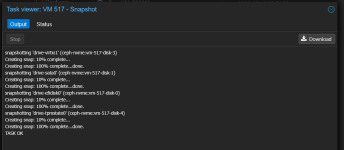

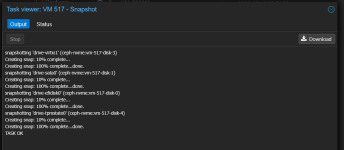

SCREENSHOT OF GUI WHEN DOING A SNAP WITH THE VM POWERED OFF

PVEVERSION -V

Bash:

root@S-1121:~# pveversion -v

proxmox-ve: 9.1.0 (running kernel: 6.17.9-1-pve)

pve-manager: 9.1.9 (running version: 9.1.9/ee7bad0a3d1546c9)

proxmox-kernel-helper: 9.0.4

proxmox-kernel-7.0: 7.0.0-3

proxmox-kernel-7.0.0-3-pve-signed: 7.0.0-3

proxmox-kernel-6.17: 6.17.13-6

proxmox-kernel-6.17.13-6-pve-signed: 6.17.13-6

proxmox-kernel-6.17.9-1-pve-signed: 6.17.9-1

proxmox-kernel-6.8: 6.8.12-18

proxmox-kernel-6.8.12-18-pve-signed: 6.8.12-18

proxmox-kernel-6.8.4-2-pve-signed: 6.8.4-2

ceph: 20.2.1-pve1

ceph-fuse: 20.2.1-pve1

corosync: 3.1.10-pve2

criu: 4.1.1-1

dnsmasq: 2.91-1

frr-pythontools: 10.4.1-1+pve1

ifupdown2: 3.3.0-1+pmx12

ksm-control-daemon: 1.5-1

libjs-extjs: 7.0.0-5

libproxmox-acme-perl: 1.7.1

libproxmox-backup-qemu0: 2.0.2

libproxmox-rs-perl: 0.4.1

libpve-access-control: 9.0.7

libpve-apiclient-perl: 3.4.2

libpve-cluster-api-perl: 9.1.2

libpve-cluster-perl: 9.1.2

libpve-common-perl: 9.1.11

libpve-guest-common-perl: 6.0.2

libpve-http-server-perl: 6.0.5

libpve-network-perl: 1.3.0

libpve-notify-perl: 9.1.2

libpve-rs-perl: 0.13.0

libpve-storage-perl: 9.1.2

libspice-server1: 0.15.2-1+b1

lvm2: 2.03.31-2+pmx1

lxc-pve: 6.0.5-4

lxcfs: 6.0.4-pve1

novnc-pve: 1.6.0-4

openvswitch-switch: 3.5.0-1+b1

proxmox-backup-client: 4.2.0-1

proxmox-backup-file-restore: 4.2.0-1

proxmox-backup-restore-image: 1.0.0

proxmox-firewall: 1.2.2

proxmox-kernel-helper: 9.0.4

proxmox-mail-forward: 1.0.3

proxmox-mini-journalreader: 1.6

proxmox-offline-mirror-helper: 0.7.3

proxmox-widget-toolkit: 5.1.9

pve-cluster: 9.1.2

pve-container: 6.1.5

pve-docs: 9.1.2

pve-edk2-firmware: 4.2025.05-2

pve-esxi-import-tools: 1.0.1

pve-firewall: 6.0.4

pve-firmware: 3.18-3

pve-ha-manager: 5.2.0

pve-i18n: 3.7.1

pve-qemu-kvm: 10.1.2-7

pve-xtermjs: 5.5.0-3

qemu-server: 9.1.9

smartmontools: 7.4-pve1

spiceterm: 3.4.2

swtpm: 0.8.0+pve3

vncterm: 1.9.2

zfsutils-linux: 2.4.1-pve1

PVE STORAGE CONFIGURATION

Bash:

root@S-1121:~# cat /etc/pve/storage.cfg

dir: local

disable

path /var/lib/vz

content vztmpl,iso,backup

shared 0

zfspool: local-zfs

disable

pool rpool/data

content images,rootdir

sparse 0

rbd: ceph-nvme

content rootdir,images

krbd 0

pool ceph-nvme

cephfs: ceph-isostore

path /mnt/pve/ceph-isostore

content vztmpl,backup,iso

fs-name ceph-isostore

pbs: PBS

datastore Backups

server [REDACTED]

content backup

fingerprint [REDACTED]

prune-backups keep-all=1

username [REDACTED]

VM CONFIGURATION

Bash:

agent: 1

bios: ovmf

boot: order=sata0;ide0

cores: 12

cpu: host

efidisk0: ceph-nvme:vm-517-disk-0,efitype=4m,pre-enrolled-keys=1,size=1M

ide0: none,media=cdrom

machine: pc-q35-9.2+pve1

memory: 49152

meta: creation-qemu=9.2.0,ctime=1750265172

name: DMZ-4-UAT-AWS

net0: virtio=BC:24:11:9D:8E:E4,bridge=vmbr2,firewall=1,tag=3569

numa: 0

ostype: win11

parent: Burgas_HF7_260427

sata0: ceph-nvme:vm-517-disk-1,discard=on,size=80G,ssd=1

scsihw: virtio-scsi-single

smbios1: uuid=0356df6a-d2d1-40f2-9466-fded870edb7f

sockets: 1

tpmstate0: ceph-nvme:vm-517-disk-4,size=4M,version=v2.0

unused0: ceph-nvme:vm-517-disk-2

vga: virtio,memory=16

virtio1: ceph-nvme:vm-517-disk-3,iothread=1,size=80G

vmgenid: 23526ef7-3bf4-496b-9892-1ea6ba9bbeb8

PS OF ONE OF THE AFFECTED MACHINES

Bash:

root@S-1121:~# ps -ax | grep -- 'id 517'

807333 ? Sl 32:23 /usr/bin/kvm -id 517 -name DMZ-4-UAT-AWS,debug-threads=on -no-shutdown -chardev socket,id=qmp,path=/var/run/qemu-server/517.qmp,server=on,wait=off -mon chardev=qmp,mode=control -chardev socket,id=qmp-event,path=/var/run/qmeventd.sock,reconnect-ms=5000 -mon chardev=qmp-event,mode=control -pidfile /var/run/qemu-server/517.pid -daemonize -smbios type=1,uuid=0356df6a-d2d1-40f2-9466-fded870edb7f -drive if=pflash,unit=0,format=raw,readonly=on,file=/usr/share/pve-edk2-firmware//OVMF_CODE_4M.secboot.fd -drive if=pflash,unit=1,id=drive-efidisk0,cache=writeback,format=raw,file=rbd:ceph-nvme/vm-517-disk-0:conf=/etc/pve/ceph.conf:id=admin:keyring=/etc/pve/priv/ceph/ceph-nvme.keyring:rbd_cache_policy=writeback,size=540672 -smp 12,sockets=1,cores=12,maxcpus=12 -nodefaults -boot menu=on,strict=on,reboot-timeout=1000,splash=/usr/share/qemu-server/bootsplash.jpg -vnc unix:/var/run/qemu-server/517.vnc,password=on -global kvm-pit.lost_tick_policy=discard -cpu host,hv_ipi,hv_relaxed,hv_reset,hv_runtime,hv_spinlocks=0x1fff,hv_stimer,hv_synic,hv_time,hv_vapic,hv_vpindex,+kvm_pv_eoi,+kvm_pv_unhalt -m 49152 -object iothread,id=iothread-virtio1 -global ICH9-LPC.disable_s3=1 -global ICH9-LPC.disable_s4=1 -readconfig /usr/share/qemu-server/pve-q35-4.0.cfg -device vmgenid,guid=23526ef7-3bf4-496b-9892-1ea6ba9bbeb8 -device usb-tablet,id=tablet,bus=ehci.0,port=1 -chardev socket,id=tpmchar,path=/var/run/qemu-server/517.swtpm -tpmdev emulator,id=tpmdev,chardev=tpmchar -device tpm-tis,tpmdev=tpmdev -device virtio-vga,id=vga,max_hostmem=16777216,bus=pcie.0,addr=0x1 -chardev socket,path=/var/run/qemu-server/517.qga,server=on,wait=off,id=qga0 -device virtio-serial,id=qga0,bus=pci.0,addr=0x8 -device virtserialport,chardev=qga0,name=org.qemu.guest_agent.0 -device virtio-serial,id=spice,bus=pci.0,addr=0x9 -chardev spicevmc,id=vdagent,name=vdagent -device virtserialport,chardev=vdagent,name=com.redhat.spice.0 -spice tls-port=61002,addr=127.0.0.1,tls-ciphers=HIGH,seamless-migration=on 
-device virtio-balloon-pci,id=balloon0,bus=pci.0,addr=0x3,free-page-reporting=on -iscsi initiator-name=iqn.1993-08.org.debian:01:ee6eef56d51 -drive if=none,id=drive-ide0,media=cdrom,aio=io_uring -device ide-cd,bus=ide.0,unit=0,drive=drive-ide0,id=ide0,bootindex=101 -drive file=rbd:ceph-nvme/vm-517-disk-3:conf=/etc/pve/ceph.conf:id=admin:keyring=/etc/pve/priv/ceph/ceph-nvme.keyring,if=none,id=drive-virtio1,format=raw,cache=none,aio=io_uring,detect-zeroes=on -device virtio-blk-pci,drive=drive-virtio1,id=virtio1,bus=pci.0,addr=0xb,iothread=iothread-virtio1 -device ahci,id=ahci0,multifunction=on,bus=pci.0,addr=0x7 -drive file=rbd:ceph-nvme/vm-517-disk-1:conf=/etc/pve/ceph.conf:id=admin:keyring=/etc/pve/priv/ceph/ceph-nvme.keyring,if=none,id=drive-sata0,discard=on,format=raw,cache=none,aio=io_uring,detect-zeroes=unmap -device ide-hd,bus=ahci0.0,drive=drive-sata0,id=sata0,rotation_rate=1,bootindex=100 -netdev type=tap,id=net0,ifname=tap517i0,script=/usr/libexec/qemu-server/pve-bridge,downscript=/usr/libexec/qemu-server/pve-bridgedown,vhost=on -device virtio-net-pci,mac=BC:24:11:9D:8E:E4,netdev=net0,bus=pci.0,addr=0x12,id=net0,rx_queue_size=1024,tx_queue_size=256 -rtc driftfix=slew,base=localtime -machine hpet=off,type=pc-q35-9.2+pve1
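For anyone who wants to run the same comparison on their own nodes, here is a rough sketch of how the VM config and the running kvm command line can be checked against each other. It is not a diagnosis. The function names (`config_disk_keys`, `cmdline_drive_keys`, `missing_drive_keys`) are hypothetical helpers, and the `drive-<key>` naming is an assumption read off the ps output above (for example `sata0` in the config and `id=drive-sata0` in the process). The sample data is shortened from the config and ps output in this post.

```shell
# Sketch only: list disk keys from a VM config that have no matching
# "id=drive-<key>" on the QEMU command line. Helper names are hypothetical.

# Print disk-like keys (efidisk0, ide0, sata0, tpmstate0, virtio1, ...) from a config on stdin.
config_disk_keys() {
    grep -oE '^(efidisk|ide|sata|scsi|tpmstate|virtio)[0-9]+' | sort -u
}

# Print drive IDs, without the "drive-" prefix, from a command line on stdin.
cmdline_drive_keys() {
    grep -oE 'id=drive-[a-z]+[0-9]+' | sed 's/^id=drive-//' | sort -u
}

# $1 = file with the VM config, $2 = file with the command line.
missing_drive_keys() {
    cfg_keys=$(config_disk_keys < "$1")
    drv_keys=$(cmdline_drive_keys < "$2")
    for key in $cfg_keys; do
        printf '%s\n' "$drv_keys" | grep -qxF "$key" || printf '%s\n' "$key"
    done
}

# Shortened sample data taken from this post.
tmp=$(mktemp -d)
cat > "$tmp/517.conf" <<'EOF'
efidisk0: ceph-nvme:vm-517-disk-0,efitype=4m,pre-enrolled-keys=1,size=1M
ide0: none,media=cdrom
sata0: ceph-nvme:vm-517-disk-1,discard=on,size=80G,ssd=1
scsihw: virtio-scsi-single
tpmstate0: ceph-nvme:vm-517-disk-4,size=4M,version=v2.0
unused0: ceph-nvme:vm-517-disk-2
virtio1: ceph-nvme:vm-517-disk-3,iothread=1,size=80G
EOF
cat > "$tmp/517.cmdline" <<'EOF'
-drive if=pflash,unit=1,id=drive-efidisk0 -drive if=none,id=drive-ide0,media=cdrom -drive if=none,id=drive-virtio1,format=raw -drive if=none,id=drive-sata0,discard=on -chardev socket,id=tpmchar,path=/var/run/qemu-server/517.swtpm
EOF

result=$(missing_drive_keys "$tmp/517.conf" "$tmp/517.cmdline")
printf '%s\n' "$result"   # prints: tpmstate0
rm -rf "$tmp"

# On a real node the inputs could be gathered like this (paths as seen in this post):
#   tr '\0' ' ' < /proc/"$(cat /var/run/qemu-server/517.pid)"/cmdline > /tmp/517.cmdline
#   missing_drive_keys /etc/pve/qemu-server/517.conf /tmp/517.cmdline
```

With the data from this post, the only key without a matching drive ID is `tpmstate0`. In the ps output the TPM appears to be attached through the swtpm chardev socket rather than a `-drive`, which is consistent with the wording of the error. It does not explain why the snapshot asks QEMU for that device in the first place.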