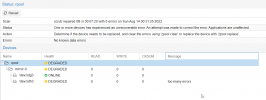

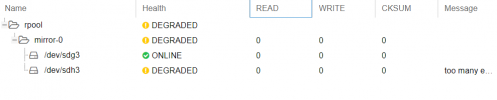

Rpool disk DEGRADED

- Thread starter YaseenKamala

I don't know if this will help, but here is the output of smartctl -a /dev/sdh3:

root@dw-srv-p4:~# smartctl -a /dev/sdh3

smartctl 7.2 2020-12-30 r5155 [x86_64-linux-5.4.128-1-pve] (local build)

Copyright (C) 2002-20, Bruce Allen, Christian Franke, www.smartmontools.org

=== START OF INFORMATION SECTION ===

Model Family: Samsung based SSDs

Device Model: Samsung SSD 860 PRO 1TB

Serial Number: S42NNF0K702967R

LU WWN Device Id: 5 002538 e405cb8c1

Firmware Version: RVM01B6Q

User Capacity: 1,024,209,543,168 bytes [1.02 TB]

Sector Size: 512 bytes logical/physical

Rotation Rate: Solid State Device

Form Factor: 2.5 inches

TRIM Command: Available, deterministic, zeroed

Device is: In smartctl database [for details use: -P show]

ATA Version is: ACS-4 T13/BSR INCITS 529 revision 5

SATA Version is: SATA 3.1, 6.0 Gb/s (current: 6.0 Gb/s)

Local Time is: Thu Apr 6 14:02:20 2023 CEST

SMART support is: Available - device has SMART capability.

SMART support is: Enabled

=== START OF READ SMART DATA SECTION ===

SMART overall-health self-assessment test result: PASSED

General SMART Values:

Offline data collection status: (0x00) Offline data collection activity

was never started.

Auto Offline Data Collection: Disabled.

Self-test execution status: ( 0) The previous self-test routine completed

without error or no self-test has ever

been run.

Total time to complete Offline

data collection: ( 0) seconds.

Offline data collection

capabilities: (0x53) SMART execute Offline immediate.

Auto Offline data collection on/off support.

Suspend Offline collection upon new

command.

No Offline surface scan supported.

Self-test supported.

No Conveyance Self-test supported.

Selective Self-test supported.

SMART capabilities: (0x0003) Saves SMART data before entering

power-saving mode.

Supports SMART auto save timer.

Error logging capability: (0x01) Error logging supported.

General Purpose Logging supported.

Short self-test routine

recommended polling time: ( 2) minutes.

Extended self-test routine

recommended polling time: ( 85) minutes.

SCT capabilities: (0x003d) SCT Status supported.

SCT Error Recovery Control supported.

SCT Feature Control supported.

SCT Data Table supported.

SMART Attributes Data Structure revision number: 1

Vendor Specific SMART Attributes with Thresholds:

ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE

5 Reallocated_Sector_Ct 0x0033 100 100 010 Pre-fail Always - 0

9 Power_On_Hours 0x0032 093 093 000 Old_age Always - 35281

12 Power_Cycle_Count 0x0032 099 099 000 Old_age Always - 41

177 Wear_Leveling_Count 0x0013 077 077 000 Pre-fail Always - 496

179 Used_Rsvd_Blk_Cnt_Tot 0x0013 100 100 010 Pre-fail Always - 0

181 Program_Fail_Cnt_Total 0x0032 100 100 010 Old_age Always - 0

182 Erase_Fail_Count_Total 0x0032 100 100 010 Old_age Always - 0

183 Runtime_Bad_Block 0x0013 100 100 010 Pre-fail Always - 0

187 Uncorrectable_Error_Cnt 0x0032 100 100 000 Old_age Always - 0

190 Airflow_Temperature_Cel 0x0032 074 042 000 Old_age Always - 26

195 ECC_Error_Rate 0x001a 200 200 000 Old_age Always - 0

199 CRC_Error_Count 0x003e 100 100 000 Old_age Always - 0

235 POR_Recovery_Count 0x0012 099 099 000 Old_age Always - 15

241 Total_LBAs_Written 0x0032 099 099 000 Old_age Always - 298608380386

SMART Error Log Version: 1

No Errors Logged

SMART Self-test log structure revision number 1

No self-tests have been logged. [To run self-tests, use: smartctl -t]

SMART Selective self-test log data structure revision number 1

SPAN MIN_LBA MAX_LBA CURRENT_TEST_STATUS

1 0 0 Not_testing

2 0 0 Not_testing

3 0 0 Not_testing

4 0 0 Not_testing

5 0 0 Not_testing

Selective self-test flags (0x0):

After scanning selected spans, do NOT read-scan remainder of disk.

If Selective self-test is pending on power-up, resume after 0 minute delay.
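A dump like this is easier to scan if the attribute table is parsed and only the counters that usually point to media or link problems are checked. The following is a minimal sketch, not part of the original post: the `WATCHED` set is an assumed selection of attribute names taken from the table above, and `parse_attributes` and `non_zero_watched` are hypothetical helper names.

```python
import re

# Attribute names whose non-zero raw value deserves a closer look.
# This selection is an assumption for illustration, not a vendor list.
WATCHED = {
    "Reallocated_Sector_Ct",
    "Used_Rsvd_Blk_Cnt_Tot",
    "Program_Fail_Cnt_Total",
    "Erase_Fail_Count_Total",
    "Runtime_Bad_Block",
    "Uncorrectable_Error_Cnt",
    "ECC_Error_Rate",
    "CRC_Error_Count",
}

# ID, name, flag, value, worst, thresh, type, updated, when_failed, raw
ATTR_LINE = re.compile(
    r"^\s*(\d+)\s+(\S+)\s+0x[0-9a-fA-F]+\s+(\d+)\s+(\d+)\s+(\d+)"
    r"\s+\S+\s+\S+\s+\S+\s+(\d+)"
)


def parse_attributes(report):
    """Return {attribute_name: {...}} for each attribute row in the report."""
    attrs = {}
    for line in report.splitlines():
        m = ATTR_LINE.match(line)
        if m:
            attr_id, name, value, worst, thresh, raw = m.groups()
            attrs[name] = {
                "id": int(attr_id),
                "value": int(value),
                "worst": int(worst),
                "thresh": int(thresh),
                "raw": int(raw),
            }
    return attrs


def non_zero_watched(attrs):
    """Names of watched attributes whose raw counter is above zero."""
    return sorted(n for n, a in attrs.items() if n in WATCHED and a["raw"] > 0)


# A few rows copied from the report above.
SAMPLE = """\
ID# ATTRIBUTE_NAME FLAG VALUE WORST THRESH TYPE UPDATED WHEN_FAILED RAW_VALUE
5 Reallocated_Sector_Ct 0x0033 100 100 010 Pre-fail Always - 0
177 Wear_Leveling_Count 0x0013 077 077 000 Pre-fail Always - 496
187 Uncorrectable_Error_Cnt 0x0032 100 100 000 Old_age Always - 0
199 CRC_Error_Count 0x003e 100 100 000 Old_age Always - 0
"""

attrs = parse_attributes(SAMPLE)
print(non_zero_watched(attrs))
```

In the report above every watched counter has a raw value of 0, so the list comes back empty, which matches the PASSED self-assessment. A clean SMART report does not rule out a problem elsewhere in the path to the disk.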

I would replace the SATA cable in case the SSD is fine and only the connection is bad. I would also try plugging it into another SATA port on your mainboard and check the SSD's power cable. If that doesn't help, the SSD may have a bad NAND chip or controller. In that case you could replace the disk as described in "replacing a failed bootable device": https://pve.proxmox.com/wiki/ZFS_on_Linux#_zfs_administration

Thanks for your help @Dunuin,

When I execute proxmox-boot-tool status, it does not work. This is what I get; do you know why?

# proxmox-boot-tool status

Re-executing '/usr/sbin/proxmox-boot-tool' in new private mount namespace..

E: /etc/kernel/proxmox-boot-uuids does not exist.

For your information, this system was originally version 5.x; I upgraded it last year to 6.4-13.

Do you know how risky the process is?

Thanks,

Yaseen

Here is how the disk partitions are laid out:

sdg 8:96 0 953.9G 0 disk

├─sdg1 8:97 0 1007K 0 part

├─sdg2 8:98 0 512M 0 part

└─sdg3 8:99 0 953.4G 0 part

sdh 8:112 0 953.9G 0 disk

├─sdh1 8:113 0 1007K 0 part

├─sdh2 8:114 0 512M 0 part

└─sdh3 8:115 0 953.4G 0 part
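A quick way to confirm that both disks carry the same partition layout is to group the partitions by disk and compare the sizes. This is only a sketch that assumes the column order shown in the listing above (name, major:minor, removable flag, size, read-only flag, type); `partition_layout` is a hypothetical helper name.

```python
def partition_layout(listing):
    """Map each disk name to the list of its partition sizes, in order."""
    layout = {}
    current = None
    for line in listing.splitlines():
        fields = line.split()
        if len(fields) < 6:
            continue  # skip blank or incomplete lines
        # Drop the tree-drawing characters in front of partition names.
        name = fields[0].lstrip("├└│─ ")
        size, kind = fields[3], fields[5]
        if kind == "disk":
            current = name
            layout[current] = []
        elif kind == "part" and current is not None:
            layout[current].append(size)
    return layout


# Listing copied from the post above.
SAMPLE = """\
sdg 8:96 0 953.9G 0 disk
├─sdg1 8:97 0 1007K 0 part
├─sdg2 8:98 0 512M 0 part
└─sdg3 8:99 0 953.4G 0 part
sdh 8:112 0 953.9G 0 disk
├─sdh1 8:113 0 1007K 0 part
├─sdh2 8:114 0 512M 0 part
└─sdh3 8:115 0 953.4G 0 part
"""

layout = partition_layout(SAMPLE)
same_layout = layout["sdg"] == layout["sdh"]
print(layout, same_layout)
```

For the listing above both disks show the same three partition sizes. Human-readable sizes are rounded, so this is a sanity check rather than a sector-exact comparison.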

I found this article, Proxmox Boot Tool. Here is the output of findmnt /:

Code:

root@dw-srv-p4:/# findmnt /

TARGET SOURCE FSTYPE OPTIONS

/ rpool/ROOT/pve-1 zfs rw,relatime,xattr,noacl

root@dw-srv-p4:/# cd /rpool/ROOT/pve-1

root@dw-srv-p4:/rpool/ROOT/pve-1# ls -al

total 1

drwxr-xr-x 2 root root 2 Mar 5 2019 .

drwxr-xr-x 3 root root 3 Mar 5 2019 ..

I think it says /etc/kernel/proxmox-boot-uuids does not exist because it is referring to the old machine name, which was pve-1; as you can see, the machine name is now dw-srv-p4. Can anyone confirm that, please? If so, how can it be corrected to point to the new machine name?

"pve-1" isn't the machine name, it's the name of the default ZFS dataset PVE uses as its root filesystem. That is always "pve-1".

I did not know that, thanks for the information. But then why can't I execute 'proxmox-boot-tool status'? Do you have any idea?

You installed with PVE 5.4, so your system isn't configured to use the proxmox-boot-tool. You would need to move from legacy GRUB to proxmox-boot-tool as described in your linked wiki article. "proxmox-boot-tool status" probably can't find them because they never existed.
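The error earlier in the thread comes down to one missing file, /etc/kernel/proxmox-boot-uuids. A small check for that file gives a rough hint whether a system has been set up for proxmox-boot-tool. This is a sketch under that single assumption; `has_boot_uuids` is a hypothetical helper, and the temporary directory only stands in for a real root filesystem.

```python
import tempfile
from pathlib import Path


def has_boot_uuids(root="/"):
    """True if etc/kernel/proxmox-boot-uuids exists under the given root."""
    return (Path(root) / "etc/kernel/proxmox-boot-uuids").is_file()


# Demonstration against a throwaway directory instead of the real "/".
with tempfile.TemporaryDirectory() as fake_root:
    before = has_boot_uuids(fake_root)  # file not created yet

    kernel_dir = Path(fake_root) / "etc/kernel"
    kernel_dir.mkdir(parents=True)
    (kernel_dir / "proxmox-boot-uuids").write_text("")
    after = has_boot_uuids(fake_root)  # file now present

print(before, after)
```

On a real host, calling `has_boot_uuids()` with the default root would report whether the file is there. The presence of the file is only a heuristic and does not prove the boot setup is otherwise complete.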