Hi,

I have a problem.

I want to replicate a server with multiple HDDs.

If I enable replication to another node, I get an "out of space" error.

Code:

2023-07-21 14:04:06 132-0: start replication job

2023-07-21 14:04:07 132-0: guest => VM 132, running => 1906

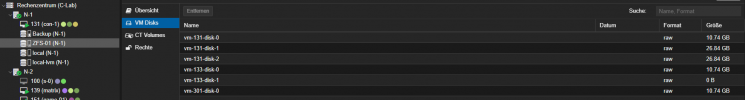

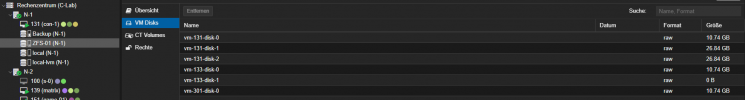

2023-07-21 14:04:07 132-0: volumes => ZFS-01:vm-132-disk-0,ZFS-01:vm-132-disk-1,ZFS-01:vm-132-disk-2,ZFS-01:vm-132-disk-3,ZFS-01:vm-132-disk-4

2023-07-21 14:04:08 132-0: freeze guest filesystem

2023-07-21 14:04:09 132-0: create snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-0

2023-07-21 14:04:09 132-0: create snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-1

2023-07-21 14:04:09 132-0: create snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-2

2023-07-21 14:04:09 132-0: create snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-3

2023-07-21 14:04:09 132-0: thaw guest filesystem

2023-07-21 14:04:09 132-0: delete previous replication snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-0

2023-07-21 14:04:09 132-0: delete previous replication snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-1

2023-07-21 14:04:09 132-0: delete previous replication snapshot '__replicate_132-0_1689941046__' on ZFS-01:vm-132-disk-2

2023-07-21 14:04:09 132-0: end replication job with error: zfs error: cannot create snapshot 'ZFS-01/vm-132-disk-3@__replicate_132-0_1689941046__': out of spaceOn the Target Node the Space is free:

Why i get the Error? Other VMs work fine.

Other VMs:

Thanks
