Hi,

I created a PVE cluster connecting nodes using Tinc on a gigabit connection on Hetzner.

This is needed because I'm going to migrate all VMs to new nodes and I want to do so incrementally using the built-in PVE replication.

For this reason I wanted to connect old and new nodes in a cluster and use pvesr.

Tinc VPN is working good and all nodes see themselves in the

I created a new cluster and added nodes specifying this network with the command

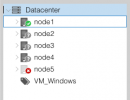

The nodes correctly connected to the cluster but when I tried connect the fifth node the situation is the following:

Last lines from syslog:

PVE Version (I'm still on 6.4 here, but new nodes are 7.2):

I know that this is a "non standard" configuration, but I need it only during the switchover.

After I transferred all VMs to new node I will recreate the cluster using the local network, so I need it to work only for a couple of weeks.

Thank you very much!

Bye

I created a PVE cluster connecting nodes using Tinc on a gigabit connection on Hetzner.

This is needed because I'm going to migrate all VMs to new nodes and I want to do so incrementally using the built-in PVE replication.

For this reason I wanted to connect old and new nodes in a cluster and use pvesr.

Tinc VPN is working well and all nodes see each other in the 192.168.85.0/24 network.

I created a new cluster and added nodes specifying this network with the command `pvecm add node1 --use_ssh 1 -link0 192.168.85.X`.

The nodes connected to the cluster correctly, but when I tried to connect the fifth node the situation became the following:

Code:

root@node1:~# pvecm nodes

Membership information

----------------------

Nodeid Votes Name

1 1 node1 (local)

2 1 node2

3 1 node3

5 1 node5

Code:

root@node1:~# pvecm status

Cluster information

-------------------

Name: cluster

Config Version: 5

Transport: knet

Secure auth: on

Quorum information

------------------

Date: Fri Sep 9 10:57:34 2022

Quorum provider: corosync_votequorum

Nodes: 4

Node ID: 0x00000001

Ring ID: 1.c199e

Quorate: Yes

Votequorum information

----------------------

Expected votes: 5

Highest expected: 5

Total votes: 4

Quorum: 3

Flags: Quorate

Membership information

----------------------

Nodeid Votes Name

0x00000001 1 192.168.85.1 (local)

0x00000002 1 192.168.85.2

0x00000003 1 192.168.85.3

0x00000005 1 192.168.85.5

Last lines from syslog:

Code:

root@node1:~# tail /var/log/syslog

Sep 9 10:59:00 node1 systemd[1]: Starting Proxmox VE replication runner...

Sep 9 10:59:02 node1 corosync[2113720]: [TOTEM ] Token has not been received in 8144 ms

Sep 9 10:59:03 node1 corosync[2113720]: [QUORUM] Sync members[4]: 1 2 3 5

Sep 9 10:59:03 node1 corosync[2113720]: [TOTEM ] A new membership (1.c1a2a) was formed. Members

Sep 9 10:59:03 node1 corosync[2113720]: [QUORUM] Members[4]: 1 2 3 5

Sep 9 10:59:03 node1 corosync[2113720]: [MAIN ] Completed service synchronization, ready to provide service.

Sep 9 10:59:03 node1 pvesr[344229]: trying to acquire cfs lock 'file-replication_cfg' ...

Sep 9 10:59:04 node1 pvesr[344229]: trying to acquire cfs lock 'file-replication_cfg' ...

Sep 9 10:59:05 node1 systemd[1]: pvesr.service: Succeeded.

Sep 9 10:59:05 node1 systemd[1]: Started Proxmox VE replication runner.

PVE Version (I'm still on 6.4 here, but new nodes are 7.2):

Code:

root@node1:~# pveversion -v

proxmox-ve: 6.4-1 (running kernel: 5.4.128-1-pve)

pve-manager: 6.4-13 (running version: 6.4-13/9f411e79)

pve-kernel-5.4: 6.4-5

pve-kernel-helper: 6.4-5

pve-kernel-5.4.128-1-pve: 5.4.128-2

pve-kernel-5.4.78-2-pve: 5.4.78-2

pve-kernel-5.4.73-1-pve: 5.4.73-1

ceph: 15.2.14-pve1~bpo10

ceph-fuse: 15.2.14-pve1~bpo10

corosync: 3.1.2-pve1

criu: 3.11-3

glusterfs-client: 5.5-3

ifupdown: 0.8.35+pve1

ksm-control-daemon: 1.3-1

libjs-extjs: 6.0.1-10

libknet1: 1.20-pve1

libproxmox-acme-perl: 1.1.0

libproxmox-backup-qemu0: 1.1.0-1

libpve-access-control: 6.4-3

libpve-apiclient-perl: 3.1-3

libpve-common-perl: 6.4-3

libpve-guest-common-perl: 3.1-5

libpve-http-server-perl: 3.2-3

libpve-storage-perl: 6.4-1

libqb0: 1.0.5-1

libspice-server1: 0.14.2-4~pve6+1

lvm2: 2.03.02-pve4

lxc-pve: 4.0.6-2

lxcfs: 4.0.6-pve1

novnc-pve: 1.1.0-1

proxmox-backup-client: 1.1.13-2

proxmox-mini-journalreader: 1.1-1

proxmox-widget-toolkit: 2.6-1

pve-cluster: 6.4-1

pve-container: 3.3-6

pve-docs: 6.4-2

pve-edk2-firmware: 2.20200531-1

pve-firewall: 4.1-4

pve-firmware: 3.2-4

pve-ha-manager: 3.1-1

pve-i18n: 2.3-1

pve-qemu-kvm: 5.2.0-6

pve-xtermjs: 4.7.0-3

qemu-server: 6.4-2

smartmontools: 7.2-pve2

spiceterm: 3.1-1

vncterm: 1.6-2

zfsutils-linux: 2.0.5-pve1~bpo10+1

I know that this is a "non-standard" configuration, but I need it only during the switchover.

After I have transferred all VMs to the new nodes, I will recreate the cluster using the local network, so I only need this to work for a couple of weeks.
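As a side note on reading the `pvecm status` output above: the reported quorum of 3 is what a simple majority of the 5 expected votes gives, so the cluster stays quorate with 4 votes present even though one node is missing. A minimal sketch of that arithmetic, assuming the default votequorum majority rule (half the expected votes, rounded down, plus one) and no special options such as `two_node`; the function names are hypothetical and only illustrate the calculation:

```python
def quorum_threshold(expected_votes: int) -> int:
    """Votes needed for a simple majority of the expected votes."""
    return expected_votes // 2 + 1


def is_quorate(total_votes: int, expected_votes: int) -> bool:
    """True when the votes currently present reach the majority threshold."""
    return total_votes >= quorum_threshold(expected_votes)


# Values copied from the "Votequorum information" section of the output.
expected_votes = 5  # "Expected votes"
total_votes = 4     # "Total votes"

print(quorum_threshold(expected_votes))         # 3, matches "Quorum: 3"
print(is_quorate(total_votes, expected_votes))  # True, matches "Flags: Quorate"
```

So the quorum numbers themselves look consistent; what stands out is the missing fifth vote and the repeated membership changes in the syslog.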

Thank you very much!

Bye