Hi,

I have a four-node Proxmox 9.1.2 cluster with fibre-attached shared storage.

One of the nodes suffered a system board failure that took it down and left it inaccessible. While researching how to recover from a dead node, I saw that the recommendation is to evict the dead host from the cluster and rebuild it once the server has been repaired.

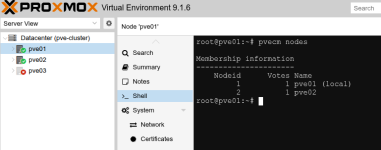

Since the host had already been down for over two days, I went ahead and evicted it from the cluster by running the "pvecm delnode" command from one of the running nodes. This failed with an error stating that the dead node did not exist, and running "pvecm nodes" on a running node did not list the dead node either. However, the dead node was still displayed in the web GUI. I was able to clean up the GUI by deleting the dead node's entry from the corosync.conf file.
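For reference, a node's entry in corosync.conf is a `node { ... }` block inside the `nodelist` section. The Python sketch below only reads such a file and lists those blocks; it does not modify anything. The sample config, node names (`pve1`, `pve4`), IDs and addresses are hypothetical, and the parser assumes the simple one-statement-per-line layout shown, not the full corosync.conf grammar.

```python
# Hypothetical corosync.conf excerpt: names, IDs and addresses are made up
# for illustration; a real cluster's file will contain different values.
SAMPLE_CONF = """
nodelist {
  node {
    name: pve1
    nodeid: 1
    quorum_votes: 1
    ring0_addr: 10.0.0.1
  }
  node {
    name: pve4
    nodeid: 4
    quorum_votes: 1
    ring0_addr: 10.0.0.4
  }
}

totem {
  cluster_name: example
  config_version: 5
}
"""


def node_blocks(conf_text):
    """Return one dict of key/value pairs per `node { ... }` block in `nodelist`.

    Assumes one statement per line, as in the sample above.
    """
    nodes = []
    stack = []       # names of the sections we are currently inside
    current = None   # key/value pairs of the node block being read
    for raw in conf_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.endswith("{"):
            stack.append(line[:-1].strip())
            if stack == ["nodelist", "node"]:
                current = {}  # entering a node block
        elif line == "}":
            if stack == ["nodelist", "node"] and current is not None:
                nodes.append(current)  # leaving a node block
                current = None
            stack.pop()
        elif current is not None and ":" in line:
            key, value = line.split(":", 1)
            current[key.strip()] = value.strip()
    return nodes


if __name__ == "__main__":
    for node in node_blocks(SAMPLE_CONF):
        print(node["nodeid"], node["name"], node["ring0_addr"])
```

With the sample above this prints one line per node block (`pve1` and `pve4`); removing a node from the file means deleting its whole `node { ... }` block.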

I have some questions about this, and about how Proxmox determines that a node is not coming back.

1. How long does a node need to be down before the other nodes in the cluster "remove" it from the quorum? For example, the output of the pvecm nodes command did not show the dead node.

2. I "cleaned up" the dead node from the GUI by editing the corosync.conf file. Is this the correct way to remove the node from the GUI? If not, what else needs to be done?

3. What is the correct approach to bringing a node back up after it has been down for an extended period (days)? Manual cleanup? A rebuild?

Thanks,

Henry