Hi,

I’m running Proxmox VE 9.1.4 on Debian 13 on multiple Huawei 2288H V5 nodes. The servers use an OEM Broadcom/LSI MegaRAID SAS3508 controller.

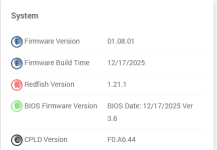

Hardware / software details:

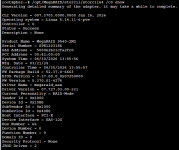

Key kernel logs:

At this point it looks like a compatibility issue between newer Linux kernels and old MegaRAID firmware, not something Proxmox-specific.
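One way to check that hypothesis is to count firmware fault and reset events per boot under each kernel (for example 6.17.4-2-pve versus 6.14.11-5-pve). Below is a minimal Python sketch, assuming the kernel log has been saved as text (for instance from `journalctl -k -b`). The patterns are copied from the megaraid_sas messages quoted in this post, and the name `count_events` is only illustrative.

```python
import re
from collections import Counter

# Patterns copied from the megaraid_sas messages quoted in this post.
FAULT_RE = re.compile(r"megaraid_sas (\S+): FW in FAULT state")
RESET_RE = re.compile(r"megaraid_sas (\S+): Reset successful")


def count_events(lines):
    """Return (faults, resets) as Counters keyed by PCI address."""
    faults, resets = Counter(), Counter()
    for line in lines:
        match = FAULT_RE.search(line)
        if match:
            faults[match.group(1)] += 1
            continue
        match = RESET_RE.search(line)
        if match:
            resets[match.group(1)] += 1
    return faults, resets


# Demo on the log excerpt from this post.
SAMPLE = [
    "megaraid_sas 0000:1c:00.0: Fatal firmware error: Line 169 in fw/raid/utils.c",
    "megaraid_sas 0000:1c:00.0: FW in FAULT state Fault code:0x10000",
    "megaraid_sas 0000:1c:00.0: resetting fusion adapter",
    "megaraid_sas 0000:1c:00.0: Reset successful",
]
faults, resets = count_events(SAMPLE)
for addr in sorted(set(faults) | set(resets)):
    print(f"{addr}: {faults[addr]} fault(s), {resets[addr]} reset(s)")
```

Running the same count on logs from each kernel, over a comparable uptime, would give a rough before/after comparison. It would not prove the root cause.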

Hardware / software details:

- Server: Huawei 2288H V5

- RAID controller: Broadcom / LSI SAS3508 (OEM Huawei)

- RAID firmware: 5.140.00-3319 (Huawei confirmed this firmware is EOL, last supported on Debian 10)

- Proxmox VE: 9.1.4

- OS: Debian 13

- Kernel: 6.17.4-2-pve

- Driver: megaraid_sas (in-kernel driver from the Linux kernel, no out-of-tree module)

Key kernel logs:

Code:

megaraid_sas 0000:1c:00.0: Fatal firmware error: Line 169 in fw/raid/utils.c

megaraid_sas 0000:1c:00.0: FW in FAULT state Fault code:0x10000

megaraid_sas 0000:1c:00.0: resetting fusion adapter

megaraid_sas 0000:1c:00.0: Reset successful

megaraid_sas 0000:1c:00.0: Controller encountered an error and was reset

Has anyone seen the same SAS3508 + Proxmox (Debian 12/13) behavior? I’m considering rolling back to kernel 6.14.11-5-pve. I’ve also found multiple reports online where stability issues with MegaRAID controllers were mitigated by adding the following kernel parameters via GRUB:

pcie_aspm=off pci=noaer megaraid_sas.msix_disable=1

These seem to reduce firmware hangs and unexpected controller resets on older MegaRAID firmware when running newer kernels.
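On a GRUB-booted node these parameters would normally be appended to `GRUB_CMDLINE_LINUX_DEFAULT` in `/etc/default/grub`, followed by `update-grub` and a reboot. Nodes that boot via systemd-boot use `/etc/kernel/cmdline` and `proxmox-boot-tool refresh` instead. To confirm after the reboot that the parameters are actually active, something like the following Python sketch could be used. The helper names `missing_params` and `read_cmdline` are hypothetical, and the example command line is made up for illustration.

```python
# Parameters discussed in this post.
WANTED = ["pcie_aspm=off", "pci=noaer", "megaraid_sas.msix_disable=1"]


def missing_params(cmdline, wanted=WANTED):
    """Return the wanted parameters that are absent from a kernel command line."""
    active = set(cmdline.split())
    return [param for param in wanted if param not in active]


def read_cmdline(path="/proc/cmdline"):
    """Read the command line the running kernel was booted with."""
    with open(path) as handle:
        return handle.read()


# Illustrative command line (not taken from a real node).
example = "BOOT_IMAGE=/boot/vmlinuz-6.17.4-2-pve root=/dev/mapper/pve-root ro quiet pcie_aspm=off pci=noaer"
print(missing_params(example))
```

On a real node, `missing_params(read_cmdline())` returning an empty list would indicate that all three parameters were picked up at boot.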