Well, with the 6.18 kernel being absolutely unusable due to SATA controller dropouts crashing containers using NFS, and occasionally the entire Proxmox host, I expected a repeat with 7.0. Happy to say that it passed every test that would guarantee a crash on 6.18, and after 24 hours of uptime I don't notice any real issues. Awesome.

Opt-in Linux 7.0 Kernel for Proxmox VE 9 available on test

- Thread starter t.lamprecht


Can't install proxmox-kernel-7.0, but that was due to having no space left on /boot/efi (1 GB). Running `apt autoremove` helped. Just wanted to post it here in case anyone has the same situation.

```
Continue? [Y/n] Y
Setting up proxmox-kernel-7.0.0-1-rc7-pve-signed (7.0.0-1~rc7+1) ...
Examining /etc/kernel/postinst.d.
run-parts: executing /etc/kernel/postinst.d/initramfs-tools 7.0.0-1-rc7-pve /boot/vmlinuz-7.0.0-1-rc7-pve
update-initramfs: Generating /boot/initrd.img-7.0.0-1-rc7-pve
Running hook script 'zz-proxmox-boot'..
Re-executing '/etc/kernel/postinst.d/zz-proxmox-boot' in new private mount namespace..
No /etc/kernel/proxmox-boot-uuids found, skipping ESP sync.
Updating kernel version 7.0.0-1-rc7-pve in systemd-boot...
install: error writing '/boot/efi/1446e4c3f5ea48c4a3bbc9c2ba342a6d/7.0.0-1-rc7-pve/linux': No space left on device
Error: could not copy '/boot/vmlinuz-7.0.0-1-rc7-pve' to '/boot/efi/1446e4c3f5ea48c4a3bbc9c2ba342a6d/7.0.0-1-rc7-pve/linux'.
/usr/lib/kernel/install.d/90-loaderentry.install failed with exit status 1.
run-parts: /etc/initramfs/post-update.d//systemd-boot exited with return code 1
run-parts: /etc/kernel/postinst.d/initramfs-tools exited with return code 1
Failed to process /etc/kernel/postinst.d at /var/lib/dpkg/info/proxmox-kernel-7.0.0-1-rc7-pve-signed.postinst line 20.
dpkg: error processing package proxmox-kernel-7.0.0-1-rc7-pve-signed (--configure):
installed proxmox-kernel-7.0.0-1-rc7-pve-signed package post-installation script subprocess returned error exit status 2
dpkg: dependency problems prevent configuration of proxmox-kernel-7.0:
proxmox-kernel-7.0 depends on proxmox-kernel-7.0.0-1-rc7-pve-signed | proxmox-kernel-7.0.0-1-rc7-pve; however:
Package proxmox-kernel-7.0.0-1-rc7-pve-signed is not configured yet.
Package proxmox-kernel-7.0.0-1-rc7-pve is not installed.
Package proxmox-kernel-7.0.0-1-rc7-pve-signed which provides proxmox-kernel-7.0.0-1-rc7-pve is not configured yet.
dpkg: error processing package proxmox-kernel-7.0 (--configure):
dependency problems - leaving unconfigured
Errors were encountered while processing:
proxmox-kernel-7.0.0-1-rc7-pve-signed
proxmox-kernel-7.0
Error: Sub-process /usr/bin/dpkg returned an error code (1)
```

```
root@pveneo:~# apt autoremove
REMOVING:
libtlsrpt0 proxmox-kernel-6.14.11-1-pve-signed proxmox-kernel-6.17.13-1-pve-signed proxmox-kernel-6.17.4-1-pve-signed proxmox-rrd-migration-tool
libzpool6linux proxmox-kernel-6.14.11-4-pve-signed proxmox-kernel-6.17.2-1-pve-signed proxmox-kernel-6.17.4-2-pve-signed
menu proxmox-kernel-6.14.11-5-pve-signed proxmox-kernel-6.17.2-2-pve-signed proxmox-kernel-6.17.9-1-pve-signed
```

On removal it also set up the proxmox-kernel-7.0 package that the server had tried to install before. After a reboot, the node is online with kernel 7.0.
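The failure above happens when the 1 GB ESP fills up while systemd-boot copies the new kernel to /boot/efi. A pre-flight check could look like the sketch below. The helper names and the 16 MiB reserve are hypothetical, and the sketch assumes both the kernel image and its initrd end up on the ESP. The `.../7.0.0-1-rc7-pve/linux` path in the log confirms this only for the kernel.

```python
import os
import shutil

# Hypothetical pre-flight check, not part of any Proxmox tooling.
RESERVE_BYTES = 16 * 1024 * 1024  # assumed safety margin


def bytes_needed(kernel_image: str, initrd_image: str) -> int:
    """Bytes the kernel and initrd will occupy once copied to the ESP."""
    return os.path.getsize(kernel_image) + os.path.getsize(initrd_image)


def esp_has_room(esp_mount: str, kernel_image: str, initrd_image: str,
                 reserve_bytes: int = RESERVE_BYTES) -> bool:
    """True if the filesystem holding esp_mount can take both files plus the reserve."""
    free = shutil.disk_usage(esp_mount).free
    return free >= bytes_needed(kernel_image, initrd_image) + reserve_bytes
```

On a host this would be called as, for example, `esp_has_room("/boot/efi", "/boot/vmlinuz-7.0.0-1-rc7-pve", "/boot/initrd.img-7.0.0-1-rc7-pve")`. If it returns False, removing old kernels with `apt autoremove`, as done here, is one way to free space.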

I really wish I hadn't gone with the default partitioning sometimes because of stuff like that, and the root LVM "only" being 100 GB by default.

I have found one minor bug: when backups are running, my web UI gets really slow and tasks (container console, login, etc.) keep timing out. SSH to the node is fine and all my containers keep running normally, but the second the backup is done it's like nothing happened, even without reloading the page.

I'm running a Tailscale subnet router on a VM, and after upgrading, the download speed tanked to ~0.2 Mbps from the normal ~100 Mbps.

Weirdly, the upload speed was fine.

I tested using OpenSpeedTest on another VM; both of them are up-to-date Debian trixie installs.

The VMs have no iptables rules, and Tailscale gets a direct connection according to `tailscale status`.

I read that kernel 7.0 now has SR-IOV enabled already. Any hint on how to use or enable it?

I would need it for the Intel iGPU (265H).

Doesn't work on my 245K:

```
[ 4.241268] i915 0000:00:02.0: driver does not support SR-IOV configuration via sysfs
```

Even though lspci shows it is supported:

```
Capabilities: [320 v1] Single Root I/O Virtualization (SR-IOV)
IOVCap: Migration- 10BitTagReq+ IntMsgNum 0
IOVCtl: Enable- Migration- Interrupt- MSE- ARIHierarchy- 10BitTagReq-
IOVSta: Migration-
Initial VFs: 7, Total VFs: 7, Number of VFs: 0, Function Dependency Link: 00
VF offset: 1, stride: 1, Device ID: 7d67
Supported Page Size: 00000553, System Page Size: 00000001
Region 0: Memory at 000000b010000000 (64-bit, prefetchable)
VF Migration: offset: 00000000, BIR: 0
```

But the Intel website states it's unsupported on Meteor Lake:

https://www.intel.com/content/www/us/en/support/articles/000093216/graphics/processor-graphics.html
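For reference, "configuration via sysfs" in the i915 message above refers to the generic PCI SR-IOV interface. The kernel exposes `sriov_totalvfs` and `sriov_numvfs` under `/sys/bus/pci/devices/<address>/`, and writing a count to `sriov_numvfs` asks the bound driver to create that many VFs.

The sketch below does exactly that; the helper names are hypothetical. With a driver that does not implement this hook, as the dmesg line above reports for i915, the final write is expected to raise an `OSError` instead of creating VFs. The `root` parameter exists only so the logic can be exercised against a fake directory tree.

```python
from pathlib import Path

# Generic PCI SR-IOV sysfs location; the device address is passed in by the caller.
SYSFS_PCI = Path("/sys/bus/pci/devices")


def read_int(path: Path) -> int:
    return int(path.read_text().strip())


def enable_vfs(bdf: str, count: int, root: Path = SYSFS_PCI) -> int:
    """Request `count` VFs on PCI device `bdf` and return the VF count read back."""
    dev = root / bdf
    total = read_int(dev / "sriov_totalvfs")
    if not 0 <= count <= total:
        raise ValueError(f"{bdf} supports at most {total} VFs, requested {count}")
    numvfs = dev / "sriov_numvfs"
    current = read_int(numvfs)
    if current == count:
        return current
    # The kernel refuses to switch directly between two non-zero VF counts
    # (EBUSY), so drop back to 0 first when VFs are already enabled.
    if count != 0 and current != 0:
        numvfs.write_text("0")
    # Raises OSError if the bound driver has no SR-IOV sysfs support.
    numvfs.write_text(str(count))
    return read_int(numvfs)
```

Run as root on the host, `enable_vfs("0000:00:02.0", 7)` would request seven VFs on the iGPU address shown in the dmesg line above.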


But according to the Intel site it should work, because the 245K and my 265H are Arrow Lake CPUs, which should support it.

Meteor Lake CPUs are the Core Ultra 1xx series.

| Intel® Core™ Ultra Processor (Series 2) processor family (Formerly Known as Arrow Lake) | Single Root IO Virtualization (SR-IOV) |


I'm really confused by Intel's new naming scheme, but I assumed the 245K is Core Ultra 100 series (Meteor Lake) and that Core Ultra 200 is the new Arrow Lake Refresh release? Anyway, it works just fine with the patched strongtz/i915-sriov-dkms on 6.19 and does not work on upstream 7.0.

If you have any suggestion for how I can test SR-IOV on 7.0, please let me know and I'll try it. Neither sysfs nor `options i915 max_vfs=7` works.

```
$ lspci | grep -iE "(meteor|arrow)"
00:01.0 PCI bridge: Intel Corporation Meteor Lake-H PCIe Root Port (rev 10)
00:02.0 VGA compatible controller: Intel Corporation Arrow Lake-S [Intel Graphics] (rev 06)
00:07.0 PCI bridge: Intel Corporation Meteor Lake-P Thunderbolt 4 PCI Express Root Port #0 (rev 10)
00:07.1 PCI bridge: Intel Corporation Meteor Lake-P Thunderbolt 4 PCI Express Root Port #1 (rev 10)
00:0b.0 Processing accelerators: Intel Corporation Arrow Lake NPU (rev 01)
00:0d.0 USB controller: Intel Corporation Meteor Lake-P Thunderbolt 4 USB Controller (rev 10)
00:0d.2 USB controller: Intel Corporation Meteor Lake-P Thunderbolt 4 NHI #0 (rev 10)
```

Meteor Lake covered only the mobile Core Ultra 100 CPUs:

https://www.intel.de/content/www/de...name/90353/products-formerly-meteor-lake.html

Arrow Lake is Core Ultra 200, for both desktop and mobile:

https://www.intel.de/content/www/de...name/225837/products-formerly-arrow-lake.html

Yours:

https://www.intel.de/content/www/de...-24m-cache-up-to-5-20-ghz/specifications.html

Same here; it doesn't work with the sysfs parameter and the GRUB kernel parameter alone.


Some people say that SR-IOV has been deprecated in the i915 driver.

Furthermore, I believe that platforms the Xe driver does not support for SR-IOV (such as MTL) will not work as a result.

Wouldn't it be better to discuss this on Intel's Git?

https://github.com/strongtz/i915-sriov-dkms#

README

Xe module currently does not support MTL (Meteor Lake) and LNL (Lunar Lake) platforms. Please use i915 instead.

https://github.com/intel/linux-intel-lts/issues/33#issuecomment-3247751213

https://github.com/strongtz/i915-sriov-dkms/issues/345


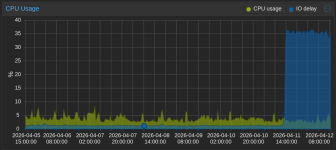

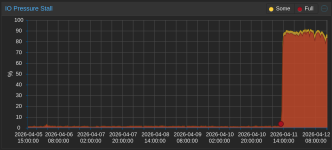

We also observed an increase in IO delay / IO pressure stall after upgrading our test hypervisors yesterday (11.04.2026 ~12:00). Even though the numbers look way off the charts, I have not noticed any performance regressions so far. Thanks for making Linux 7.0 available this early!

This most likely stems from the newer QEMU 10.2, which is also available on the test repository, and is likely an accounting issue, but we're looking into it in any case.
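To check whether such a jump reflects real stalls or only accounting, one option is to read the kernel's Pressure Stall Information (PSI) directly. `/proc/pressure/io` holds a `some` line and a `full` line. Each line has `avg10`, `avg60` and `avg300` (percentages) and `total` (cumulative stall time in microseconds); cgroup v2 groups expose the same format in their `io.pressure` file.

The parser below is a minimal sketch with hypothetical helper names. It makes no claim about how the Proxmox GUI computes its graphs.

```python
from pathlib import Path


def parse_psi(text: str) -> dict:
    """Parse PSI text such as /proc/pressure/io into {"some": {...}, "full": {...}}."""
    result = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        kind, *fields = line.split()
        values = {}
        for field in fields:
            key, _, value = field.partition("=")
            values[key] = float(value)
        result[kind] = values
    return result


def read_io_pressure(path: str = "/proc/pressure/io") -> dict:
    """Host-wide IO pressure; pass a cgroup's io.pressure path for a single guest."""
    return parse_psi(Path(path).read_text())
```

Sampling `read_io_pressure()["some"]["total"]` twice and taking the difference gives stall time per interval. That figure can then be compared with what the graphs show before and after holding back the QEMU package.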

FYI, here is one of our 'default' VM configs for the VMs running on these HVs:

```
agent: 1
bios: ovmf
boot: order=virtio0
cores: 8
cpu: x86-64-v3
efidisk0: ceph-nvme01:vm-106-disk-0,efitype=4m,pre-enrolled-keys=1,size=528K
hotplug: disk,network
machine: q35
memory: 24576
name: <redacted>
net0: virtio=C6:45:40:46:FB:BA,bridge=vmbr0,tag=400
numa: 0
onboot: 1
ostype: l26
scsihw: virtio-scsi-single
smbios1: <redacted>
sockets: 1
tablet: 0
tags: <redacted>
vga: virtio
virtio0: ceph-nvme01:vm-106-disk-1,discard=on,iothread=1,size=20G
virtio1: ceph-nvme01:vm-106-disk-2,discard=on,iothread=1,size=50G
virtio2: ceph-nvme01:vm-106-disk-3,discard=on,iothread=1,size=300G
vmgenid: <redacted>
```


Here you go: in case you haven't already found it, this is courtesy of @uzumo in this thread https://forum.proxmox.com/threads/a...talls”-patches-in-the-test-repository.182186/

In short, it's not the kernel update itself but the pve-qemu-kvm package, and the Proxmox gurus have confirmed it is cosmetic only and are working on a fix.

Get the best of both worlds: keep testing the 7.0 kernel along with the other updates while holding back pve-qemu-kvm, per uzumo!

```
apt reinstall pve-qemu-kvm=10.1.2-7
apt-mark hold pve-qemu-kvm
apt-get dist-upgrade
```

Happy Proxmox!

Really appreciate the 7.0 preview here. I've had very specific issues with an exotic (some might say stupid) setup: OPNsense as a guest VM with a Mellanox ConnectX-4 Lx (mlx5 driver) passed through. I had to hard reset over and over, as software reboots would cause the card to hang; only a full power off and power on would get the card working correctly in passthrough mode.


Issue 1 - Jan 20 (Boot -25) - 6.17.4-2-pve mlx5 NIC firmware crash: assert_var[1] = 0xbadc0ffe

- Both ports on card 83:00.0 and 83:00.1 simultaneously threw severity 3 firmware internal errors

- synd 0x1 / ext_synd 0x8a47 - the 0xbadc0ffe sentinel is Mellanox's "bad coffee" marker for a firmware assertion failure

- Escalated to "device's health compromised - reached miss count" on both ports

- The driver then unloaded the E-Switch on both, forcing a reboot ~45 minutes later

- Multiple kvm / CPU 0/KVM threads stuck in D state (uninterruptible sleep)

- All stuck in do_swap_page → migration_entry_wait while VFIO was active (vfio_pci_rw, vfio_basic_config_read)

The VMs' KVM threads were deadlocking on memory page migration interacting with VFIO/PCI (mlx5 passthrough).

Looking around, I noticed the 7.0 changelog includes these mlx5-related merge branches: mlx5-misc-fixes-2026-02-18, mlx5-misc-fixes-2026-02-24, mlx5-misc-fixes-2026-03-05, mlx5-misc-fixes-2026-03-16, mlx5-misc-fixes-2026-03-30, mlx5-next, net-mlx5e-rx-datapath-enhancements, net-mlx5e-save-per-channel-async-icosq-in-default, net-mlx5-hws-single-flow-counter-support, devlink-and-mlx5-support-cross-function-rate-scheduling, mlx5-add-tso-support-for-udp-over-gre-over-vlan, and disable-interrupts-and-ensure-dbell-updation.

Either way, so far I'm good to go here: a few reboots down with no issues. I'm cheap; these cards are cheap, and they're fast. I doubt a lot of these fixes will be backported, but it's hard to say; the dependencies seem intense.


I've just updated to the final 7.0.0-1-pve and everything's running fine, except that after installation I had to pin this kernel, as the system would always choose the "old" 7.0.0-1-rc7-pve one (tried on different machines without luck).

So I did a:

`# pve-efiboot-tool kernel pin 7.0.0-1-pve`

The issue occurs because the bootloader interprets the version `7.0.0-1-rc7-pve` as greater than `7.0.0-1-pve` when comparing versions.

The simplest solution is to run `apt purge proxmox-{kernel,headers}-7.0.0-1-rc*` to remove the affected packages.

Or just `apt purge proxmox-kernel-7.0.0-1-rc*` if you don't have the headers installed. Then you don't need to keep a kernel pinned and risk it going stale because you forgot to unpin it.

Yes, thanks. I was aware of the naming issue, but was waiting for the final solution from @t.lamprecht, so pinning at least did the job for now. I expect a name change soon to get around that problem.

Although this is the official Linux kernel 7.0.0 release, it is still classified as beta since Ubuntu 26.04 has not yet been released. Proxmox leverages the Ubuntu kernel, enhanced with custom compile flags, built-in ZFS support, and patches optimized for virtual machines and LXC containers. (If you are curious about the patches, check out the proxmox-kernel git.)

Ubuntu 26.04 Release Information

- Final Planned Release Date: April 23, 2026

- Point Release (26.04.1): Scheduled for August 6, 2026 (this is typically when direct upgrades from 24.04 LTS become officially supported).


Yes, we indeed uploaded a proxmox-kernel-7.0.0-2-pve-signed package to circumvent this versioning "issue", rather than adapting the simpler ordering algorithm of proxmox-boot-tool. While the versions were correctly ordered from apt's point of view, the existing p-b-t algorithm is very simple to implement and matches the one used by other tooling like systemd-boot, so this was just the safer choice.
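To illustrate the ordering problem, consider a simple version sort that splits a string into digit and non-digit runs, comparing numbers numerically and text lexically. This is roughly the idea behind GNU `sort -V`; the sketch is simplified and is not the actual proxmox-boot-tool or systemd-boot code.

Such a sort meets `-pve` and `-rc` at the same position and ranks `r` after `p`, so the release candidate looks newer. Bumping to `7.0.0-2-pve` wins at an earlier, numeric position. apt is unaffected because Debian version ordering sorts `~` (as in the package version `7.0.0-1~rc7+1`) before everything else.

```python
import re

# Digit runs vs. non-digit runs, e.g. "7.0.0-1-rc7-pve" -> 7 . 0 . 0 - 1 -rc 7 -pve
_RUNS = re.compile(r"\d+|\D+")


def naive_version_key(version: str) -> list:
    """Simplified version-sort key: digit runs compare as integers, others as text."""
    key = []
    for run in _RUNS.findall(version):
        if run.isdigit():
            key.append((1, int(run), ""))
        else:
            key.append((0, 0, run))
    return key


def newest(versions: list) -> str:
    """Pick the 'newest' kernel version under the naive ordering."""
    return max(versions, key=naive_version_key)


print(newest(["7.0.0-1-pve", "7.0.0-1-rc7-pve"]))                 # 7.0.0-1-rc7-pve
print(newest(["7.0.0-1-pve", "7.0.0-1-rc7-pve", "7.0.0-2-pve"]))  # 7.0.0-2-pve
```

Numeric comparison of digit runs is also what keeps `6.17.13` ahead of `6.17.9`, which a plain string sort would get wrong.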

We never made ourselves directly dependent on Ubuntu's release schedule. While we base on the Ubuntu kernel for now, it really is an independent kernel, as the differences in the build system, kernel config, and adaptations show. In fact, here we mostly waited for Linus' kernel.org release for a broader rollout, and will start to roll out the change of the default kernel soonish.

But given that with kernels, especially new major releases, we like to take our time, it might still only land in the enterprise repository after Ubuntu's release. That's mostly a coincidence, though, not the underlying cause of the timing.