Hi there.

Have a proxmox with some VMs

100 Home Assistan

105 PBServer which I only start few moments before backups are schedulled

have a LXC also, pihole.

have 2 backup jobs schedulled with same config, even though in the log the config appears in different way, although matching.

I also have setup the Telegram webhook notification system, working fine.

the point is that one of the backup jobs is notified and the other one shows a code 400 error

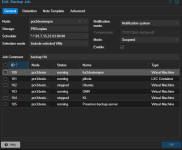

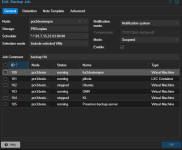

this is job 1

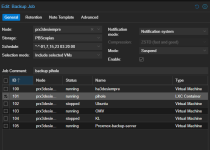

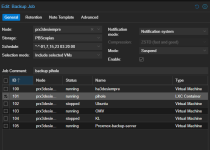

and this is job 2

jobs run correctly, but for the first job (id100)

this is what the log shows

As you can see, 105 machine starts at 02:55

at 03:00 first backup (100) starts and ends successfully, unless the notification

and then at 03:20 second backup (101) starts and ends succesfully with success notification.

Don´t even know where to start.

Have a proxmox with some VMs

100 Home Assistan

105 PBServer which I only start few moments before backups are schedulled

have a LXC also, pihole.

have 2 backup jobs schedulled with same config, even though in the log the config appears in different way, although matching.

I also have setup the Telegram webhook notification system, working fine.

the point is that one of the backup jobs is notified and the other one shows a code 400 error

this is job 1

and this is job 2

jobs run correctly, but for the first job (id100)

this is what the log shows

Code:

Mar 01 02:55:02 prx3desiempre qm[2302635]: start VM 105: UPID:prx3desiempre:002322AB:08DE4A21:69A39C75:qmstart:105:root@pam:

Mar 01 02:55:02 prx3desiempre qm[2302633]: <root@pam> starting task UPID:prx3desiempre:002322AB:08DE4A21:69A39C75:qmstart:105:root@pam:

Mar 01 02:55:02 prx3desiempre systemd[1]: Started 105.scope.

Mar 01 02:55:02 prx3desiempre kernel: tap105i0: entered promiscuous mode

Mar 01 02:55:02 prx3desiempre kernel: vmbr0: port 5(tap105i0) entered blocking state

Mar 01 02:55:02 prx3desiempre kernel: vmbr0: port 5(tap105i0) entered disabled state

Mar 01 02:55:02 prx3desiempre kernel: tap105i0: entered allmulticast mode

Mar 01 02:55:02 prx3desiempre kernel: vmbr0: port 5(tap105i0) entered blocking state

Mar 01 02:55:02 prx3desiempre kernel: vmbr0: port 5(tap105i0) entered forwarding state

Mar 01 02:55:02 prx3desiempre qm[2302635]: VM 105 started with PID 2302647.

Mar 01 02:55:02 prx3desiempre qm[2302633]: <root@pam> end task UPID:prx3desiempre:002322AB:08DE4A21:69A39C75:qmstart:105:root@pam: OK

Mar 01 02:55:02 prx3desiempre CRON[2302631]: pam_unix(cron:session): session closed for user root

Mar 01 02:55:10 prx3desiempre pvestatd[990]: PBScopias: error fetching datastores - 500 Can't connect to 192.168.0.231:8007 (No route to host)

Mar 01 02:55:18 prx3desiempre pvestatd[990]: PBScopias: error fetching datastores - 500 Can't connect to 192.168.0.231:8007 (Connection refused)

Mar 01 03:00:07 prx3desiempre pvescheduler[2304255]: <root@pam> starting task UPID:prx3desiempre:00232900:08DEC167:69A39DA7:vzdump:100:root@pam:

Mar 01 03:00:07 prx3desiempre pvescheduler[2304256]: INFO: starting new backup job: vzdump 100 --quiet 1 --notes-template '{{guestname}}{{node}} Acaba de hacerse una copia de seguridad' --mode suspend --compress gzip --fleecing 0 --node prx3desiempre --notification-mode notification-system --prune-backups 'keep-monthly=2' --storage PBScopias

Mar 01 03:00:07 prx3desiempre pvescheduler[2304256]: INFO: Starting Backup of VM 100 (qemu)

Mar 01 03:00:08 prx3desiempre qm[2304279]: <root@pam> starting task UPID:prx3desiempre:00232918:08DEC1EE:69A39DA8:qmpause:100:root@pam:

Mar 01 03:00:08 prx3desiempre qm[2304280]: suspend VM 100: UPID:prx3desiempre:00232918:08DEC1EE:69A39DA8:qmpause:100:root@pam:

Mar 01 03:00:08 prx3desiempre qm[2304279]: <root@pam> end task UPID:prx3desiempre:00232918:08DEC1EE:69A39DA8:qmpause:100:root@pam: OK

Mar 01 03:00:11 prx3desiempre pvescheduler[2304256]: VM 100 qmp command failed - VM 100 qmp command 'guest-ping' failed - got timeout

Mar 01 03:00:26 prx3desiempre pvestatd[990]: status update time (9.059 seconds)

Mar 01 03:01:27 prx3desiempre pvestatd[990]: status update time (10.130 seconds)

Mar 01 03:01:45 prx3desiempre pvestatd[990]: status update time (7.788 seconds)

Mar 01 03:01:55 prx3desiempre pvestatd[990]: status update time (8.601 seconds)

Mar 01 03:02:08 prx3desiempre pvestatd[990]: status update time (10.332 seconds)

Mar 01 03:02:16 prx3desiempre pvestatd[990]: status update time (7.892 seconds)

Mar 01 03:02:22 prx3desiempre qm[2305101]: <root@pam> starting task UPID:prx3desiempre:00232C4E:08DEF61C:69A39E2E:qmresume:100:root@pam:

Mar 01 03:02:22 prx3desiempre qm[2305102]: resume VM 100: UPID:prx3desiempre:00232C4E:08DEF61C:69A39E2E:qmresume:100:root@pam:

Mar 01 03:02:22 prx3desiempre qm[2305101]: <root@pam> end task UPID:prx3desiempre:00232C4E:08DEF61C:69A39E2E:qmresume:100:root@pam: OK

Mar 01 03:02:22 prx3desiempre pvescheduler[2304256]: INFO: Finished Backup of VM 100 (00:02:15)

Mar 01 03:02:22 prx3desiempre pvescheduler[2304256]: INFO: Backup job finished successfully

Mar 01 03:02:22 prx3desiempre perl[2304256]: could not notify via target `notificaciones_telegtram`: https://api.telegram.org/bot<masked>/sendMessage: status code 400

Mar 01 03:03:21 prx3desiempre pvestatd[990]: got timeout

Mar 01 03:03:28 prx3desiempre pvestatd[990]: status update time (9.563 seconds)

Mar 01 03:10:01 prx3desiempre CRON[2307581]: pam_unix(cron:session): session opened for user root(uid=0) by (uid=0)

Mar 01 03:10:01 prx3desiempre CRON[2307582]: (root) CMD (test -e /run/systemd/system || SERVICE_MODE=1 /sbin/e2scrub_all -A -r)

Mar 01 03:10:01 prx3desiempre CRON[2307581]: pam_unix(cron:session): session closed for user root

Mar 01 03:10:35 prx3desiempre systemd[1]: Starting e2scrub_all.service - Online ext4 Metadata Check for All Filesystems...

Mar 01 03:10:35 prx3desiempre systemd[1]: e2scrub_all.service: Deactivated successfully.

Mar 01 03:10:35 prx3desiempre systemd[1]: Finished e2scrub_all.service - Online ext4 Metadata Check for All Filesystems.

Mar 01 03:17:01 prx3desiempre CRON[2309792]: pam_unix(cron:session): session opened for user root(uid=0) by (uid=0)

Mar 01 03:17:01 prx3desiempre CRON[2309793]: (root) CMD (cd / && run-parts --report /etc/cron.hourly)

Mar 01 03:17:01 prx3desiempre CRON[2309792]: pam_unix(cron:session): session closed for user root

Mar 01 03:17:24 prx3desiempre pvestatd[990]: status update time (5.063 seconds)

Mar 01 03:20:07 prx3desiempre pvescheduler[2310743]: <root@pam> starting task UPID:prx3desiempre:00234258:08E09666:69A3A257:vzdump:101:root@pam:

Mar 01 03:20:08 prx3desiempre pvescheduler[2310744]: INFO: starting new backup job: vzdump 101 --storage PBScopias --prune-backups 'keep-monthly=2' --notification-mode notification-system --node prx3desiempre --mailnotification always --fleecing 0 --compress zstd --mode suspend --notes-template '{{guestname}} acaba de hacerse una copia de seguridad' --quiet 1

Mar 01 03:20:08 prx3desiempre pvescheduler[2310744]: INFO: Starting Backup of VM 101 (lxc)

Mar 01 03:20:45 prx3desiempre pvescheduler[2310744]: INFO: Finished Backup of VM 101 (00:00:37)

Mar 01 03:20:45 prx3desiempre pvescheduler[2310744]: INFO: Backup job finished successfully

Mar 01 03:20:46 prx3desiempre perl[2310744]: notified via target `notificaciones_telegtram`

Mar 01 03:24:10 prx3desiempre systemd[1]: Starting man-db.service - Daily man-db regeneration...

Mar 01 03:24:10 prx3desiempre systemd[1]: man-db.service: Deactivated successfully.

Mar 01 03:24:10 prx3desiempre systemd[1]: Finished man-db.service - Daily man-db regeneration.

Mar 01 03:30:01 prx3desiempre CRON[2313938]: pam_unix(cron:session): session opened for user root(uid=0) by (uid=0)

Mar 01 03:30:01 prx3desiempre CRON[2313939]: (root) CMD (test -e /run/systemd/system || SERVICE_MODE=1 /usr/lib/x86_64-linux-gnu/e2fsprogs/e2scrub_all_cron)

Mar 01 03:30:01 prx3desiempre CRON[2313938]: pam_unix(cron:session): session closed for user root

Mar 01 03:31:28 prx3desiempre pvestatd[990]: status update time (9.855 seconds)

Mar 01 03:39:20 prx3desiempre pvestatd[990]: got timeout

Mar 01 03:55:01 prx3desiempre CRON[2321861]: pam_unix(cron:session): session opened for user root(uid=0) by (uid=0)

Mar 01 03:55:02 prx3desiempre CRON[2321862]: (root) CMD (/usr/sbin/qm shutdown 105)

Mar 01 03:55:02 prx3desiempre qm[2321863]: <root@pam> starting task UPID:prx3desiempre:00236DC8:08E3C8AA:69A3AA86:qmshutdown:105:root@pam:

Mar 01 03:55:02 prx3desiempre qm[2321864]: shutdown VM 105: UPID:prx3desiempre:00236DC8:08E3C8AA:69A3AA86:qmshutdown:105:root@pam:

Mar 01 03:55:03 prx3desiempre kernel: tap105i0: left allmulticast mode

Mar 01 03:55:03 prx3desiempre kernel: vmbr0: port 5(tap105i0) entered disabled state

Mar 01 03:55:03 prx3desiempre qmeventd[639]: read: Connection reset by peer

Mar 01 03:55:03 prx3desiempre systemd[1]: 105.scope: Deactivated successfully.

Mar 01 03:55:03 prx3desiempre systemd[1]: 105.scope: Consumed 3min 50.638s CPU time.

Mar 01 03:55:03 prx3desiempre qm[2321863]: <root@pam> end task UPID:prx3desiempre:00236DC8:08E3C8AA:69A3AA86:qmshutdown:105:root@pam: OK

Mar 01 03:55:03 prx3desiempre CRON[2321861]: pam_unix(cron:session): session closed for user root

Mar 01 03:55:04 prx3desiempre qmeventd[2321877]: Starting cleanup for 105

Mar 01 03:55:04 prx3desiempre qmeventd[2321877]: Finished cleanup for 105As you can see, 105 machine starts at 02:55

at 03:00 first backup (100) starts and ends successfully, unless the notification

and then at 03:20 second backup (101) starts and ends succesfully with success notification.

Don´t even know where to start.