Every morning, ~7-8 GiB of SWAP is in use by KVM processes and Proxmox daemons (pvedaemon, pve-ha-crm, etc.).
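A minimal sketch like the following could be used to see which processes actually hold the swapped-out pages. It assumes a standard Linux /proc layout and reads the VmSwap field of each /proc/<pid>/status. The function name `swap_by_process` and its optional directory argument are hypothetical; the argument exists only so the function can be tested against a fake /proc tree. VmSwap covers anonymous private memory only, so swapped-out shared memory (shmem) is not counted.

```shell
#!/usr/bin/env bash
# List processes that have pages in swap, largest first.
# Output columns: swap in kB, PID, process name.
# The optional argument (default /proc) exists only for testing.
swap_by_process() {
  local proc_root="${1:-/proc}"
  local status pid name swap_kb
  for status in "$proc_root"/[0-9]*/status; do
    [ -r "$status" ] || continue
    # Kernel threads have no VmSwap line; errors from exited processes are ignored.
    swap_kb=$(awk '$1 == "VmSwap:" {print $2; exit}' "$status" 2>/dev/null)
    [ -n "$swap_kb" ] && [ "$swap_kb" -gt 0 ] || continue
    # The Name field is tab-separated and may contain spaces.
    name=$(awk -F'\t' '$1 == "Name:" {print $2; exit}' "$status" 2>/dev/null)
    pid=$(basename "$(dirname "$status")")
    printf '%s %s %s\n' "$swap_kb" "$pid" "$name"
  done | sort -rn
}
```

Run as root, e.g. `swap_by_process | head -n 20`, to list the 20 largest swap users.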

During the day, vmstat shows so = 0, i.e. nothing is actively being swapped out.

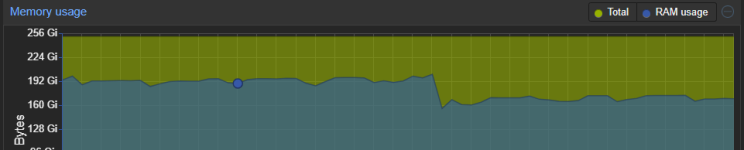

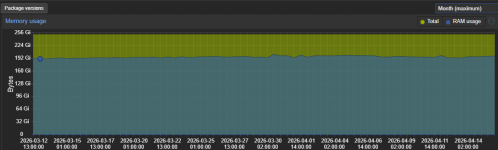

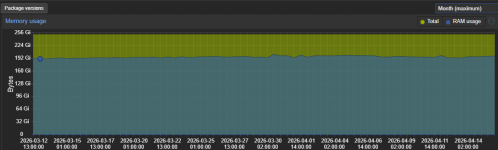

The weekly RAM graph confirms free RAM never drops below ~35 GiB.

swapoff -a && swapon -a fixes it temporarily, but SWAP refills overnight.
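Because `swapoff -a` has to read every swapped page back into RAM, it may be worth checking first that enough memory is available. This is a sketch assuming the usual /proc/meminfo fields (MemAvailable, SwapTotal, SwapFree); the function names and the 4 GiB safety margin are hypothetical choices, not Proxmox defaults.

```shell
#!/usr/bin/env bash
# Print one field (in kB) from a meminfo-style file.
meminfo_kb() {
  awk -v key="$1:" '$1 == key {print $2; exit}' "${2:-/proc/meminfo}"
}

# Succeed only if MemAvailable exceeds the swap currently in use plus a
# safety margin (default 4 GiB, an arbitrary assumption), so that
# "swapoff -a" can pull every swapped page back into RAM.
swapoff_is_safe() {
  local meminfo="${1:-/proc/meminfo}" margin_kb="${2:-4194304}"
  local avail total free used
  avail=$(meminfo_kb MemAvailable "$meminfo")
  total=$(meminfo_kb SwapTotal "$meminfo")
  free=$(meminfo_kb SwapFree "$meminfo")
  used=$(( total - free ))
  (( avail > used + margin_kb ))
}

# Hypothetical wrapper around the command from the post (needs root).
cycle_swap() {
  if swapoff_is_safe; then
    swapoff -a && swapon -a
  else
    echo "not enough available memory to empty swap safely" >&2
    return 1
  fi
}
```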

The backups run at night. Everything used to work fine; the problem described above started in January.
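To check whether the swap-outs coincide with the backup window, the cumulative pswpout counter in /proc/vmstat could be sampled overnight and logged with timestamps, then compared by hand with the backup job times. A sketch under that assumption; the function names and the 60-second default interval are hypothetical. The counter is in pages (4 KiB on x86-64).

```shell
#!/usr/bin/env bash
# Cumulative number of pages swapped out since boot.
read_pswpout() {
  awk '$1 == "pswpout" {print $2; exit}' "${1:-/proc/vmstat}"
}

# Every <interval> seconds, print a timestamped line if pages were swapped
# out since the previous sample. Runs until interrupted.
log_swapouts() {
  local interval="${1:-60}" vmstat_file="${2:-/proc/vmstat}"
  local prev cur
  prev=$(read_pswpout "$vmstat_file")
  while sleep "$interval"; do
    cur=$(read_pswpout "$vmstat_file")
    if (( cur > prev )); then
      printf '%s swapped out %d pages\n' "$(date '+%F %T')" "$(( cur - prev ))"
    fi
    prev=$cur
  done
}
```

For example, `log_swapouts 60 >> /root/swapout.log &` from a root shell that stays open overnight (the log path is only an example). If sysstat is installed, `sar -W` reports the same swap-in/swap-out rates.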

Question:

Why does SWAP fill up nightly when RAM usage never exceeds 86% and swappiness is 1?

Is ZFS ARC eviction latency still a valid cause even when global free RAM never drops below 35 GiB?
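One way to look into this is to sample the ARC's current size against its target (c) and maximum (c_max) while the backups run, e.g. from cron every few minutes. A minimal sketch, assuming the standard OpenZFS kstat file /proc/spl/kstat/zfs/arcstats (two header lines, then name/type/value rows); the function names are hypothetical. The arc_summary tool from the ZFS utilities shows the same data in more detail.

```shell
#!/usr/bin/env bash
# Print one value from an OpenZFS kstat file such as arcstats.
# Lines 1-2 are headers; data rows are "<name> <type> <value>".
arc_field() {
  awk -v key="$1" 'NR > 2 && $1 == key {print $3; exit}' \
    "${2:-/proc/spl/kstat/zfs/arcstats}"
}

# Format a byte count as GiB with one decimal.
to_gib() {
  awk -v b="$1" 'BEGIN {printf "%.1f GiB\n", b / 1073741824}'
}

# One timestamped line: current ARC size, target size (c) and maximum (c_max).
arc_snapshot() {
  local f="${1:-/proc/spl/kstat/zfs/arcstats}"
  printf '%s size=%s c=%s c_max=%s\n' "$(date '+%F %T')" \
    "$(to_gib "$(arc_field size "$f")")" \
    "$(to_gib "$(arc_field c "$f")")" \
    "$(to_gib "$(arc_field c_max "$f")")"
}
```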

Could memory fragmentation explain this?
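Fragmentation can be checked roughly with /proc/buddyinfo, which lists per node and zone how many free blocks of 2^0 to 2^10 pages exist. If plenty of memory is free but very little of it sits in larger blocks, the kernel may have to reclaim or compact memory to satisfy higher-order allocations even though the global free figure looks comfortable. A sketch, assuming 4 KiB base pages and the usual buddyinfo layout; the function name and the order-4 default threshold are hypothetical.

```shell
#!/usr/bin/env bash
# Summarise /proc/buddyinfo per node and zone: total free memory and how much
# of it sits in blocks of at least 2^min_order pages.
# Assumes 4 KiB base pages; columns 5..NF are the free-block counts for
# order 0, 1, 2, ...
buddy_summary() {
  local min_order="${1:-4}" file="${2:-/proc/buddyinfo}"
  awk -v min="$min_order" '
    {
      total = 0; high = 0
      for (i = 5; i <= NF; i++) {
        order = i - 5
        kib = $i * (2 ^ order) * 4
        total += kib
        if (order >= min) high += kib
      }
      node = $2; sub(/,/, "", node)
      printf "node %s zone %s: free %d MiB, in order>=%d blocks %d MiB\n",
             node, $4, total / 1024, min, high / 1024
    }' "$file"
}
```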

Has anyone had a similar problem?

My ZFS storage is only 8 TB, so decreasing the ARC limit to 96 GiB should not be a problem, should it?

Would that be the correct way?
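For reference, the byte values in the planned zfs.conf are 96 GiB, 4 GiB and 8 GiB (1 GiB = 1024^3 bytes). A small helper can reproduce them, which makes it easy to try other sizes; the function names are hypothetical, and the output still has to be written to /etc/modprobe.d/zfs.conf by hand.

```shell
#!/usr/bin/env bash
# Convert GiB to bytes (1 GiB = 1024^3 bytes), the unit the zfs module expects.
gib_to_bytes() {
  echo $(( $1 * 1024 * 1024 * 1024 ))
}

# Print zfs.conf option lines for max/min/sys_free sizes given in GiB.
zfs_conf_lines() {
  local max_gib="$1" min_gib="$2" sys_free_gib="$3"
  printf 'options zfs zfs_arc_max=%s\n' "$(gib_to_bytes "$max_gib")"
  printf 'options zfs zfs_arc_min=%s\n' "$(gib_to_bytes "$min_gib")"
  printf 'options zfs zfs_arc_sys_free=%s\n' "$(gib_to_bytes "$sys_free_gib")"
}
```

`zfs_conf_lines 96 4 8` prints the same three options lines as the planned config.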


- Proxmox VE 8.4.17 (upgraded, not a fresh install), ZFS 2.2.9-pve1, Linux 6.8.12-20-pve

- 256 GiB RAM, single CPU (no NUMA)

- vm.swappiness = 1 (lowered from 60 to 5, then to 1)

- ZFS ARC: default limit of 50% of RAM = 125.7 GiB, currently 125.6 GiB in use (99.9%); there is no /etc/modprobe.d/zfs.conf

- Not yet updated to ZFS 2.3.0-pve1; there is an update about the usage...
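To confirm which values are actually in effect, the running kernel can be queried directly. A sketch, assuming the standard /proc/sys and /sys/module/zfs/parameters paths; the function name and its two path arguments are hypothetical (they only exist for testing). Per the OpenZFS module parameter documentation, 0 for these zfs parameters means the built-in default is used, which is what would be expected without a /etc/modprobe.d/zfs.conf.

```shell
#!/usr/bin/env bash
# Report the effective swappiness and ZFS ARC module parameters.
# For zfs_arc_max, zfs_arc_min and zfs_arc_sys_free, 0 means "built-in default".
show_memory_tunables() {
  local sysctl_root="${1:-/proc/sys}" zfs_params="${2:-/sys/module/zfs/parameters}"
  local p
  printf 'vm.swappiness=%s\n' "$(cat "$sysctl_root/vm/swappiness")"
  for p in zfs_arc_max zfs_arc_min zfs_arc_sys_free; do
    # Skip parameters that are not exposed (e.g. zfs module not loaded).
    if [ -r "$zfs_params/$p" ]; then
      printf '%s=%s\n' "$p" "$(cat "$zfs_params/$p")"
    fi
  done
}
```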

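Code:

```shell
# Limit the ZFS ARC to at most 96 GiB and at least 4 GiB,
# and let the ARC aim to keep 8 GiB of system memory free.
cat << 'EOF' > /etc/modprobe.d/zfs.conf
options zfs zfs_arc_max=103079215104
options zfs zfs_arc_min=4294967296
options zfs zfs_arc_sys_free=8589934592
EOF

# Rebuild the initramfs so the options are also used when the zfs
# module is loaded early during boot (e.g. root on ZFS).
update-initramfs -u
```

Kind Regards

Raid007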